| IN THIS ARTICLE Reading time: 20 minutes

The ninth article of twelve, and the first article of Act IV, The Decision. Acts I through III established orientation, guardrails, and application. Act IV asks the question those three acts have been building toward: how should a Caribbean board actually govern AI? The answer most often given in professional-services literature (a list of principles and a reference to the EU AI Act) is not wrong, but it tells a Caribbean director almost nothing about what to do on Monday morning. This article takes the opposite approach: it begins from the specific decisions a Caribbean board, its risk committee, its audit committee, and its management team will face this year, and asks where each decision belongs.

By the end of this article you will be able to:

1. Identify the eleven AI-related decisions that, in our engagement experience, every Caribbean board of scale will face in the next two years, and recognise which of them are routinely sent to the wrong body.
2. Apply the D-AGENTICA™ AI Governance Decision-Rights Matrix as the named instrument, mapping each decision to its proper locus across the board, the risk committee, the audit committee, and management.
3. Address the two structural questions most Caribbean boards have not yet faced (whether AI warrants its own board committee, and whether AI risks belong in the existing enterprise risk register or in their own) and understand the considerations that determine the right answer for a given institution. |

The phone call

In late February of this year, the chair of a Caribbean institution’s board of directors telephoned me from her car between meetings. She had, the previous afternoon, chaired a board meeting at which the chief executive had presented an AI strategy paper for board approval. The paper was twenty-four pages long. It contained references to agentic intelligence, large language models, retrieval-augmented generation, and a diagram of a proposed AI capability stack. The board, she said, had asked good questions for forty-five minutes and had ultimately approved the paper unanimously. She was telephoning because she was not at all sure what, exactly, the board had approved — or whether the approval was within the board’s gift in the first place.

Her question, when she finally arrived at it, was the right one and the one most Caribbean board chairs we have spoken with this year are circling without quite asking. “Was that paper for the board,” she said, “or was it for me? Should the decisions in it have been ours to make, or had I just allowed the chief executive to outsource a management decision to a body that does not have the time or the technical foundation to make it well?” The honest answer was that the paper had contained at least four distinct decisions, only one of which properly belonged to the board, two of which belonged to the risk and audit committees, and one of which should have been made by the chief executive without ever being put to a vote at all. The board’s unanimous approval had blurred all four into a single act of governance, and the institution would discover the cost of that blur over the following twelve months in the form of unclear accountability when something went wrong.

I have come to think of this as the central problem of AI governance in the Caribbean boardroom in 2026. It is not a problem of principles. It is a problem of locus. Caribbean boards are not, in general, indifferent to AI; they are intermittently over-involved in operational AI decisions they have no business making and intermittently under-involved in strategic AI decisions they cannot delegate. The single most useful exercise a Caribbean board can do this year is to specify, decision by decision, where each AI-related decision properly belongs. This article is about how to do that.

Where we are in the series

Eight articles have come before this one. Article 1 established the agentic decade and offered the Three-Question Board Diagnostic. Article 2 separated AI from the vendor noise and offered the Agentic Vendor Assessment. Article 3 set out the productivity economics for Caribbean SMEs with the SME AI Sequencing Framework. Articles 4 and 5, Act II’s guardrails, gave us the Data Sovereignty Decision Matrix and the Caribbean Regulatory Readiness Self-Assessment. Articles 6, 7, and 8 constituted Act III: the Financial Services AI Use-Case Map, the Caribbean Workforce Transition Map, and the Finance Function AI Maturity Model. Eight named instruments. Each one a tool for a specific decision context. The series has, with this article, exhausted what we can usefully say about AI in the abstract and turned to the concrete question of how Caribbean institutions ought to be governed in light of all of it.

Act IV — The Decision — comprises the final four articles. Article 9, this one, takes up the AI governance question directly. Article 10 will offer sector spotlights — short readings on what AI adoption looks like in Caribbean tourism, manufacturing, and the public sector specifically. Article 11 unveils the comprehensive D-AGENTICA™ Maturity Model, of which the Finance Function model in Article 8 was the first domain instance. Article 12 closes the series with the call to the Caribbean boardroom that the entire programme has been building toward. The four articles constitute, taken together, the Dawgen Global position on what good AI governance in the Caribbean should look like. We hope they are useful; we know they are timely.

The eleven AI decisions a Caribbean board will face

The most common defect in Caribbean boardroom AI discussions is that the participants are not, in fact, discussing the same decision. The phrase “approving the AI strategy” can mean any of half a dozen different things depending on who is using it — and most board minutes do not distinguish between them. The remedy is to begin with a list. In our engagement experience, every Caribbean board of scale will face, over the next two years, some version of the following eleven AI-related decisions. They are not all of the same kind. They do not all belong to the same body. The board that confuses them will govern AI badly; the board that distinguishes them will govern AI well. This is a more important point than it sounds.

The first decision is whether the institution should have an AI strategy at all, and if so, with what level of ambition relative to peers. This is a posture decision, not a content decision; it sits squarely with the board because no other body has the authority to commit the institution to a strategic direction or to decline to. Caribbean boards too often skip this decision and proceed directly to approving the strategy document — which means the question of whether to pursue AI at all has been answered tacitly, by management, in the act of preparing the paper. The second is approval of the AI strategy document itself, once management has prepared it. This is a content decision and a board approval decision; the board reads the document and gives or withholds its imprimatur. The third is approval of the budget envelope for AI — the cumulative annual spend the institution is willing to authorise across all AI initiatives. This is a financial decision and properly a board approval; in our experience, this is the decision Caribbean boards approve in the most blurred way, with budget figures embedded inside strategy documents rather than separately stated. The fourth is approval of specific use cases within that envelope: the underwriting model, the customer-service agent, the audit-team AI tool. This is operational rather than strategic and properly belongs at the risk-committee level, with the board reviewing rather than approving. The fifth is the choice of vendors and partners, and the decision whether to build, buy, or co-develop with a Big Four advisory or a regional technology firm. This is a procurement decision, properly management’s, with the board informed where the financial scale or the strategic exposure is material.

The sixth decision is the data architecture of the institution’s AI deployment: where data is stored, in what jurisdiction, under whose control, with what residency rights, accessible to which systems. Article 4’s data sovereignty work bears on this; the decision is technical in detail but strategic in implication, and properly sits with the risk committee. The seventh is the AI risk appetite statement: how much variance in AI-mediated outcomes the institution will tolerate, and against what categories of consequence. This is one of the two decisions the board most clearly owns and most often does not realise it owns. A risk appetite statement that has not been authored at board level is not a risk appetite statement; it is a management preference that has been allowed to substitute for governance. The eighth is the control framework: what controls exist around AI systems, what testing and monitoring is in place, and who reviews them. This is operational detail, properly the risk committee’s territory, with the audit committee independently verifying. The ninth is the workforce position: whether the institution’s AI strategy implies redundancies, redeployment, or net hiring, and on what timetable. Article 7 took up this question as substance; here the question is governance, and the workforce position is one the board reviews rather than owns, except where the human-resource implications are material enough to constitute a strategic choice in their own right. The tenth is disclosure: what the institution will say about its AI use to its regulators, its auditors, its customers, and its public. Disclosure is reputational and legal; it sits with the board because no other body has the authority to commit the institution’s voice. The eleventh is crisis response: the playbook for what happens when an AI system causes a public failure, a regulatory challenge, a customer complaint, or an audit finding. The board owns the design of crisis response; management owns its execution; the boundary between the two is itself a governance decision.

Eleven decisions. Distinct. Routinely conflated. The next section maps each to where it belongs.

The D-AGENTICA™ AI Governance Decision-Rights Matrix

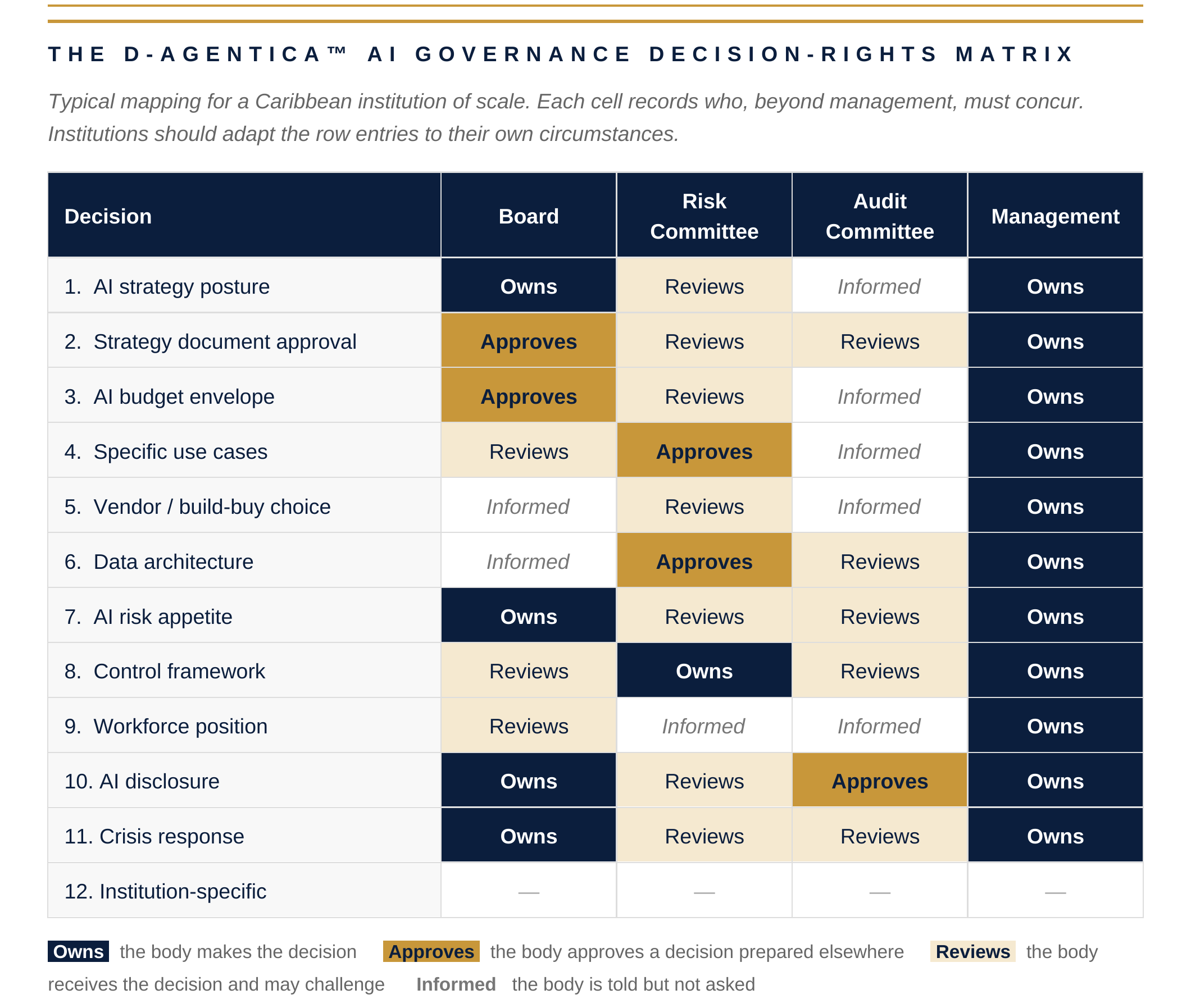

The named instrument of this article is the D-AGENTICA™ AI Governance Decision-Rights Matrix. It is a four-column instrument: the columns are the Board, the Risk Committee, the Audit Committee, and Management. The rows are the eleven decisions above, plus a twelfth row reserved for institution-specific decisions that the matrix surfaces but does not pre-name. Against each decision, the matrix records one of four entries: Owns (the body makes the decision), Approves (the body approves a decision prepared elsewhere), Reviews (the body receives the decision and may challenge but not block), or Informed (the body is told but not asked).

We are deliberate about this four-state vocabulary, which differs slightly from the RACI conventions some institutions are familiar with. The classical RACI categories — Responsible, Accountable, Consulted, Informed — work poorly for AI governance because they conflate the preparation of a decision with its approval, and they do not distinguish between an active approval right and a review-with-challenge right. AI governance demands the distinction: there are decisions a board should approve actively (a strategy, a budget envelope, a risk appetite statement) and decisions a board should review without owning (specific vendor selections, technical architecture choices). Conflating the two produces either rubber-stamp boards or over-reaching boards, both of which are pathologies.

Three observations about the populated matrix above are worth making, because they are the substance behind the cell entries. First, the board’s own column is not uniformly heavy — and this is correct. A board that Owns every decision is a board that is over-reaching; a board that Owns only the genuinely strategic decisions (posture, risk appetite, disclosure, crisis-response design) and Approves the two financial-strategic decisions (strategy document, budget envelope) is a board doing its proper work. The remaining decisions belong below board level. Second, the Risk Committee owns control framework decisions and approves use-case and data architecture decisions; this concentration of authority below the board is deliberate and is the principal reason a separate AI committee is unnecessary in most Caribbean institutions, a point we return to in the next section. Third, the Management column reads uniformly Owns — and this is also correct, because the matrix’s question is not who is responsible (management always is) but who, beyond management, must agree before the decision is taken or the action executed. This is the part of governance most commonly missed: management is responsible for everything; the matrix answers who else must concur.
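For readers who keep governance artefacts in structured form, the matrix can be sketched as a small lookup table. What follows is a minimal illustration, not the published instrument: it fills in only the cells this article states in prose, the row keys and the `must_concur` helper are hypothetical names, and recording the audit committee's independent verification of controls as "Reviews" is our assumption. The optional twelfth, institution-specific row is omitted.

```python
from enum import Enum
from typing import Dict, List


class Right(Enum):
    """The four-state vocabulary of the matrix."""
    OWNS = "Owns"
    APPROVES = "Approves"
    REVIEWS = "Reviews"
    INFORMED = "Informed"


BODIES = ("board", "risk_committee", "audit_committee", "management")

# Only cells stated in the article's prose are recorded here.
# Cells the text does not mention are left out (unspecified), not guessed.
# Assumption: the audit committee "independently verifying" the control
# framework is recorded as REVIEWS.
_STATED: Dict[str, Dict[str, Right]] = {
    "strategic_posture": {"board": Right.OWNS},
    "strategy_document": {"board": Right.APPROVES},
    "budget_envelope": {"board": Right.APPROVES},
    "use_cases": {"board": Right.REVIEWS, "risk_committee": Right.APPROVES},
    "vendors_and_partners": {"board": Right.INFORMED},
    "data_architecture": {"risk_committee": Right.APPROVES},
    "risk_appetite": {"board": Right.OWNS},
    "control_framework": {
        "risk_committee": Right.OWNS,
        "audit_committee": Right.REVIEWS,
    },
    "workforce_position": {"board": Right.REVIEWS},
    "disclosure": {"board": Right.OWNS},
    "crisis_response_design": {"board": Right.OWNS},
}

# The Management column reads uniformly Owns.
MATRIX: Dict[str, Dict[str, Right]] = {
    decision: {**cells, "management": Right.OWNS}
    for decision, cells in _STATED.items()
}


def must_concur(decision: str) -> List[str]:
    """Bodies beyond management that own or approve the given decision."""
    row = MATRIX[decision]
    return [
        body
        for body in BODIES
        if body != "management"
        and row.get(body) in (Right.OWNS, Right.APPROVES)
    ]
```

Calling `must_concur("use_cases")` returns only the risk committee, which is the point of the third observation: the useful question is not who is responsible, but who else must agree.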

| WHAT WE OBSERVE ACROSS CARIBBEAN BOARD ENGAGEMENTS

In our experience working with Caribbean boards on AI governance over the past eighteen months, the most common defect is not the absence of governance but its concentration. A single agenda item — “AI strategy approval” — bundles four to seven of the eleven decisions above into one vote. The board votes once and experiences itself as having governed; the institution records a board approval and experiences itself as having been governed. Twelve months later, when a specific AI-mediated outcome produces a regulatory question or a customer complaint, no one — including the board chair — can identify which decision authorised the specific behaviour at issue. The remedy is not more meetings or longer papers. It is *disaggregation*. A single AI strategy presentation should produce three to five distinct, separately minuted board decisions, each tied explicitly to a row of the Decision-Rights Matrix. |

Two structural questions Caribbean boards are not yet asking

The matrix above answers the question of where AI decisions belong. There are two further questions, structural rather than procedural, that most Caribbean boards have not yet faced. They will face them in the next twelve to twenty-four months, and the boards that have thought about them in advance will have a meaningful advantage over those that encounter them cold.

Does AI need its own board committee?

The first structural question is whether the AI portfolio is large enough, complex enough, or strategically central enough to warrant its own board committee — separate from the existing risk committee, audit committee, and remuneration committee. Several large institutions globally have established AI committees or technology committees over the past two years; the question is whether Caribbean institutions should follow.

Our position, after engagement experience with several Caribbean boards on this specific question, is no, but with conditions. For a Caribbean institution at the scale of even the largest banks, manufacturers, and conglomerates in the region, AI is not yet a category of board oversight that warrants its own standing committee. The volume of decisions is not sufficient to justify the meeting cadence; the technical specialism among Caribbean directors is not yet deep enough to populate a committee that would do its work credibly. However, this answer has a shelf life. The conditions under which a separate AI committee becomes the right answer are: either the institution’s AI portfolio reaches a scale where it constitutes more than fifteen percent of the technology budget, or the institution operates in a regulated sector where AI-specific regulation has become substantive enough to demand specialist board oversight, or the institution has experienced an AI-related public failure of sufficient seriousness to require visible structural response. Until one of those conditions obtains, AI governance properly sits within the risk committee, with explicit reporting lines to the board and to the audit committee. We expect at least three Caribbean institutions to cross one of those thresholds in the next twenty-four months. Boards should be ready.
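The three conditions are alternatives: any one of them is enough. A minimal sketch of that test follows, with hypothetical function and parameter names. Two of the three inputs are judgments the board itself must make, so the code only records the logic and does not replace the judgment.

```python
def warrants_ai_committee(
    ai_share_of_tech_budget: float,
    substantive_ai_regulation: bool,
    serious_public_ai_failure: bool,
) -> bool:
    """Return True if any one of the three conditions is met.

    ai_share_of_tech_budget is a fraction (0.15 means fifteen percent);
    the threshold is strictly more than fifteen percent.
    """
    return (
        ai_share_of_tech_budget > 0.15
        or substantive_ai_regulation
        or serious_public_ai_failure
    )
```

An institution spending exactly fifteen percent of its technology budget on AI, with no sector-specific regulation and no public failure, stays within the risk committee under this reading.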

Where do AI risks belong in the risk register?

The second structural question is whether AI-related risks should be integrated into the institution’s existing enterprise risk register — alongside operational risk, credit risk, market risk, regulatory risk — or whether AI deserves its own risk category.

Our position here is that AI is not a separate risk category, and treating it as one produces worse governance, not better. The risks AI creates are not a new species; they are operational risks (model failure, vendor concentration), regulatory risks (compliance breach, disclosure failure), reputational risks (public AI incident), strategic risks (capability lag relative to peers), and human-capital risks (workforce transition). Integrating these into the existing categories forces the risk committee to think about AI in terms it already knows how to govern. Treating AI as a separate category creates a risk silo that neither the chief risk officer nor the risk committee chair has the technical foundation to govern well, and that produces the worst of both worlds: visible governance theatre and invisible substantive risk. The remedy is to treat the existing risk register as the structure and to insist that every AI-related risk is recorded in its proper underlying category, with an explicit AI-attribution tag. This produces a register that the risk committee can govern using the disciplines it already has, and that allows AI-related risk to be reported across categories when the board asks for it.
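The tagging approach can be illustrated with a short sketch. The field names, the `ai_risks_by_category` helper, and the sample entries are hypothetical; the AI-related examples are taken from the categories named above, and the credit entry is invented purely to show an untagged risk.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class RiskEntry:
    category: str              # the existing enterprise risk category
    description: str
    ai_attributed: bool = False  # the AI-attribution tag


# Illustrative register: AI risks sit inside existing categories.
REGISTER: List[RiskEntry] = [
    RiskEntry("operational", "Model failure", ai_attributed=True),
    RiskEntry("operational", "Vendor concentration", ai_attributed=True),
    RiskEntry("regulatory", "Disclosure failure", ai_attributed=True),
    RiskEntry("reputational", "Public AI incident", ai_attributed=True),
    RiskEntry("strategic", "Capability lag relative to peers", ai_attributed=True),
    RiskEntry("human_capital", "Workforce transition", ai_attributed=True),
    RiskEntry("credit", "Counterparty default"),  # hypothetical non-AI entry
]


def ai_risks_by_category(register: List[RiskEntry]) -> Dict[str, List[str]]:
    """Cross-category view of AI-tagged risks, for when the board asks."""
    grouped: Dict[str, List[str]] = defaultdict(list)
    for entry in register:
        if entry.ai_attributed:
            grouped[entry.category].append(entry.description)
    return dict(grouped)
```

The register keeps one structure; the AI view is a filter over it rather than a separate list, which is what prevents the silo.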

What the external auditor is now looking for

The third concrete differentiator of this article concerns a development most Caribbean boards have not yet been told about, and one that the larger audit firms, globally and increasingly in the Caribbean, are now operationalising. The external auditor’s annual review of an institution’s governance arrangements is, for the first time, including AI governance as a specific line of inquiry. This is a quiet but important shift, and it has consequences.

The auditor will now ask, of any institution with material AI deployment, four specific questions. First: does the board have a documented position on AI governance? If the answer is no, that is itself a finding. Second: is there a decision-rights mapping that distinguishes board-level from management-level AI decisions? If the answer is no, the auditor will note the absence. Third: does the audit committee receive a regular report on AI controls, separate from the general controls report? And fourth: has management identified the AI-related risks in the enterprise risk register, and are those risks being actively monitored? These four questions are not yet universal across Caribbean audit engagements. They will be by the end of 2026.

The implication for the board chair is that AI governance is no longer a question of internal hygiene; it is becoming a question of external evidence. A board that has done the decision-rights work, that has integrated AI risks into the enterprise register, that has commissioned a quarterly AI controls report through the audit committee, will be able to evidence its AI governance to its auditor as a matter of course. A board that has not done this work will, beginning some time in the next audit cycle, find itself answering questions for which the answers do not yet exist. The work to prevent that situation is not heavy. It is the work this article has described.

A specific note for institutions whose external auditor is not a Big Four firm — which is the majority of Caribbean institutions of scale. The trajectory of audit AI-governance review is the same regardless of audit firm tier; the difference is timing. The Big Four are deploying AI governance review now; the larger second-tier and regional firms are following on a roughly twelve-to-eighteen-month lag. This is not a respite. It is a window. Institutions that use the next twelve months to put their AI governance house in order will be ready when their own auditor begins the review; institutions that do not will discover the gap when the question is asked rather than before. The board chair who understands this distinction has, in 2026, a specific strategic advantage available to her: time to do the work properly rather than reactively.

| WHAT WE OBSERVE ACROSS RECENT AUDIT GOVERNANCE REVIEWS

Of the audit governance reviews Dawgen Global has conducted in the past nine months for Caribbean institutions of scale, every single one identified at least one AI governance gap that the institution had not previously flagged itself. The pattern is consistent: the chief executive has commissioned AI work; some of it has reached production; the board has approved an AI strategy at a high level; the decision-rights mapping below the strategy has not been done; the risks have not been added to the enterprise register; the audit committee has not received a separate AI controls report. Each of these gaps is closeable with one or two board cycles of focused attention. None requires new technology. All require the board chair to put AI governance on the agenda and keep it there until the disaggregation is complete. |

What the board chair should do this year

The article has now covered enough ground to be specific about what a Caribbean board chair should commit to in the next ninety days, and what the board should be in a position to evidence by the end of the year. As in Article 8, we are deliberate about the sequencing; doing these in the wrong order produces the appearance of governance without its substance.

First, the chair should commission, jointly with the chief executive and the company secretary, a one-page application of the D-AGENTICA™ AI Governance Decision-Rights Matrix to the institution’s specific circumstances. The matrix should be filled in honestly; the rows that surface ambiguity should be flagged for board discussion rather than papered over. The completed matrix becomes the institution’s AI governance reference document, and is appended to the board’s terms of reference.

Second, the chair should put on the next board agenda a single specific item: disaggregation of the AI strategy approval. The intent is not to revoke any previously granted approval but to specify, decision by decision, what was actually approved and what remains an open management matter requiring further board, risk committee, or audit committee action.

Third, the chair should request, through the audit committee, that AI risks be integrated into the enterprise risk register at the next quarterly risk review, with explicit AI-attribution tags applied to the relevant existing categories. The chair should not allow the creation of a separate AI risk silo.

Fourth, the chair should request, through the audit committee, a quarterly AI controls report from management — distinct from the general controls report, covering at minimum the AI use cases in production, the controls in place, the exceptions identified, and the actions taken. This becomes a standing item in the audit committee cycle.

By the end of 2026, a board chair who has commissioned this work will be able to say, of her institution: we have a documented decision-rights mapping for AI; we have disaggregated our prior strategy approval into its constituent decisions; we have integrated AI risks into the enterprise register without creating a silo; we have a quarterly audit committee report on AI controls. That is a different governance position from the one most Caribbean boards occupy as of writing. It is achievable in two board cycles. It does not require new technology, new committees, or new advisers. It requires disaggregation and attention, which are within every board chair’s gift.

Closing reflection — and what comes next

Article 9 has opened Act IV by anchoring AI governance not in principles but in decision rights. The premise is simple and we hope provocative: most Caribbean boards are not failing at AI governance because they are indifferent to it; they are failing because they are governing the wrong things at the wrong level. The Decision-Rights Matrix is the instrument that makes the right level visible. The board chair is the person who can make it operational.

Article 10 turns from the structural question of governance to the contextual question of sector. The AI adoption profile of a Caribbean tourism group is not the AI adoption profile of a Caribbean manufacturer; the AI adoption profile of a Caribbean public-sector entity is different again. We will offer three sector spotlights — short, specific, opinionated — that make the contextual differences visible. Article 11 will then unveil the comprehensive D-AGENTICA™ Maturity Model, across all domains, with the Finance Function model from Article 8 as its first domain instance. Article 12 closes the series with the call to the Caribbean boardroom that we have been building toward across all twelve. We are now, in other words, three articles from the close of the programme. The work after that will be yours.

| FOR THE BOARD AGENDA

This article has specified, through the eleven decisions, the named matrix, the two structural questions, and the four audit-review questions, what Caribbean boards should expect of themselves on AI governance this year. A board chair, lead independent director, or senior committee chair reading this article has earned the right to ask their leadership team one specific question and to propose one specific decision that will materially improve the position over the next ninety days.

THE QUESTION

Within ninety days, can the company secretary, working with the chief executive and the chief risk officer, present to the board a completed D-AGENTICA™ AI Governance Decision-Rights Matrix specific to this institution, together with a disaggregated record of which AI decisions the board has previously made, which remain open at management level, and which require fresh board, risk committee, or audit committee action?

THE DECISION

That, by the end of the next quarter, the board’s terms of reference will be amended to incorporate the AI Governance Decision-Rights Matrix as an appendix; that AI risks will be integrated into the enterprise risk register with explicit attribution tags rather than treated as a separate category; and that the audit committee will begin receiving a standing quarterly AI controls report distinct from the general controls report. |

ABOUT THE AUTHOR

Dr. Dawkins Brown is the Executive Chairman and Founder of Dawgen Global. He holds a PhD and the MCMI and ACFE designations, with twenty-three-plus years of professional experience including a prior career at Ernst & Young before founding Dawgen Global. He writes the LinkedIn newsletter Caribbean Boardroom Perspectives and serves as Executive Chairman of Business Access Television.
