| IN THIS ARTICLE Reading time: 24 minutes

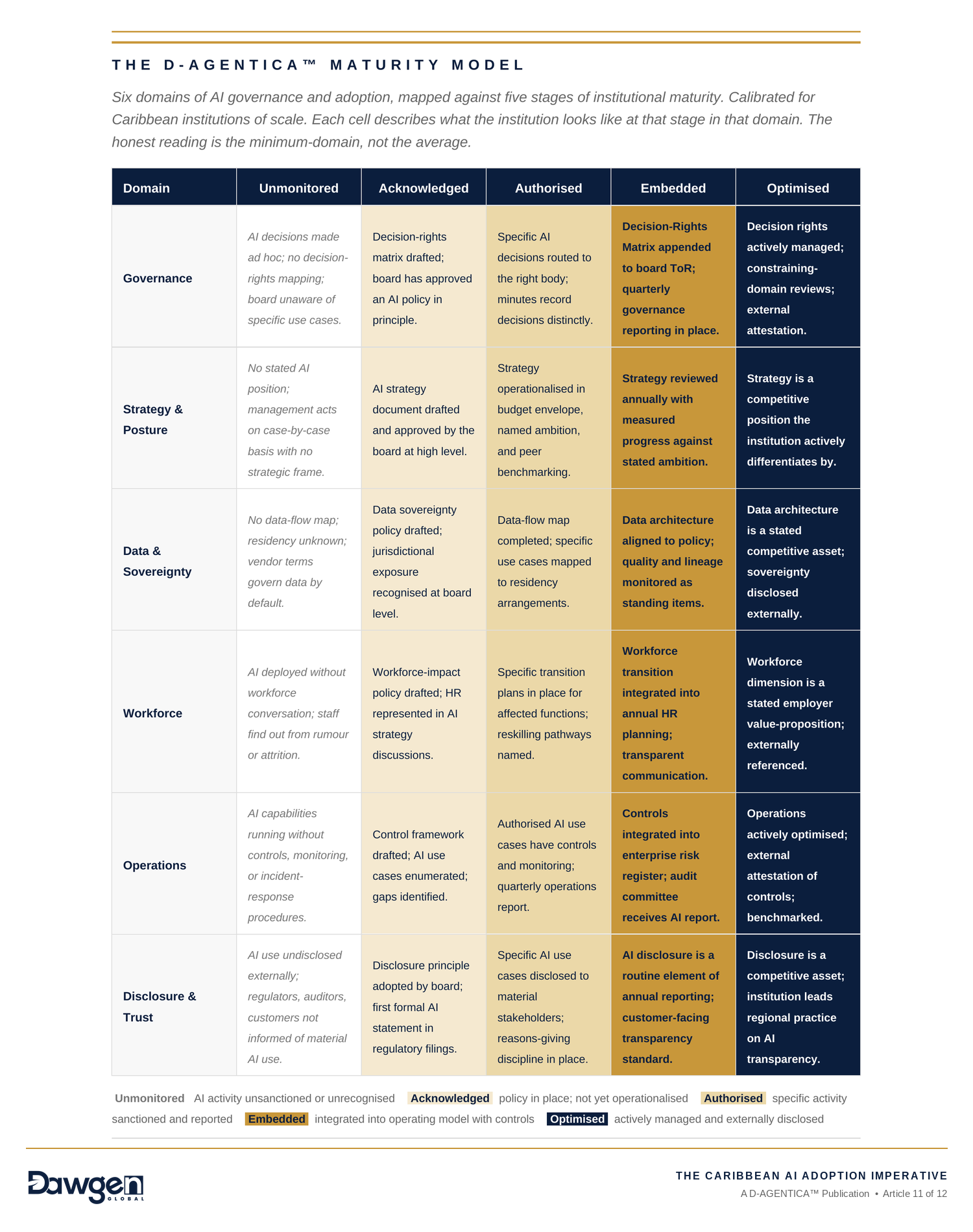

The eleventh article of twelve, and the third article of Act IV, The Decision. Articles 1 through 10 have, deliberately, been building the components of an instrument: the governance frame, the data sovereignty discipline, the workforce transition map, the finance function ladder, the decision-rights matrix, the sectoral profile. Each was useful in itself; each was also an element of something larger. Article 11 is where the elements are assembled. The comprehensive D-AGENTICA™ Maturity Model is introduced here (six domains, five stages, thirty cells of substantive content) as a self-assessment instrument any Caribbean board of scale can apply to its own institution. The article is longer than the others by a small margin because the instrument warrants it.

By the end of this article you will be able to:

1. Recognise the six domains across which AI maturity properly varies (Governance, Strategy & Posture, Data & Sovereignty, Workforce, Operations, and Disclosure & Trust) and the substantive content of each that distinguishes it from the others.
2. Apply the D-AGENTICA™ Maturity Model as the named instrument, locating your institution at one of five stages (Unmonitored, Acknowledged, Authorised, Embedded, or Optimised) across each of the six domains, producing a six-point profile that surfaces both strengths and gaps.
3. Read the resulting profile honestly: a board should expect uneven maturity across domains, accept that the lowest-domain score is the constraining factor on overall AI governance quality, and recognise that the work this year is to advance the lowest one or two domains rather than to advance every domain incrementally. |

What the series has been building toward

Ten articles have come before this one. They have, deliberately, been building the components of an instrument. The reader who has been with us throughout the series will have noticed: each named tool was useful for the article in which it appeared, and also, separately, was a fragment of something larger. The Three-Question Board Diagnostic from Article 1, the Agentic Vendor Assessment from Article 2, the SME AI Sequencing Framework from Article 3, the Data Sovereignty Decision Matrix from Article 4, the Caribbean Regulatory Readiness Self-Assessment from Article 5, the Financial Services AI Use-Case Map from Article 6, the Caribbean Workforce Transition Map from Article 7, the Finance Function AI Maturity Model from Article 8, the AI Governance Decision-Rights Matrix from Article 9, and the Sector AI Adoption Profile from Article 10 — ten instruments across ten articles, each addressing a discrete decision context, each carrying its own analytical weight. Article 8 named explicitly what the series has been building toward: a comprehensive Maturity Model of which the Finance Function model was the first domain instance. Article 11, this article, is where that comprehensive instrument is unveiled.

We have held the comprehensive model back to its proper place in the series architecture for two reasons. First, because a Maturity Model presented before the substantive content that fills its cells reads as a diagram rather than as a tool. The Article 9 matrix could only carry the weight it carried because the preceding eight articles had given the reader the substantive vocabulary the matrix’s cells expected; the same logic applies, more strongly, to a thirty-cell comprehensive model. Second, because the discipline of building an instrument out of substantive content rather than diagramming it from the start produces an instrument that is load-bearing — that can withstand the weight of the assessment it asks Caribbean boards to make. A diagram drawn first and filled later does not bear that weight; it collapses under the first hard question.

Where we are in the series

Acts I through III gave us orientation, guardrails, and application. Act IV, The Decision, comprises four articles. Article 9 set out the governance frame in the abstract through the Decision-Rights Matrix. Article 10 contextualised the frame against three Caribbean sectors through the Sector AI Adoption Profile. Article 11, this one, unveils the comprehensive Maturity Model. Article 12 will close the series with the call to the Caribbean boardroom that the entire programme has been building toward. We are, in other words, one article from the close. Article 11 is therefore the last article of substantive instrumentation; Article 12 will not introduce new named instruments but will consolidate, reflect, and call to action.

Six domains of AI maturity

Maturity in AI governance and adoption is not a single dimension. An institution can be sophisticated in one respect and unmonitored in another, and the gap between the two is the substantive subject of governance. The comprehensive D-AGENTICA™ Maturity Model identifies six domains across which AI maturity properly varies. The domains are not arbitrary; they correspond to the substantive areas the series has worked through across its first ten articles, and they are the surfaces on which AI governance work concretely lands. Each domain is briefly described below; the populated maturity model that follows specifies the five stages within each.

Governance

The body of decision rights and oversight arrangements that determine who, above management, must concur on each AI-related decision. This domain synthesises Article 9’s eleven decisions and the Decision-Rights Matrix; the maturity question is not whether the institution has any AI governance but whether the governance is operationalised at the level of specific decisions, with documented decision rights and an audit trail.

Strategy and posture

The institution’s stated and effective position on AI relative to peers, competitors, and the regulatory landscape — the substantive answer to the first decision in Article 9’s matrix. Maturity here is not measured by ambition; it is measured by clarity. An institution can have a deliberately conservative posture and be mature; an institution with a stated ambitious posture but no operational follow-through is not.

The most common failure mode in this domain is aspirational drift. The institution adopts a stated AI strategy that is more ambitious than its operational capacity to deliver, then quietly under-invests in the strategy’s infrastructure while continuing to communicate the original ambition externally. The gap between stated ambition and operational reality grows over time; eventually the institution finds itself either visibly behind its peers or revising its public communication downward, and either resolution carries a credibility cost that could have been avoided by a more honest initial calibration. The mature institution states what it can deliver and delivers it, regardless of whether that posture is conservative or ambitious.

Data and sovereignty

The data residency, quality, lineage, and architectural arrangements that support — or fail to support — AI deployment. This domain synthesises Article 4’s data sovereignty work and Article 10’s data-flow-map recommendation. Maturity here is the gap between what management believes about its data architecture and what is actually true; in our engagement experience, this is the single domain where the gap is widest in Caribbean institutions.

The most common failure mode in this domain is vendor-disclosure assumption — the assumption that because the institution’s AI capability comes through an international vendor, the vendor is responsible for the data’s jurisdictional treatment. This assumption is almost always wrong. Vendor terms of service typically assign data-protection responsibility back to the institution that bought the service, even where the vendor’s own operations are the proximate cause of the data crossing a jurisdictional boundary. The mature institution maintains its own documented data-flow map regardless of vendor representations, and treats the vendor’s terms of service as a starting point for negotiation rather than a conclusion. The gap between Acknowledged and Authorised in this domain is, more than in any other, a question of whether the institution has done its own work or delegated the work to a vendor that does not, in fact, accept it.

Workforce

The transition discipline by which the institution addresses the implications of AI for its people — redundancy avoidance, redeployment, capability building, transition support. This domain synthesises Article 7’s Workforce Transition Map. Maturity here is the difference between an institution that is having the workforce conversation candidly with its people and one that is allowing AI to happen to its people.

Operations

How AI is actually run inside the institution: the controls, the monitoring, the incident response, the auditability of specific use cases. This domain synthesises the controls discipline central to Article 8’s Finance Function model and Article 9’s audit-review section. Maturity here is the most technical of the six and the easiest to misjudge from the boardroom; an institution can have a sophisticated AI capability and immature operations around it.

Disclosure and trust

The institution’s communication of its AI use to its regulators, its auditors, its customers, and its public — and the legitimacy this communication earns or fails to earn. This domain synthesises Article 9’s disclosure decision and Article 10’s public-legitimacy-stake dimension. Maturity here is rising fastest of the six in 2026 and is, for many Caribbean institutions, the domain in which the next twelve months will produce the most visible movement either way.

Five stages of AI maturity

Within each domain, the model uses the five-stage ladder we established in Article 8’s Finance Function instance: Unmonitored, Acknowledged, Authorised, Embedded, Optimised. The stages are calibrated separately for each domain — what Authorised means in the Governance domain is not what Authorised means in the Operations domain — but the underlying progression is the same. The ladder is deliberate about its starting point: Unmonitored is not a euphemism for not yet started. It is an active state, in which AI activity exists but the institution either does not know about it or has chosen not to govern it. Many Caribbean institutions of scale will discover, on honest application of the model, that they are at Unmonitored in domains they had assumed were further along.

Acknowledged is the stage at which the institution has recognised the domain as a governance subject and has put a policy or position in place, even if the policy is not yet operationalised. Authorised is the stage at which specific activity is sanctioned, measured against the policy, and reported. Embedded is the stage at which the activity is integrated into the institution’s operating model with controls and a regular reporting cadence. Optimised is the stage at which the institution actively manages the domain to improve outcomes and discloses its arrangements externally as a matter of course. We do not present the ladder as a forced sequence — an institution can be at Embedded in Operations and Acknowledged in Workforce, and that combination is governable; but moving from Unmonitored to Acknowledged in any domain requires a deliberate institutional act, and Acknowledged without subsequent advancement becomes a governance failure of its own kind, where policy exists without practice.
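For readers who keep the profile in a spreadsheet or a small script, the ladder and the six domains can be written down as a simple data structure. The Python below is a minimal sketch under our own assumptions, not part of the instrument: the names `Stage`, `DOMAINS`, and `is_complete_profile` are hypothetical, and the integers record only the order of the stages, never a score.

```python
from enum import IntEnum


class Stage(IntEnum):
    """The five stages in ladder order. The numbers encode order only."""
    UNMONITORED = 1
    ACKNOWLEDGED = 2
    AUTHORISED = 3
    EMBEDDED = 4
    OPTIMISED = 5


# The six domains named in this article.
DOMAINS = (
    "Governance",
    "Strategy and posture",
    "Data and sovereignty",
    "Workforce",
    "Operations",
    "Disclosure and trust",
)


def is_complete_profile(profile):
    """Return True when the profile rates each of the six domains with a Stage."""
    return set(profile) == set(DOMAINS) and all(
        isinstance(stage, Stage) for stage in profile.values()
    )


# The governable combination described above: Embedded in Operations,
# Acknowledged in Workforce. Four domains are still unrated here.
partial = {"Operations": Stage.EMBEDDED, "Workforce": Stage.ACKNOWLEDGED}

print(partial["Operations"] > partial["Workforce"])  # stages compare by ladder order
print(is_complete_profile(partial))                  # a partial rating is not a profile
```

The sketch separates rating a domain from completing a profile: an institution can compare two stages at any time, but the assessment is only finished when all six domains carry a stage.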

The D-AGENTICA™ Maturity Model

The named instrument of this article, and of the series, follows. The model is a six-domain by five-stage matrix; each cell records, in a phrase, what the institution looks like at that stage in that domain. The phrases are calibrated for Caribbean institutions of scale and reflect engagement patterns we have observed across our advisory practice. An institution applying the model honestly will find itself at different stages in different domains, and that variance is itself the substance of the assessment. A flat profile across all six domains is unusual and, when it does occur, is almost always a sign that the assessment has been made too generously rather than that maturity is actually uniform.

Three observations about reading the populated model. First, the model is deliberately written in short phrases per cell — a phrase rather than a paragraph — because the substantive content of each cell is meant to be recognisable rather than read in detail. A board chair who reads the Governance row should be able to identify her institution’s stage in seconds, not minutes. Second, the Optimised column is calibrated for what is achievable in 2026 by a Caribbean institution of scale, not for some international best-practice standard. Optimised in Caribbean public-sector AI is not the same as Optimised in a US-headquartered global bank, and the model is not pretending otherwise. Third, the populated phrases are intended as prompts for the institution’s own honest characterisation; an institution applying the model should expect to write its own one-sentence characterisation of its stage in each domain rather than simply pointing to the cell text.

| WHAT WE OBSERVE WHEN CARIBBEAN BOARDS APPLY THE MODEL HONESTLY

Across the engagements in which Dawgen Global has supported a Caribbean board in applying an early version of this model, three patterns recur. The first is that the *Operations* domain is almost always rated more generously than it should be; boards see the AI capability rather than the controls around it. The second is that the *Disclosure and trust* domain is almost always rated less generously than it should be; boards see the absence of formal AI disclosure as a deficiency without recognising that the absence is itself common across the sector and not, by itself, a maturity gap. The third is that the *Workforce* domain is consistently the domain in which the gap between the rating the management team gives and the rating the board would give if asked separately is widest. The pattern across all three is that the honest application of the model surfaces gaps the institution had not internally surfaced before, which is the single most useful thing the model can do. |

How to apply the model — the constraining-domain principle

The temptation, on receiving an instrument like this one, is to commission a comprehensive assessment that produces a stage rating in every domain, average the ratings, and treat the average as the institution’s AI maturity score. We advise against this approach. The right reading of a six-domain profile is not the average; it is the minimum. The lowest-rated domain is the constraining factor on the institution’s overall AI governance quality, because the institutional consequences of an AI failure flow from the weakest domain regardless of how strong the others are. An institution that is Embedded in Governance, Strategy, Data, Operations, and Disclosure but Unmonitored in Workforce will, when the workforce question becomes a public issue, find that its strength in the other five domains does not protect it. The damage also runs backwards: a single weak domain can make the work done in all the other domains look, in retrospect, like governance theatre.

The implication for the board chair is that the right work to commission, after applying the model, is not a comprehensive multi-domain advancement programme. It is a focused programme to advance the lowest one or two domains by one stage. Moving Workforce from Unmonitored to Acknowledged is more institutionally valuable than moving Governance from Embedded to Optimised. This is the constraining-domain principle, and it is the single most important reading discipline this article asks of its readers.
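The difference between the two readings can be made concrete with the example profile given above: Embedded in five domains and Unmonitored in Workforce. The Python below is a minimal sketch, assuming the stages are numbered one to five purely to record their order; the function name `constraining_domains` is our own illustration, and the average is computed only to show what it hides.

```python
from enum import IntEnum
from statistics import mean


class Stage(IntEnum):
    """The five stages in ladder order. The numbers encode order only."""
    UNMONITORED = 1
    ACKNOWLEDGED = 2
    AUTHORISED = 3
    EMBEDDED = 4
    OPTIMISED = 5


def constraining_domains(profile):
    """Return the lowest stage in the profile and every domain sitting at it."""
    lowest = min(profile.values())
    return lowest, [domain for domain, stage in profile.items() if stage == lowest]


# The example profile from the text above.
profile = {
    "Governance": Stage.EMBEDDED,
    "Strategy and posture": Stage.EMBEDDED,
    "Data and sovereignty": Stage.EMBEDDED,
    "Workforce": Stage.UNMONITORED,
    "Operations": Stage.EMBEDDED,
    "Disclosure and trust": Stage.EMBEDDED,
}

# The averaged reading lands between Authorised and Embedded and says nothing
# about where the weakness sits.
average = mean(int(stage) for stage in profile.values())

# The constraining reading names the stage and the domain that limit the rest.
lowest, domains = constraining_domains(profile)

print(f"average reading: {average}")
print(f"constraining reading: {lowest.name} in {domains}")
```

The function returns a list because more than one domain can share the lowest stage, which is the case in which advancing the lowest two domains together is the natural programme.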

There is one circumstance in which the constraining-domain principle does not apply, and it is worth flagging. Where an institution is regulator-mandated to be at a specific stage in a specific domain — for example, a regulated financial institution facing supervisory expectations on AI controls in Operations, or a public-sector entity facing a legal duty in Disclosure and trust — the regulator’s required stage is the floor for that domain regardless of the constraining-domain reading. The right approach is to satisfy the mandated floor first and then return to the constraining-domain principle for non-mandated work. This is rarely a difficult prioritisation in practice; what matters is recognising that regulatory floors and internal constraining domains are different categories and should not be conflated.
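The ordering described here, mandated floors first and the constraining-domain reading second, can be sketched as a two-step check. The Python below is illustrative only: the profile, the floor on Operations, and the function name `next_priority` are hypothetical, and no actual supervisory requirement is implied.

```python
from enum import IntEnum


class Stage(IntEnum):
    """The five stages in ladder order. The numbers encode order only."""
    UNMONITORED = 1
    ACKNOWLEDGED = 2
    AUTHORISED = 3
    EMBEDDED = 4
    OPTIMISED = 5


def next_priority(profile, mandated_floors):
    """Return the kind of work that comes first and the domains it covers.

    Any domain below its regulator-mandated floor takes precedence; only when
    every floor is met does the constraining-domain reading apply.
    """
    shortfalls = [
        domain for domain, floor in mandated_floors.items() if profile[domain] < floor
    ]
    if shortfalls:
        return "mandated floor", shortfalls
    lowest = min(profile.values())
    return "constraining domain", [d for d, s in profile.items() if s == lowest]


# Hypothetical regulated institution: the supervisor expects Authorised in Operations.
profile = {
    "Governance": Stage.ACKNOWLEDGED,
    "Strategy and posture": Stage.ACKNOWLEDGED,
    "Data and sovereignty": Stage.ACKNOWLEDGED,
    "Workforce": Stage.UNMONITORED,
    "Operations": Stage.ACKNOWLEDGED,
    "Disclosure and trust": Stage.ACKNOWLEDGED,
}
floors = {"Operations": Stage.AUTHORISED}

before = next_priority(profile, floors)

# Once the floor is met, the principle resumes and points at Workforce.
after = next_priority({**profile, "Operations": Stage.AUTHORISED}, floors)

print(before)
print(after)
```

Keeping the two checks in separate branches mirrors the point made above: a regulatory floor and an internal constraining domain are different categories, even when they happen to name the same domain.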

How the Maturity Model relates to the prior ten instruments

The comprehensive Maturity Model is not a replacement for the ten instruments that preceded it; it is an index against them. Each domain in the model points back to the substantive content of one or more prior articles, and the right way to use the model is in conjunction with those prior tools rather than instead of them. A board that has applied the model and located itself at Acknowledged in Governance should return to Article 9’s Decision-Rights Matrix to specify which of the eleven decisions are at what stage of operationalisation. A board that is Authorised in Data and Sovereignty should return to Article 4’s Data Sovereignty Decision Matrix to audit specific data flows. A board that is Embedded in Operations should return to Article 8’s Finance Function Maturity Model to apply the same five-stage ladder at the level of specific AI categories within that domain.
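The index relationship can be written down as a simple lookup from each domain to the prior articles it synthesises, using only the cross-references stated in the domain descriptions earlier in this article. The Python below is a minimal sketch; the structure and the name `follow_up` are our own assumptions rather than part of the instrument.

```python
# Each domain maps to (article number, what to return to), as stated in the
# domain descriptions earlier in this article.
DOMAIN_INDEX = {
    "Governance": [
        (9, "AI Governance Decision-Rights Matrix and its eleven decisions"),
    ],
    "Strategy and posture": [
        (9, "first decision in the Decision-Rights Matrix"),
    ],
    "Data and sovereignty": [
        (4, "Data Sovereignty Decision Matrix"),
        (10, "data-flow-map recommendation"),
    ],
    "Workforce": [
        (7, "Caribbean Workforce Transition Map"),
    ],
    "Operations": [
        (8, "Finance Function AI Maturity Model"),
        (9, "audit-review section"),
    ],
    "Disclosure and trust": [
        (9, "disclosure decision"),
        (10, "public-legitimacy-stake dimension"),
    ],
}


def follow_up(domain):
    """Return readable pointers to the prior material behind one domain."""
    return [f"Article {number}: {item}" for number, item in DOMAIN_INDEX[domain]]


for line in follow_up("Data and sovereignty"):
    print(line)
```

A board that has located its constraining domain can use a lookup of this kind to decide which earlier instrument to reopen first.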

The Maturity Model, in other words, is the instrument by which a Caribbean board navigates the rest of the series. The series has, with this article, completed the substantive instrumentation it set out to provide. Article 12, the closing article, will not introduce further instruments; it will offer the call to the Caribbean boardroom that ties the eleven instruments together into a coherent programme of work.

On the honest limits of the model

An instrument of this scope warrants a section addressing what it does not do. We are deliberate about this; a Maturity Model presented without its limitations becomes a fetish object, treated as more authoritative than any general-purpose framework can honestly be. Three limits are worth stating openly.

First, the model does not produce a score. It produces a profile — a stage rating in each of six domains — and the profile is the instrument’s output. The temptation to convert the profile into a single number, by averaging or by weighting, should be resisted. A weighted average across six domains loses the constraining-domain information that is the substantive value of the assessment. Boards that have used the model successfully in our engagement experience have resisted the natural pressure to present a single AI-maturity score to the board pack; boards that have produced a single score have, in every case we have observed, regretted it within two or three reporting cycles, because the score either flatters the institution in a way that delays necessary work or maligns it in a way that demoralises the team. The profile is the right output.

Second, the model is calibrated for Caribbean institutions of scale, and it is not equally applicable everywhere within that population. A small Caribbean SME (fewer than fifty employees, single-territory, owner-operated) does not need a six-domain assessment; the SME AI Sequencing Framework from Article 3 is the right tool. A very large Caribbean conglomerate or a regional bank with substantial extra-regional operations may find the model underspecified in its Optimised column, where the descriptions are calibrated for the largest credible Caribbean institution rather than for global best practice. The model is designed for the middle of the Caribbean institutional landscape, meaning institutions of meaningful scale that operate primarily within Caribbean territories, and serves that population well. Outside that population, it is a starting point that warrants adjustment rather than a final calibration.

Third, the model assesses current state rather than trajectory. An institution at Acknowledged in Workforce that is actively moving toward Authorised through a programme of work is in a meaningfully better position than an institution at Acknowledged in Workforce that has been stationary at that stage for two years, but the model does not surface the difference. The right complement to the Maturity Model assessment is therefore a trajectory annotation — a one-sentence note per domain recording whether the institution is advancing, stationary, or sliding back. Boards that have applied the model with a trajectory annotation alongside have, in our experience, found the resulting profile materially more useful than the static stage rating alone. The annotation is not part of the instrument as designed but is a discipline we recommend institutions add when they apply it.
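A trajectory annotation of this kind is easy to carry alongside the stage rating. The Python below is a minimal sketch of one way to record it, using the two Workforce cases described above; the class and field names are hypothetical and the annotation remains, as noted, outside the instrument as designed.

```python
from dataclasses import dataclass
from enum import Enum, IntEnum


class Stage(IntEnum):
    """The five stages in ladder order. The numbers encode order only."""
    UNMONITORED = 1
    ACKNOWLEDGED = 2
    AUTHORISED = 3
    EMBEDDED = 4
    OPTIMISED = 5


class Trajectory(Enum):
    ADVANCING = "advancing"
    STATIONARY = "stationary"
    SLIDING_BACK = "sliding back"


@dataclass(frozen=True)
class DomainRating:
    """One row of the profile: the stage plus a one-sentence trajectory note."""
    domain: str
    stage: Stage
    trajectory: Trajectory
    note: str


# Two institutions with the same static rating and different positions.
moving = DomainRating(
    "Workforce", Stage.ACKNOWLEDGED, Trajectory.ADVANCING,
    "Programme of work under way toward Authorised.",
)
stalled = DomainRating(
    "Workforce", Stage.ACKNOWLEDGED, Trajectory.STATIONARY,
    "No movement at this stage for two years.",
)

print(moving.stage == stalled.stage)            # the stage rating cannot tell them apart
print(moving.trajectory == stalled.trajectory)  # the annotation can
```

Keeping the trajectory as a separate field, rather than folding it into the stage, preserves the stage rating exactly as the model defines it.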

| THE MOST COMMON HONEST RESULT

When a Caribbean board of scale applies the comprehensive Maturity Model honestly for the first time, the modal result we have observed is the following: *Acknowledged* in Governance, *Acknowledged* in Strategy and Posture, *Unmonitored* in Data and Sovereignty, *Unmonitored* in Workforce, *Authorised* in Operations, *Acknowledged* in Disclosure and Trust. The constraining domains are Data and Workforce, both at *Unmonitored*. The operational domain is the most advanced, reflecting where management has been most willing to invest. The disclosure domain is at policy but not at practice. This profile is not a failing profile; it is the typical profile of a Caribbean institution that has been thinking seriously about AI for twelve to eighteen months. The work that follows is the constraining-domain work — Data and Workforce — by the principle established above. |

What the board chair should do this year

The article has now covered ground enough to be specific about what a Caribbean board chair should commit to in the next ninety days as the operationalisation of this article. Three steps.

First, the chair should commission, jointly with the chief executive and the chief risk officer, an honest application of the Maturity Model to the institution. This is a one-day exercise, not a multi-week consulting engagement; the model is designed for self-assessment and the right people to apply it are the institution’s own senior leadership team in a single deliberate sitting. The output is a six-row profile with a one-sentence characterisation of the institution’s stage in each domain. The profile becomes an appendix to the board’s terms of reference and is reviewed annually.

Second, the chair should identify the constraining domain — the lowest-rated domain in the profile — and commission a programme of work to advance that domain by one stage over the next twelve months. The programme should be specific: defined deliverables, named owner, quarterly reporting to the board. The chair should resist the natural temptation to commission advancement programmes in every domain at once; the constraining-domain principle does the prioritisation work.

Third, the chair should integrate the Maturity Model into the institution’s annual governance cycle alongside the existing risk register, audit committee report, and strategic plan. The model is a standing instrument, not a one-time exercise; the right cadence is annual reapplication with interim reporting on constraining-domain advancement. This integration ensures that AI maturity becomes a tracked institutional metric rather than a one-off conversation that fades.

By the end of 2026, a Caribbean board chair who has commissioned this work will be able to say of her institution: we have a documented six-domain Maturity Model profile; we have identified our constraining domain; we have a specific programme of work to advance that domain by one stage; and the Maturity Model is now a standing item in our annual governance cycle. That is a meaningfully different governance position from the one most Caribbean boards occupy as of writing. It is achievable in two board cycles. It does not require external advisers, new technology, or additional committees. It requires the board’s deliberate attention.

Closing reflection — and what comes next

Eleven instruments have now been named across eleven articles. The comprehensive Maturity Model is the eleventh and final, and it is the instrument by which the prior ten are navigated. The series has, with this article, completed the substantive instrumentation it set out to provide. Whether a Caribbean board uses one of these eleven instruments, several, or all eleven, depends on the institution’s specific circumstances. The instruments are tools; the question they ultimately exist to answer is the one Article 12 will pose.

Article 12 is the close of the series. It will not introduce a twelfth instrument. It will instead offer the call to the Caribbean boardroom that the eleven instruments collectively support. The call is not a conclusion; it is a beginning. The work the series has equipped its readers to do is ahead of them, not behind them. We hope you will be with us for the close.

| FOR THE BOARD AGENDA

This article has unveiled the comprehensive D-AGENTICA™ Maturity Model, the structural instrument toward which the entire series has been building, and has set out the constraining-domain principle as the right reading discipline. A board chair, lead independent director, or risk committee chair reading this article has earned the right to ask their leadership team one specific question and to propose one specific decision that will materially improve the institution’s AI maturity over the next twelve months.

THE QUESTION

Within ninety days, can the company secretary, working with the chief executive, the chief risk officer, and the heads of the relevant functions, facilitate a one-day senior-leadership sitting at which the institution applies the D-AGENTICA™ Maturity Model honestly to itself, producing a six-domain profile, identifying the constraining domain, and drafting a twelve-month programme of work to advance that domain by one stage?

THE DECISION

That, by the end of the next quarter, the institution’s six-domain Maturity Model profile will be appended to the board’s terms of reference alongside the AI Governance Decision-Rights Matrix and the Sector AI Adoption Profile; that the constraining-domain advancement programme will be a quarterly board-reporting item; and that the Maturity Model will be reapplied annually as a standing item in the governance cycle. |

ABOUT THE AUTHOR

Dr. Dawkins Brown is the Executive Chairman and Founder of Dawgen Global. He holds a PhD and the MCMI and ACFE designations, with twenty-three-plus years of professional experience including a prior career at Ernst & Young before founding Dawgen Global. He writes the LinkedIn newsletter Caribbean Boardroom Perspectives and serves as Executive Chairman of Business Access Television.

About Dawgen Global

Embrace BIG FIRM capabilities without the big firm price at Dawgen Global, your committed partner in carving a pathway to continual progress in the vibrant Caribbean region. Our integrated, multidisciplinary approach is finely tuned to address the unique intricacies and lucrative prospects that the region has to offer. Offering a rich array of services, including audit, accounting, tax, IT, HR, risk management, and more, we facilitate smarter and more effective decisions that set the stage for unprecedented triumphs. Let’s collaborate and craft a future where every decision is a steppingstone to greater success. Reach out to explore a partnership that promises not just growth but a future beaming with opportunities and achievements.

Join hands with Dawgen Global. Together, let’s venture into a future brimming with opportunities and achievements.