Dawgen Decodes: The AI Governance Playbook

AI adoption across the Caribbean is accelerating—but the risk profile is accelerating faster. Many organisations are deploying AI through ad-hoc pilots, vendor demos, or isolated automation initiatives, without formal accountability, controls, documentation, or monitoring. That gap creates operational risk (bad decisions), regulatory exposure (data/privacy and governance expectations), reputational damage (unfair outcomes or security incidents), and financial loss (mispricing, churn, fraud leakage, and wasted investments).

The hard truth is this: pilot success does not equal enterprise readiness.

A chatbot that performs well for a month is not governance. A credit model that improves approvals is not assurance. A vendor’s presentation is not evidence.

This article provides a practical playbook for operationalising the Dawgen TRUST™ Framework—so Caribbean organisations can move from experimentation to scalable, auditable, and trustworthy AI.

You will learn how to:

- build an AI register (what AI exists and where it is used),
- tier AI use cases by impact,
- assign ownership and decision rights,
- embed controls for fairness, security, and compliance,
- build audit-ready evidence packs,
- implement monitoring and change management,
- govern third-party AI vendors effectively.

The end goal is simple: AI that creates value without creating uncontrolled exposure.

1) Why “Pilot AI” Becomes “Enterprise Risk”

AI pilots are usually launched with good intent: improve customer experience, automate reporting, reduce fraud, speed up hiring, or enhance productivity. But pilots often fail to mature into governed systems because:

- AI projects live inside IT or innovation teams, not operating functions.
- There is no formal business owner accountable for outcomes and harm.
- Data and privacy assessments are treated as paperwork, not design inputs.
- Vendors are trusted without evidence.
- Go-live is planned, but monitoring is never built.

In many cases, the organisation thinks it is piloting “a tool.” In reality, it is piloting a decision system.

That distinction matters.

Decision systems require governance because they create real-world impacts:

- a customer is denied credit,
- a claim is delayed,
- a job applicant is filtered out,
- a suspicious transaction is blocked,
- a customer is incorrectly served or profiled.

When AI impacts people or money, the organisation must be able to prove:

- why the system was trusted,
- how it was tested,
- how it is monitored,
- how it can be overridden,
- how decisions are explained,
- who is accountable.

That proof is governance.

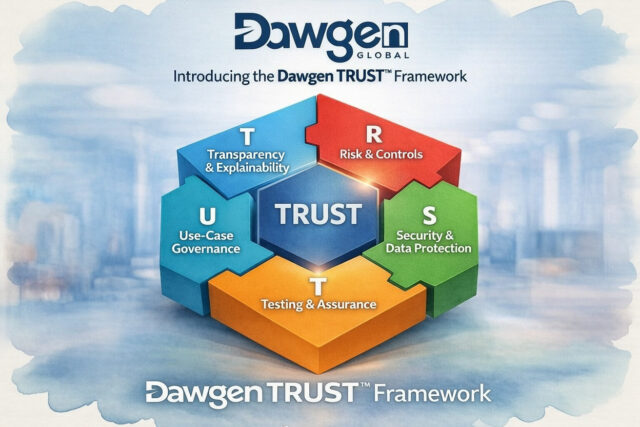

2) The Dawgen TRUST™ Framework as an Operating Model

Many organisations treat governance as a policy document. Dawgen Global treats it as an operating model.

Governed AI requires repeatable routines:

- approvals,
- controls,
- monitoring,
- evidence generation,
- escalation and incident response,
- periodic assurance.

This is why Dawgen TRUST™ is designed not as an academic model, but as a practical system:

T — Transparency & Explainability

R — Risk & Controls

U — Use-Case Governance

S — Security & Data Protection

T — Testing & Assurance

Article 1 introduced the framework. This article, the second in the series, shows how to deploy it.

3) Step One: Build an AI Register (What AI Exists?)

Most organisations cannot govern what they cannot see.

Your first move is to create a simple but structured AI Use-Case Register. It should include:

- AI tool/system name (including “AI features” in existing platforms)
- Business function (HR, Finance, Operations, Risk, CX, Marketing)
- Use case description
- Business owner (who benefits and is accountable)
- Technical owner (IT / data / vendor manager)
- Data involved (personal data? financial? sensitive?)
- Decision impact (does it affect customers, employees, compliance?)
- Vendor dependency (yes/no; which vendor)
- Deployment status (pilot / live / paused)
- Change frequency (how often is it updated?)
- Monitoring in place? (yes/no)
- Documentation available? (yes/no)
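As a rough illustration, the register fields listed above could be captured as one structured record per use case. This is a minimal sketch, not a prescribed schema: the class name `RegisterEntry`, the field names, the `gaps` helper, and the sample values are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class RegisterEntry:
    """One row of an AI Use-Case Register (field names are illustrative)."""
    system_name: str
    business_function: str
    use_case: str
    business_owner: Optional[str]
    technical_owner: Optional[str]
    data_involved: List[str]
    decision_impact: List[str]
    vendor: Optional[str]  # None means no vendor dependency
    status: str  # "pilot", "live", or "paused"
    change_frequency: str
    monitoring_in_place: bool
    documentation_available: bool

    def gaps(self) -> List[str]:
        """Return the governance gaps visible from the register alone."""
        found = []
        if not self.business_owner:
            found.append("no business owner")
        if not self.technical_owner:
            found.append("no technical owner")
        if not self.monitoring_in_place:
            found.append("no monitoring")
        if not self.documentation_available:
            found.append("no documentation")
        return found


# Hypothetical entry, for illustration only.
entry = RegisterEntry(
    system_name="Credit scoring module",
    business_function="Risk",
    use_case="Scores retail loan applications",
    business_owner="Head of Retail Credit",
    technical_owner="Vendor manager",
    data_involved=["personal", "financial"],
    decision_impact=["customers"],
    vendor="ExampleVendor Ltd",
    status="live",
    change_frequency="quarterly",
    monitoring_in_place=False,
    documentation_available=False,
)
print(entry.gaps())  # ['no monitoring', 'no documentation']
```

A spreadsheet with the same columns serves the same purpose; the point of the structure is that gaps become visible as soon as an entry is recorded.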

A practical Caribbean reality

AI registers often reveal “shadow AI”:

- teams using public AI tools without approvals,
- vendors providing AI scoring in the background,
- staff using AI to generate client outputs,
- AI features switched on in platforms without risk assessment.

It’s not about policing. It’s about visibility.

4) Step Two: Tier AI Use Cases by Impact

Not all AI requires the same governance intensity. The fastest way to govern efficiently is to apply tiering.

Tier 1: High-impact AI (Board visibility)

AI that impacts:

- credit decisions,
- claims decisions,
- KYC/AML outcomes,
- fraud blocks,
- pricing decisions,
- HR screening and promotion decisions,
- regulatory compliance decisions,
- large-scale public service outcomes.

Tier 1 requires the highest level of documentation, testing, monitoring, and oversight.

Tier 2: Material operational AI (Executive oversight)

Examples:

- demand forecasting,
- inventory optimisation,
- customer segmentation and marketing optimisation,
- call centre decision support.

Tier 2 still requires controls and monitoring but can be less intensive.

Tier 3: Low-impact AI (Managed by line leaders)

Examples:

- internal productivity assistants,
- summarisation tools,
- drafting support,
- basic classification with minimal sensitive data.

Tier 3 governance can be lightweight, with safe-use guidance and security controls.

Tiering allows innovation without paralysis.
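The three tiers can be expressed as a simple lookup. The sketch below assumes each use case is tagged with one or more impact areas; the area labels and the `assign_tier` function are hypothetical names chosen to mirror the examples given for each tier. An unrecognised label is rejected rather than silently treated as low impact, which is a design choice of this sketch, not a rule from the framework.

```python
from typing import Iterable

# Impact-area labels are illustrative, mirroring the tier examples above.
TIER_1 = {"credit", "claims", "kyc_aml", "fraud_block", "pricing",
          "hr_screening", "regulatory_compliance", "public_service"}
TIER_2 = {"demand_forecasting", "inventory_optimisation",
          "customer_segmentation", "call_centre_support"}
TIER_3 = {"productivity_assistant", "summarisation",
          "drafting_support", "basic_classification"}


def assign_tier(areas: Iterable[str]) -> int:
    """Return the most demanding tier triggered by any impact area."""
    areas = set(areas)
    unknown = areas - (TIER_1 | TIER_2 | TIER_3)
    if not areas or unknown:
        # Force a human tiering decision instead of guessing.
        raise ValueError(f"manual tiering required: {sorted(unknown)}")
    if areas & TIER_1:
        return 1
    if areas & TIER_2:
        return 2
    return 3


print(assign_tier(["drafting_support", "credit"]))  # 1
print(assign_tier(["demand_forecasting"]))          # 2
print(assign_tier(["summarisation"]))               # 3
```

A use case that touches several areas takes the highest-impact tier, so a drafting assistant that also feeds credit decisions is governed as Tier 1.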

5) Step Three: Assign Ownership (Who Is Accountable?)

The most common failure in AI governance is unclear ownership.

Every AI use case must have:

A) A Business Owner (Accountable for outcomes)

This is the executive who benefits from the AI and is accountable for:

- use-case rationale,
- performance targets,
- customer impact,
- escalation decisions,
- ethical and fairness posture.

B) A Technical Owner (Accountable for system integrity)

Usually the IT/data lead or vendor manager, accountable for:

- access control,
- system configuration,
- integration points,
- data flows,
- change releases.

C) A Risk/Compliance Owner (Accountable for defensibility)

Accountable for:

- risk assessment,
- control mapping,
- evidence pack completeness,
- regulatory readiness.

In smaller organisations, one person may play multiple roles. That’s fine—what matters is clarity.

6) Step Four: Build Controls That Actually Prevent Harm

Controls are not “extra work.” They are what make AI safe to scale.

For Tier 1 AI, minimum controls should include:

Human-in-the-loop controls

- manual review thresholds,
- override processes,
- escalation paths for edge cases.
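A manual review threshold can be as simple as a routing rule in front of the model output. The sketch below is illustrative only: the function name `route_decision`, the `0.80` default threshold, and the three route labels are assumptions, and a real threshold would be set and approved by the business owner.

```python
def route_decision(confidence: float, is_edge_case: bool,
                   review_threshold: float = 0.80) -> str:
    """Decide whether an AI output may be actioned automatically."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if is_edge_case:
        return "escalate"        # edge cases follow the escalation path
    if confidence < review_threshold:
        return "manual_review"   # a human reviews before any action
    return "auto"


print(route_decision(0.95, is_edge_case=False))  # auto
print(route_decision(0.60, is_edge_case=False))  # manual_review
print(route_decision(0.95, is_edge_case=True))   # escalate
```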

Segregation of duties

- who can change the model vs who approves changes,
- who can access sensitive training data.

Input validation and data quality checks

Bad data creates bad AI. Controls should cover:

- missing data handling,
- anomalous data flags,
- source reliability.
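These three checks can run as a gate before a record reaches the model. The following is a minimal sketch under stated assumptions: the function `check_inputs`, the `source` field, and the sample ranges are hypothetical, and real rules would come from the data owner.

```python
from typing import Dict, List, Set, Tuple


def check_inputs(record: dict,
                 required: List[str],
                 ranges: Dict[str, Tuple[float, float]],
                 trusted_sources: Set[str]) -> List[str]:
    """Return a list of data quality issues; an empty list means pass."""
    issues = []
    # Missing data handling: required fields must be present and non-null.
    for field in required:
        if record.get(field) is None:
            issues.append(f"missing: {field}")
    # Anomalous data flags: numeric values outside the agreed range.
    for field, (low, high) in ranges.items():
        value = record.get(field)
        if value is not None and not low <= value <= high:
            issues.append(f"anomalous: {field}={value}")
    # Source reliability: only accept data from approved systems.
    if record.get("source") not in trusted_sources:
        issues.append(f"untrusted source: {record.get('source')}")
    return issues


record = {"income": -500, "age": None, "source": "spreadsheet_upload"}
print(check_inputs(record,
                   required=["income", "age"],
                   ranges={"income": (0, 10_000_000), "age": (18, 120)},
                   trusted_sources={"core_banking"}))
```

Records that return any issue would typically be held for review rather than scored.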

Decision logging

Every material AI decision should generate a log entry recording:

- timestamp,
- inputs summary,
- outputs,
- confidence score,
- action taken,
- human override (if any).
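One way to capture these six fields is a structured record written as a single JSON line per decision. This is a sketch, not a mandated format: the function names `build_decision_record` and `to_log_line` and the key names are assumptions for illustration.

```python
import json
from datetime import datetime, timezone
from typing import Optional


def build_decision_record(inputs_summary: dict,
                          output: str,
                          confidence: float,
                          action_taken: str,
                          human_override: Optional[str] = None,
                          now: Optional[datetime] = None) -> dict:
    """Assemble one audit log record for a material AI decision."""
    timestamp = now or datetime.now(timezone.utc)
    return {
        "timestamp": timestamp.isoformat(),
        "inputs_summary": inputs_summary,
        "output": output,
        "confidence": confidence,
        "action_taken": action_taken,
        "human_override": human_override,  # None when no override occurred
    }


def to_log_line(record: dict) -> str:
    """Serialise a record as one JSON line for an append-only log."""
    return json.dumps(record, sort_keys=True)


record = build_decision_record(
    inputs_summary={"product": "personal_loan", "amount_band": "medium"},
    output="decline",
    confidence=0.72,
    action_taken="sent_to_manual_review",
    human_override="approved_by_credit_officer",
)
print(to_log_line(record))
```

Logging a summary of inputs rather than the raw values keeps the audit trail useful without copying sensitive data into the log.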

Customer recourse (where relevant)

If AI affects customers:

- how do they challenge outcomes?
- what is the review process?
- how is fairness demonstrated?

7) Step Five: Design Transparency and Explainability That Works

Explainability does not mean exposing proprietary algorithms. It means being able to answer:

- What factors influenced the decision?
- What data was used?
- What limitations exist?
- What safeguards exist?
- How can a decision be reviewed?

In practice, this requires two types of transparency:

Operational transparency (internal)

- decision maps,
- model documentation,
- governance approvals,
- testing results.

Stakeholder transparency (external)

- plain-language explanations for customers,
- HR decision transparency statements,
- privacy notices updated for AI.

In the Caribbean, transparency is a competitive advantage. Customers and regulators respond positively when organisations demonstrate clarity and maturity.

8) Step Six: Testing and Assurance — Proving Trust with Evidence

Trust is not claimed. It is demonstrated.

Tier 1 testing should include:

- performance testing (accuracy, false positives/negatives)
- robustness testing (edge cases, unusual patterns)
- bias testing (where people or fairness outcomes are involved)
- security testing (prompt injection risks for GenAI, data leakage risks)
- audit trail validation (evidence pack completeness)
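Two of these tests reduce to simple arithmetic on a labelled test set. The sketch below computes false positive and false negative rates, plus the ratio of favourable-outcome rates between two groups as one possible bias indicator. The function names, the binary 0/1 encoding, and the sample data are assumptions; which fairness metric is appropriate depends on the use case and is not prescribed here.

```python
from typing import Dict, List


def error_rates(y_true: List[int], y_pred: List[int]) -> Dict[str, float]:
    """False positive and false negative rates for binary outcomes (1 = positive)."""
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    negatives = y_true.count(0)
    positives = y_true.count(1)
    return {
        "false_positive_rate": fp / negatives if negatives else 0.0,
        "false_negative_rate": fn / positives if positives else 0.0,
    }


def selection_rate_ratio(y_pred: List[int], groups: List[str],
                         group_a: str, group_b: str) -> float:
    """Ratio of favourable-outcome rates (group_a / group_b); 1.0 means parity."""
    def rate(group: str) -> float:
        preds = [p for p, g in zip(y_pred, groups) if g == group]
        if not preds:
            raise ValueError(f"no records for group {group!r}")
        return sum(preds) / len(preds)

    rate_b = rate(group_b)
    if rate_b == 0:
        raise ValueError("group_b has no favourable outcomes; ratio undefined")
    return rate(group_a) / rate_b


# Toy data, for illustration only.
y_true = [1, 1, 0, 0]
y_pred = [1, 0, 1, 1]
groups = ["a", "a", "b", "b"]
print(error_rates(y_true, y_pred))
print(selection_rate_ratio(y_pred, groups, "a", "b"))  # 0.5
```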

The “AI Evidence Pack”

Every Tier 1 AI system should have a standard “audit-ready pack” containing:

- AI register entry and tiering
- use-case approval and business rationale
- model documentation and limitations
- data flow diagram and privacy assessment
- control design and testing results
- monitoring plan and threshold rules
- change management log and version history
- vendor assurance artefacts (if third-party)
- incident response plan and escalation path

When this exists, audits become easier. Regulators become more confident. Partners trust faster.
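Evidence pack completeness can be checked mechanically against the list above. In this sketch the artefact keys, the `missing_artefacts` function, and the file paths are hypothetical; the pack is modelled as a mapping from artefact name to the location of the supporting document.

```python
from typing import Dict, List

# Artefact keys are illustrative names for the items in the evidence pack.
REQUIRED_ARTEFACTS = [
    "register_entry_and_tiering",
    "use_case_approval",
    "model_documentation",
    "data_flow_and_privacy_assessment",
    "control_design_and_testing",
    "monitoring_plan",
    "change_log",
    "incident_response_plan",
]
THIRD_PARTY_ARTEFACT = "vendor_assurance"


def missing_artefacts(pack: Dict[str, str], third_party: bool) -> List[str]:
    """List required artefacts with no document reference in the pack."""
    required = list(REQUIRED_ARTEFACTS)
    if third_party:
        required.append(THIRD_PARTY_ARTEFACT)
    return [name for name in required if not pack.get(name)]


pack = {
    "register_entry_and_tiering": "register/credit-scoring.md",
    "use_case_approval": "approvals/credit-scoring.pdf",
    "model_documentation": "docs/model-card.md",
}
print(missing_artefacts(pack, third_party=True))
```

Running a check like this before each assurance review shows at a glance which artefacts still need to be produced.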

9) Step Seven: Change Management and Monitoring (AI Is Never “Done”)

AI governance fails when organisations treat go-live as the finish line.

AI needs:

- continuous monitoring,
- periodic assurance,
- change management governance.

Minimum monitoring metrics (Tier 1)

- drift indicators (changes in inputs or behaviour)
- error and exception rates
- fairness metrics (if applicable)
- complaint trends related to outcomes
- vendor update logs and release notes
- incident tracking and near-miss reporting
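As one example of a drift indicator, the Population Stability Index (PSI) compares how an input was distributed across bins at approval time with how it is distributed now. PSI is a commonly used metric chosen here for illustration; the framework does not name a specific drift measure, and the bin proportions below are made up.

```python
import math
from typing import Sequence


def population_stability_index(expected: Sequence[float],
                               actual: Sequence[float],
                               eps: float = 1e-6) -> float:
    """PSI = sum over bins of (actual - expected) * ln(actual / expected).

    Both arguments are per-bin proportions that each sum to 1.
    A value of 0 means the two distributions are identical.
    """
    if len(expected) != len(actual):
        raise ValueError("expected and actual must have the same number of bins")
    total = 0.0
    for e, a in zip(expected, actual):
        e = max(e, eps)  # avoid log(0) and division by zero on empty bins
        a = max(a, eps)
        total += (a - e) * math.log(a / e)
    return total


baseline = [0.25, 0.25, 0.25, 0.25]  # distribution at approval time
current = [0.10, 0.20, 0.30, 0.40]   # distribution this month
print(population_stability_index(baseline, baseline))  # 0.0
print(population_stability_index(baseline, current))
```

The alert threshold for a metric like this belongs in the monitoring plan, so that a breach triggers a documented review rather than an ad-hoc judgement.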

Change management rules

- no model changes without approval
- vendor updates must be reviewed
- major changes require re-testing
- documentation updated with each version

This turns AI into a controlled system—not a “moving risk.”
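The four change management rules can be enforced as a release gate. The sketch below assumes a change request is recorded with a handful of yes/no fields; the `ChangeRequest` class, its field names, and the `release_blockers` function are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ChangeRequest:
    """A proposed change to a governed AI system (fields are illustrative)."""
    description: str
    approved: bool
    is_vendor_update: bool
    vendor_update_reviewed: bool
    is_major: bool
    retested: bool
    documentation_updated: bool


def release_blockers(change: ChangeRequest) -> List[str]:
    """Return the rules a change still violates; empty means it may be released."""
    blockers = []
    if not change.approved:
        blockers.append("change not approved")
    if change.is_vendor_update and not change.vendor_update_reviewed:
        blockers.append("vendor update not reviewed")
    if change.is_major and not change.retested:
        blockers.append("major change not re-tested")
    if not change.documentation_updated:
        blockers.append("documentation not updated")
    return blockers


change = ChangeRequest(
    description="Vendor model upgrade",
    approved=True,
    is_vendor_update=True,
    vendor_update_reviewed=False,
    is_major=True,
    retested=False,
    documentation_updated=True,
)
print(release_blockers(change))
# ['vendor update not reviewed', 'major change not re-tested']
```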

10) Governing Third-Party AI Vendors (The Overlooked Control)

Most Caribbean organisations rely on vendor AI. Governance must extend beyond your walls.

Your vendor governance checklist should include:

- audit rights (or independent assurance reports)
- incident notification timelines
- update/change notification requirements
- data retention and data ownership rules
- bias/fairness commitments for high-impact systems
- performance SLAs aligned to business outcomes
- exit and portability provisions

Vendor AI should be treated like any other material outsourcing: contract + controls + monitoring + exit readiness.

Moving Forward: The Dawgen Global Advantage

Dawgen Global’s approach to AI governance is designed to be:

- Audit-ready (evidence is built into design)
- Practical (scaled governance through tiering)
- Regionally relevant (Caribbean realities embedded)
- Borderless (high-quality delivery at speed)
- Outcome-oriented (trust accelerates value capture)

With Dawgen TRUST™, organisations can move beyond pilot experimentation into controlled, scalable adoption—without slowing innovation.

Next Step: Request a Proposal

If your organisation is adopting AI—through vendors, internal models, or AI-enabled platforms—now is the time to make governance real.

To request a proposal for Dawgen Global AI Governance & Assurance Services:

📩 Email: [email protected]

💬 WhatsApp Global: 15557959071

Send your industry, territories, and AI use cases (HR, fraud, credit, claims, CX, analytics). We will propose a structured governance, controls, and assurance roadmap aligned to your risk exposure and strategic goals.

About Dawgen Global

Dawgen Global is one of the top accounting and advisory firms in Jamaica and the Caribbean, offering integrated multidisciplinary services in audit, tax, advisory, risk assurance, cybersecurity, and digital transformation. Through our borderless, high-quality delivery methodology, we help organisations deploy AI responsibly—by embedding governance, controls, and audit-ready assurance that builds trust and protects value.

Join hands with Dawgen Global. Together, let’s venture into a future brimming with opportunities and achievements.