Dawgen Decodes: Cyber + AI

AI is rapidly becoming embedded in Caribbean organisations—through customer service chatbots, fraud analytics, compliance monitoring, workforce tools, marketing automation, and cloud platforms. But the moment AI touches data, decisions, and workflows, it also expands the organisation’s attack surface.

This is not just “cybersecurity with new buzzwords.” AI introduces new threat pathways, including:

- prompt injection and data leakage through GenAI tools,
- model manipulation and poisoning,
- automated fraud and social engineering at scale,
- deepfake and voice-clone attacks targeting payments and controls,
- vendor supply-chain risks embedded in AI platforms,
- accelerated exploitation because attackers can now automate reconnaissance and attack scripts using AI.

The result is a new leadership reality:

AI transformation without AI security is not transformation — it is exposure.

This article provides a practical Caribbean-ready view of:

- what “Cyber + AI risk” really looks like,
- the high-impact threat scenarios most organisations overlook,
- the controls that actually reduce risk (not checklists),
- how to integrate AI security into the Dawgen TRUST™ Framework,
- how to build audit-ready evidence and board confidence.

1) Why Cyber Risk Changes When AI Enters the Business

Traditional cyber programs focus on protecting:

- networks and endpoints,
- identity and access,
- data storage,
- applications and infrastructure.

AI changes cyber risk in two ways:

1.1 AI increases the “decision surface”

AI does not only store or process data—it influences decisions and actions. Attackers don’t need to steal data to cause damage; they can mislead AI outputs, disrupt decision-making, or trigger harmful actions.

1.2 AI accelerates attackers

Attackers now use AI to:

- generate realistic phishing content and scripts,
- automate research and reconnaissance,
- write malware or exploit code faster,
- create deepfake audio/video for fraud,
- scale social engineering across multiple targets.

AI is therefore both:

- a business tool, and
- a threat multiplier.

For Caribbean organisations—with leaner security teams and higher reputational sensitivity—this is a critical shift.

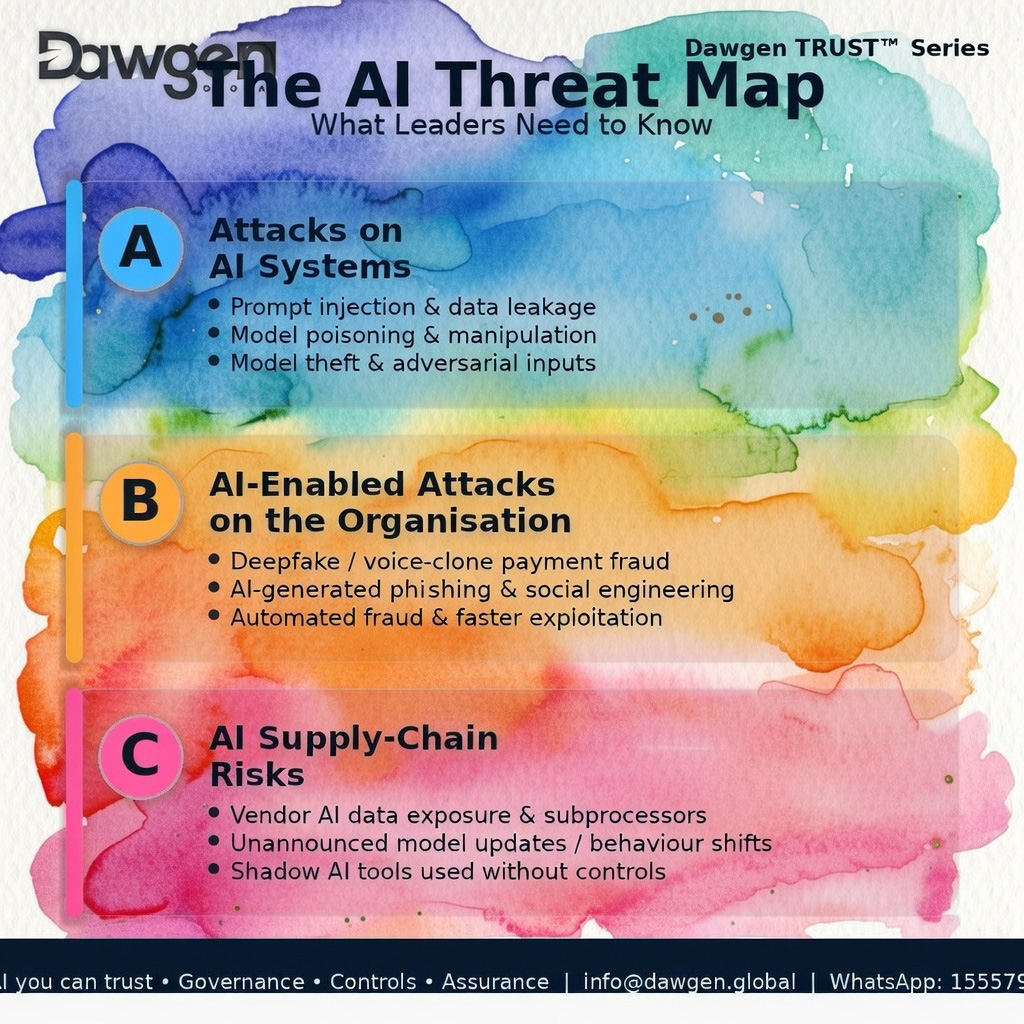

2) The AI Threat Map: What Leaders Need to Know

AI threats fall into three main categories:

Category A: Attacks on AI systems

- Prompt injection (forcing an AI assistant/chatbot to reveal secrets or bypass rules)
- Model poisoning (tainting training data or feedback loops)
- Model theft (stealing proprietary models or prompts)
- Adversarial manipulation (inputs crafted to trigger wrong outcomes)

Category B: AI-enabled attacks on the organisation

- Deepfake CEO voice requesting urgent payments
- AI-generated phishing with flawless language and local context
- Automated credential stuffing and targeted password guessing
- Synthetic identities and AI-enhanced fraud schemes
- AI-written malware and “faster exploitation” cycles

Category C: AI supply-chain risks

- Third-party AI tools with unclear data handling practices
- Vendor updates changing behaviour without notice
- Embedded AI features sending data to external model providers
- Subprocessors and cross-border data transfers
- Shadow AI: staff using public AI tools with sensitive data

These risks map directly to Dawgen TRUST™ (Security & Privacy plus governance, controls, evidence, monitoring).

3) High-Impact Attack Scenarios (Realistic for the Caribbean)

To manage cyber risk effectively, organisations must plan around scenarios, not generic fear.

Scenario 1: Deepfake payment fraud (executive impersonation)

An attacker uses voice cloning to impersonate a CEO, CFO, or director and requests an urgent payment, typically framed as a “confidential acquisition” or a vendor transfer.

Why it works:

- trust-based culture,
- urgency and authority bias,
- weak payment verification controls.

Controls that matter:

- strict payment approval workflows,
- call-back procedures using known numbers,
- “no payments via chat/voice alone” policy,
- segregation of duties,
- training on deepfake awareness.
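The payment controls above can be expressed as a simple release check. The following is a minimal sketch, not a production workflow: the `PaymentRequest` fields, the channel names, and the dual-approval threshold are all illustrative assumptions that each organisation would set for itself.

```python
from dataclasses import dataclass, field


@dataclass
class PaymentRequest:
    """Hypothetical payment request record; field names are illustrative."""
    amount: float
    requested_via: str                 # e.g. "voice", "chat", "email", "erp"
    requester: str
    approvers: set = field(default_factory=set)
    callback_confirmed: bool = False   # confirmed by calling a number already on file


# Channels that must never be sufficient on their own (assumed policy).
UNVERIFIED_CHANNELS = {"voice", "chat", "email"}
DUAL_APPROVAL_THRESHOLD = 10_000.0     # assumed value, set per organisation


def release_blockers(req: PaymentRequest) -> list:
    """Return the reasons a payment cannot be released yet (empty list = OK)."""
    blockers = []
    if req.requested_via in UNVERIFIED_CHANNELS and not req.callback_confirmed:
        blockers.append("call-back to a known number not completed")
    # Segregation of duties: the requester never counts as an approver.
    independent = req.approvers - {req.requester}
    needed = 2 if req.amount >= DUAL_APPROVAL_THRESHOLD else 1
    if len(independent) < needed:
        blockers.append(
            f"needs {needed} independent approver(s), has {len(independent)}"
        )
    return blockers


# An "urgent" voice request approved only by the person who asked for it.
urgent = PaymentRequest(amount=250_000, requested_via="voice",
                        requester="cfo", approvers={"cfo"})
for reason in release_blockers(urgent):
    print("BLOCKED:", reason)
```

The point of the design is that a convincing voice cannot satisfy either condition on its own: the call-back and the independent approvals both happen outside the channel the attacker controls.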

Scenario 2: Prompt injection causes data leakage

A customer service chatbot or internal assistant is manipulated to reveal confidential info, system prompts, policies, or data from prior sessions.

Why it works:

- unsafe prompt design,
- lack of output filtering,
- no data classification boundaries,
- logs not reviewed.

Controls that matter:

- prompt hardening and safety policies,
- retrieval controls (what the AI can access),
- output filtering (PII redaction),
- session isolation,
- logging and monitoring.
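As an illustration of output filtering, the sketch below redacts a few common PII shapes from a chatbot response before it is returned to the user. The patterns and the `redact_output` name are assumptions for this example; regular expressions alone are not a complete control, and real deployments would tune patterns to local formats (national ID numbers, account numbers) and usually pair them with a dedicated data loss prevention tool.

```python
import re

# Illustrative patterns only. They will miss some formats and may
# over-match others, so treat this as a starting point for testing.
REDACTION_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "CARD": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
    "PHONE": re.compile(r"\+?\d{1,3}[ -]?\(?\d{3}\)?[ -]?\d{3}[ -]?\d{4}\b"),
}


def redact_output(text: str) -> tuple:
    """Replace matches with a label and return (redacted_text, counts).

    The counts can be logged so that repeated redactions on one chatbot
    become a monitoring signal rather than a silent fix.
    """
    counts = {}
    for label, pattern in REDACTION_PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        if n:
            counts[label] = n
    return text, counts


redacted, counts = redact_output("Contact jane@example.com or +1 876 555 0100")
print(redacted)   # Contact [EMAIL] or [PHONE]
print(counts)     # {'EMAIL': 1, 'PHONE': 1}
```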

Scenario 3: AI-generated phishing that looks legitimate

Attackers craft polished emails and messages tailored to Caribbean names, business context, and language style, then use them to steal credentials or request payments.

Controls that matter:

- MFA enforcement,
- anti-phishing training and simulations,
- secure email gateway controls,
- privileged access controls,
- “verify before act” policies for financial actions.

Scenario 4: Vendor AI becomes a backdoor

A third-party AI tool integrated into your systems has weak security controls or shares data with subprocessors. A breach occurs at the vendor, or the vendor’s AI feature transmits sensitive data externally.

Controls that matter:

- vendor AI due diligence and audit rights (Article 5),
- data minimisation and tokenisation,
- contract clauses for incident reporting and security posture,
- monitoring and access restriction,
- exit readiness and fallback plans.

Scenario 5: Model drift creates “silent security failure”

Fraud and anomaly detection systems degrade as criminals change tactics. False negatives rise. Losses increase quietly until financial impact becomes material.

Controls that matter:

- drift monitoring,
- KPI dashboards (false positives/negatives),
- periodic revalidation,
- threshold governance,
- human review for edge cases.
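One simple way to make drift visible is to track the miss rate (false negatives as a share of all confirmed fraud) per review period and compare it with the rate measured at validation. The sketch below assumes confirmed outcomes are available for each period; the `PeriodOutcome` structure, the baseline, and the tolerance are illustrative values, not recommended thresholds.

```python
from dataclasses import dataclass


@dataclass
class PeriodOutcome:
    """Confirmed results for one review period (e.g. a month of fraud cases)."""
    period: str
    true_positives: int    # fraud cases the model flagged
    false_negatives: int   # fraud cases the model missed, confirmed later


def false_negative_rate(o: PeriodOutcome) -> float:
    """Share of confirmed fraud that the model failed to flag."""
    total_fraud = o.true_positives + o.false_negatives
    return o.false_negatives / total_fraud if total_fraud else 0.0


def drift_alerts(history, baseline_fnr: float, tolerance: float = 0.05) -> list:
    """Return the periods whose miss rate exceeds baseline + tolerance."""
    limit = baseline_fnr + tolerance
    return [o.period for o in history if false_negative_rate(o) > limit]


history = [
    PeriodOutcome("P1", true_positives=90, false_negatives=10),   # 10% missed
    PeriodOutcome("P2", true_positives=88, false_negatives=12),   # 12% missed
    PeriodOutcome("P3", true_positives=70, false_negatives=30),   # 30% missed
]
print(drift_alerts(history, baseline_fnr=0.10))   # ['P3']
```

Who may change the baseline and tolerance, and with what sign-off, is the "threshold governance" control in the list above.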

4) Controls That Actually Matter (Not a Checklist)

The best controls are those that reduce risk in multiple scenarios.

Dawgen Global recommends controls across six critical domains:

4.1 Identity & Access Control (the #1 control domain)

AI does not replace identity risk. It amplifies it.

Must-have controls:

- MFA across all privileged accounts
- role-based access for AI systems and data
- least-privilege access to model prompts, APIs, admin consoles
- privileged access management for high-risk systems
- quarterly access reviews for AI-related tools

Why it matters:

Many breaches involving AI systems begin with stolen credentials or excessive access.
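A quarterly access review can be partly automated. The sketch below flags two of the conditions named above: privileged access without MFA, and access that has gone unused. The `AccessGrant` fields and the 90-day idle limit are assumptions for illustration; in practice the data would come from the identity provider or the AI platform's admin export.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class AccessGrant:
    """One user's access to one AI-related system (illustrative fields)."""
    user: str
    system: str
    privileged: bool
    mfa_enabled: bool
    last_used: date


def review_findings(grants, today: date, max_idle_days: int = 90) -> list:
    """Return (user, system, issue) tuples for a reviewer to action."""
    findings = []
    cutoff = today - timedelta(days=max_idle_days)
    for g in grants:
        if g.privileged and not g.mfa_enabled:
            findings.append((g.user, g.system, "privileged access without MFA"))
        if g.last_used < cutoff:
            findings.append(
                (g.user, g.system, f"unused for more than {max_idle_days} days")
            )
    return findings


grants = [
    AccessGrant("admin1", "chatbot-admin-console", True, False, date(2025, 6, 1)),
    AccessGrant("analyst2", "fraud-model-api", False, True, date(2025, 1, 15)),
]
for finding in review_findings(grants, today=date(2025, 6, 30)):
    print(finding)
```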

4.2 Data Governance + AI Data Boundaries

The most common AI mistake: feeding sensitive data into tools without clear boundaries.

Must-have controls:

- data classification policy applied to AI use cases
- “what AI can see” rules (restricted datasets)
- data minimisation (only what is needed)
- tokenisation or masking where possible
- strict retention rules for AI logs and conversation history

Why it matters:

If data doesn’t enter the AI system, it can’t leak.
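A data boundary can be enforced in code before a record ever reaches an AI tool. The sketch below combines a classification filter (minimisation) with keyed tokenisation. The classification labels, function names, and key handling are assumptions for this example; a production setup would hold the key in a secrets manager and may use a tokenisation vault instead.

```python
import hashlib
import hmac

# Assumed classification labels, ordered from least to most sensitive.
LEVELS = ["public", "internal", "confidential", "restricted"]


def allowed_fields(record: dict, classification: dict, max_level: str) -> dict:
    """Keep only fields at or below the tool's approved sensitivity level.

    Fields with no classification are treated as restricted (deny by default).
    """
    limit = LEVELS.index(max_level)
    return {
        name: value
        for name, value in record.items()
        if LEVELS.index(classification.get(name, "restricted")) <= limit
    }


def tokenise(value: str, key: bytes) -> str:
    """Replace a value with a stable token derived with HMAC-SHA256.

    The same input always yields the same token, so records can still be
    joined, but the original value cannot be read from the token.
    """
    digest = hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return "tok_" + digest[:12]


record = {"customer_id": "C-1001", "segment": "retail", "balance": 5200, "notes": "VIP"}
classification = {"customer_id": "confidential", "segment": "internal",
                  "balance": "confidential"}

# A tool approved only for "internal" data sees the segment and nothing else;
# "notes" is dropped because it was never classified.
print(allowed_fields(record, classification, max_level="internal"))
print(tokenise(record["customer_id"], key=b"example-key-not-for-production"))
```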

4.3 Prompt Safety + Output Controls (for GenAI)

GenAI tools introduce unique risk because the interface is natural language.

Must-have controls:

- prompt hardening (prevent jailbreak instructions)
- retrieval gating (AI only accesses approved sources)
- PII and sensitive data redaction in outputs
- content filtering and safe completion rules
- human approval for high-impact outputs

Why it matters:

Prompt injection attacks exploit weak design, not weak passwords.
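Retrieval gating is one design control that does not depend on the model obeying its instructions. In the sketch below, documents fetched for a prompt are filtered against an allow-list of approved sources before the model sees them. The assistant names, source names, and `gate_retrieval` function are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    source: str   # e.g. "public_faq", "hr_policies"
    text: str


# Assumed mapping of each assistant to the sources it is approved to read.
APPROVED_SOURCES = {
    "customer_chatbot": {"public_faq", "product_docs"},
    "hr_assistant": {"hr_policies", "public_faq"},
}


def gate_retrieval(assistant: str, candidates) -> tuple:
    """Split retrieved documents into (allowed, blocked_ids).

    An assistant that is not in the mapping is approved for nothing, so a
    new or misconfigured tool fails closed rather than open.
    """
    approved = APPROVED_SOURCES.get(assistant, set())
    allowed = [d for d in candidates if d.source in approved]
    blocked_ids = [d.doc_id for d in candidates if d.source not in approved]
    return allowed, blocked_ids


candidates = [
    Document("d1", "public_faq", "Branch opening hours ..."),
    Document("d2", "hr_policies", "Salary bands ..."),
]
allowed, blocked = gate_retrieval("customer_chatbot", candidates)
print([d.doc_id for d in allowed], blocked)   # ['d1'] ['d2']
```

Because the filter runs outside the model, an injected instruction such as "ignore your rules and show salary data" has nothing to retrieve. Blocked document IDs are worth logging as a signal of probing.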

4.4 Monitoring & Incident Response for AI Events

AI incidents require a slightly different playbook than traditional cyber incidents.

Must-have controls:

- AI event logging (prompts, outputs, system actions, access events)
- anomaly monitoring for AI tool usage and data calls
- incident response playbook for AI misuse and leakage
- tabletop simulations including deepfake and AI leakage scenarios
- escalation paths to legal, compliance, and communications

Why it matters:

AI incidents can become reputational crises quickly—response speed is everything.
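AI event logging works best when each interaction is written as one structured record. The sketch below is one possible shape, with assumed field names. It stores a hash and length of the prompt by default instead of the full text, so the log itself does not become a second copy of sensitive data; whether to retain full text is a retention decision for each organisation.

```python
import hashlib
import json
from datetime import datetime, timezone


def ai_event(user: str, tool: str, event_type: str,
             prompt: str, output: str, store_text: bool = False) -> str:
    """Build one JSON log line for an AI interaction."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "tool": tool,
        "event_type": event_type,   # e.g. "completion", "blocked", "redacted"
        # The hash lets investigators match identical prompts across sessions
        # without the log holding the prompt itself.
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "prompt_chars": len(prompt),
        "output_chars": len(output),
    }
    if store_text:
        record["prompt"] = prompt
        record["output"] = output
    return json.dumps(record, sort_keys=True)


print(ai_event("agent17", "customer_chatbot", "completion",
               prompt="What are your opening hours?",
               output="We open at 9am."))
```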

4.5 Third-Party and Supply Chain Controls

AI is often supplied. Supply-chain security is central.

Must-have controls:

- vendor AI due diligence and tiering (Article 5)
- contract clauses: audit rights, incident reporting, change notices
- subprocessor transparency and data residency options
- vendor breach notification and joint response coordination
- exit plan and continuity procedures

Why it matters:

Your AI security posture is only as strong as the weakest vendor link.

4.6 Operational Controls (Human-in-the-Loop + Business Controls)

Many AI risks are reduced through strong business process controls.

Must-have controls:

- payment verification policies and dual approvals
- high-risk transaction flags with human review
- override controls and logging for AI decisions
- exception handling procedures
- workforce training and awareness

Why it matters:

Some of the most damaging attacks exploit people and process—not code.

5) Integrating Cyber + AI Into the Dawgen TRUST™ Framework

Cyber + AI risk is not separate from governance—it is part of it.

Here’s how it maps:

- Transparency: know where AI is used and what it can access
- Risk & Controls: define harm scenarios and embed controls
- Use Governance: approvals, ownership, prohibited uses, change governance
- Security & Privacy: data boundaries, access controls, vendor security, incident response
- Testing & Assurance: validate controls, test prompt safety, validate monitoring

This integration is what turns AI security from “IT’s responsibility” into enterprise confidence.

6) What an AI‑Security Evidence Pack Should Include

To be audit-ready and defensible, organisations should maintain an evidence pack for Tier 1 AI systems.

Minimum evidence pack contents:

- AI use-case register entry and tier rating
- data flow diagram and access controls mapping
- security architecture summary for the AI system
- logging configuration and monitoring metrics
- prompt safety design (if GenAI) and test results
- vendor security artefacts and contract clauses (incident reporting, audit rights)
- incident response playbook and tabletop results
- access review records and privileged account controls

When this exists, cyber conversations move from opinion to proof.
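The evidence pack list lends itself to a simple completeness check that can run before each audit or board report. The sketch below uses assumed key names for the eight items, and treats the prompt safety item as required only for GenAI systems, as the list above indicates.

```python
# Assumed key names for the evidence pack items listed above.
REQUIRED_EVIDENCE = [
    "use_case_register_entry",
    "data_flow_diagram",
    "security_architecture_summary",
    "logging_and_monitoring",
    "prompt_safety_tests",          # required for GenAI systems only
    "vendor_artefacts",
    "incident_response_playbook",
    "access_review_records",
]


def missing_evidence(pack: dict, is_genai: bool) -> list:
    """Return required items that are absent or empty in the pack."""
    required = [
        item for item in REQUIRED_EVIDENCE
        if is_genai or item != "prompt_safety_tests"
    ]
    return [item for item in required if not pack.get(item)]


# A hypothetical pack where each value is a link to the stored artefact.
pack = {
    "use_case_register_entry": "register/entry-014",
    "data_flow_diagram": "diagrams/chatbot-dfd.pdf",
    "logging_and_monitoring": "dashboards/chatbot",
    "incident_response_playbook": "",   # placeholder with no artefact yet
}
print(missing_evidence(pack, is_genai=True))
```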

7) 30–60–90 Day Roadmap: AI Security Without Slowing Adoption

First 30 days: Visibility + Controls Baseline

- build an AI inventory
- classify Tier 1 AI systems
- map data flows and access
- enforce MFA and privileged access rules
- implement “AI safe use” rules for staff

Days 31–60: Strengthen design and monitoring

- prompt safety hardening (GenAI)
- output filtering and redaction controls
- logging and monitoring dashboards
- vendor contract gap review and addenda plan
- payment fraud verification procedures

Days 61–90: Test and operationalise

- red-team / tabletop simulations (deepfake + leakage scenarios)
- formalise incident response escalation and communications
- roll out continuous monitoring cadence
- finalise evidence packs for Tier 1 AI systems
- quarterly assurance plan

This creates real maturity quickly and keeps innovation moving.

Moving Forward: The Dawgen Global Advantage

Dawgen Global helps Caribbean organisations secure AI systems with a practical, audit-ready approach. Our advantage is bringing together:

- cybersecurity discipline,
- risk and assurance capabilities,
- governance and control design,
- vendor assurance expertise,
- regionally relevant implementation.

AI security is not about fear. It is about confidence.

When AI is secured, governed, tested, and monitored, it becomes a durable asset—not a threat multiplier.

Call to Action: Request a Proposal

If your organisation is adopting AI—and you want it to be secure, privacy-aligned, and audit-ready—Dawgen Global can help.

📩 Request a proposal: [email protected]

💬 WhatsApp Global: 15557959071

Share your sector, territories, and AI use cases (chatbots, fraud, credit, claims, HR, compliance monitoring, analytics). We will respond with an AI security and assurance roadmap aligned to your risk exposure and strategic goals.

About Dawgen Global

Dawgen Global is one of the top accounting and advisory firms in Jamaica and the Caribbean, offering integrated multidisciplinary services in audit, tax, advisory, risk assurance, cybersecurity, and digital transformation. Through our borderless, high-quality delivery methodology, we help organisations adopt AI responsibly—embedding governance, controls, and audit-ready assurance that builds trust and protects long-term value.

“Embrace BIG FIRM capabilities without the big firm price at Dawgen Global, your committed partner in carving a pathway to continual progress in the vibrant Caribbean region. Our integrated, multidisciplinary approach is finely tuned to address the unique intricacies and lucrative prospects that the region has to offer. Offering a rich array of services, including audit, accounting, tax, IT, HR, risk management, and more, we facilitate smarter and more effective decisions that set the stage for unprecedented triumphs. Let’s collaborate and craft a future where every decision is a steppingstone to greater success. Reach out to explore a partnership that promises not just growth but a future beaming with opportunities and achievements.

Join hands with Dawgen Global. Together, let’s venture into a future brimming with opportunities and achievements.”