| IN THIS ARTICLE

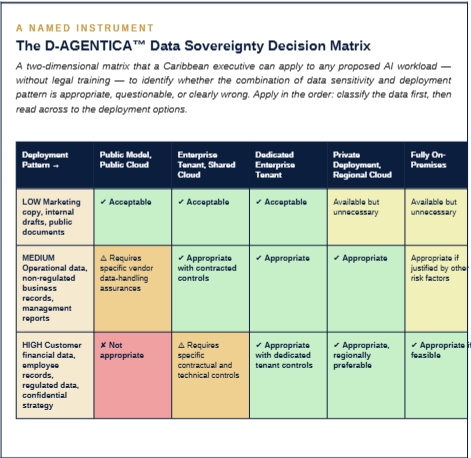

The fourth article of twelve. It opens Act II of the series (The Guardrails) by addressing the single most common objection every Caribbean board raises when AI is proposed: where does our data go? Articles 1, 2 and 3 established why Caribbean executives must engage with AI, what the technology actually is, and what it costs and returns at SME scale. Act II now turns to the question of how to adopt the technology responsibly in our region. This article takes the first and most important guardrail: data sovereignty.

By the end of this article you will be able to:

1. Explain to your board, in language a non-specialist can follow, the five deployment patterns for AI workloads and how they differ on the specific dimension of where data physically resides and who can access it.
2. Classify any proposed AI workload against three sensitivity tiers, then apply the D-AGENTICA™ Data Sovereignty Decision Matrix to identify whether the proposed deployment is appropriate, questionable, or clearly wrong.
3. Recognise the four specific Caribbean regulatory considerations that most commonly shape the data sovereignty calculus for organisations operating in our region, and the posture Caribbean supervisors are signalling they will take as AI-specific regulation develops over the next thirty-six months. |

Early in 2026, a chief risk officer at a mid-sized Caribbean financial services institution telephoned me with what he described as a carefully prepared question. His executive team had approved the evaluation of an AI platform for customer service. The platform was well-reviewed, the vendor reputable, the business case strong. His board had asked him a single question before the pilot was authorised, and he did not feel he had given an adequate answer. The question was: where does our data go when this system is processing it, and who can see it?

‘I have read the vendor’s data handling documentation three times,’ he said to me. ‘I can tell you which data centre the servers are in. I can tell you the certifications the vendor holds. I cannot tell you, in a way my board chair can understand, whether our data is as protected as it needs to be, and I cannot tell you whether the answer we are giving our regulators if they ask us about this deployment in 2027 is going to survive scrutiny. I feel as though the whole AI conversation in our organisation is stuck on this question — and I do not know how to unstick it.’

This article is written to help with exactly that conversation. In my experience, it is the single most common conversation standing between Caribbean organisations and their first serious AI deployment. More than any other objection, the data sovereignty question is where Caribbean boards pause, Caribbean compliance teams hesitate, and Caribbean executives either find a defensible path forward or defer the decision indefinitely.

Articles 1, 2 and 3 of this series established the foundations of the AI conversation. Article 1 argued that the current cycle matters and that the Caribbean’s usual lag pattern will not provide cover. Article 2 installed the vocabulary to distinguish genuinely agentic systems from chatbots marketed as such. Article 3 calibrated the economics for Caribbean SME scale and introduced the Sequencing Framework that the organisations getting this right are actually following. Those three articles closed Act I of the series — The Orientation.

Act II opens here. It addresses the question of how to adopt this technology responsibly in our region: the guardrails within which AI adoption must be designed to succeed in the Caribbean. Two guardrails matter most, and they correspond to Articles 4 and 5. This article addresses the first: data sovereignty. Article 5 will address the second: the regulatory landscape and where Caribbean supervisors are signalling they will take the oversight of AI over the coming thirty-six months.

I want to be direct about what this article is not. It is not a legal treatise on Caribbean data protection law. It does not try to catalogue every clause of Jamaica’s Data Protection Act 2020, or the comparable frameworks in the Bahamas, Cayman, Barbados, Trinidad and Tobago, or the other territories where our clients operate. An article of that kind would not serve the reader it is written for. It would require two hundred pages, lose its audience, and be outdated by the time a Caribbean supervisor issued its next guidance note.

What this article does instead is give a Caribbean executive the specific frameworks required to reason about data sovereignty in a disciplined way without becoming a lawyer. I will explain the five deployment patterns that actually exist in the AI market today — in terms of where data physically resides and who can access it. I will introduce the D-AGENTICA™ Data Sovereignty Decision Matrix as the named instrument for classifying any workload against those patterns. I will name the four Caribbean regulatory considerations that most commonly shape the calculus. And I will close with what I believe is a defensible posture for Caribbean boards to adopt now, pending the next eighteen months of regulatory clarification.

By the time you finish reading, you will be able to answer your own board’s data sovereignty question on any proposed AI workload — not perfectly, not as a general counsel would answer it, but well enough to distinguish a deployment that should proceed from one that should not, and to do so in language a non-executive director can follow.

The five deployment patterns, in plain language

The single largest source of confusion in Caribbean data sovereignty conversations is that the industry does not have settled plain-language terminology for how AI workloads are deployed. Vendors describe their offerings in proprietary terms that obscure the underlying pattern. Compliance teams read those descriptions and cannot map them to the controls they actually need to assess. I will fix this first, by installing a five-pattern vocabulary that covers the ways AI workloads are actually deployed in 2026.

Each pattern differs on two dimensions that matter: where your data physically resides while the AI is processing it, and who — humans and systems — can access that data. Once you know the five patterns and can tell them apart, every subsequent data sovereignty conversation becomes materially more precise.

Pattern one — public model, public cloud

Your staff member opens ChatGPT, Claude.ai, or Google Gemini from a web browser and types or pastes content into the conversation. The content is sent to the AI vendor’s public cloud infrastructure, typically in the United States or Europe. It may or may not be retained by the vendor for varying periods depending on the product tier. It may or may not be used to train future model versions depending on the terms of service the user has accepted. Most fundamentally, once the content leaves your organisation’s perimeter, you have limited contractual visibility into what happens to it. This is the deployment pattern most Caribbean organisations are already operating at, whether or not their leadership has formally authorised it — which is itself the shadow-AI problem I will return to in a moment.

Pattern two — enterprise tenant, shared cloud

Your organisation subscribes to an enterprise edition of the AI product, such as Microsoft 365 Copilot for Enterprise, Claude for Work, ChatGPT Team or Enterprise, or Gemini for Google Workspace (formerly Duet AI). Your data is processed on the vendor’s cloud but is logically isolated from other customers by contractual and technical controls. The enterprise contract typically includes specific commitments: your data is not used to train models, retention is configured to your requirements, audit logs are available. The physical location of processing is still the vendor’s public cloud, but the contractual posture is meaningfully stronger. This is where most Caribbean enterprise AI adoption should be, as a minimum, once it moves beyond individual experimentation.

Pattern three — dedicated enterprise tenant

A variant of Pattern 2 in which your organisation is assigned a dedicated compute environment within the vendor’s infrastructure. The data processing happens on hardware that is not shared with other customers at the processing layer, though the underlying physical infrastructure may still be the vendor’s. This is typically available at higher subscription tiers and is commonly purchased by regulated financial services institutions. The practical difference from Pattern 2 is narrower than the marketing suggests, but the posture is incrementally stronger and the audit trail is cleaner.

Pattern four — private deployment, regional cloud

The AI model is deployed within a cloud region that your organisation has specifically selected, for example a Microsoft Azure region in Canada or the United Kingdom, an AWS region in São Paulo, or a Google Cloud region in Montreal. The data never leaves that region for processing. The vendor runs the model on your behalf in that specific geographic footprint, under specific contractual terms about data handling. This pattern matters in the Caribbean context because some territories, or some regulated workloads, prefer or require that customer data remain in specific jurisdictions. Caribbean organisations are increasingly selecting North American or European cloud regions for AI workloads when data sovereignty concerns are material, in the absence of commercial cloud infrastructure physically located in most Caribbean territories.

Pattern five — fully on-premises

The AI model runs on hardware physically located in your own data centre or a co-located facility under your direct control. Open-source models (Meta’s Llama family, Mistral, or smaller specialised models) can be deployed this way. The data never leaves your organisation’s direct control. This is the highest-assurance pattern and also the most operationally demanding. It requires your organisation to run the AI infrastructure itself, including model updates, security patching, and performance management. For most Caribbean organisations, this pattern is appropriate only for specific highly sensitive workloads where the operational cost is justified by the sensitivity. Even then, frontier-grade models such as those from Anthropic, OpenAI, or Google typically are not available in this deployment pattern, so the organisation accepts a capability trade-off in exchange for the sovereignty assurance.

| These are the five patterns. Every AI deployment in your organisation fits one of them. The question is whether the match between your data’s sensitivity and the chosen pattern is appropriate. |

Three observations about this pattern taxonomy matter for Caribbean organisations specifically.

First, most Caribbean organisations currently operate at Pattern 1 without formal authorisation. Staff use ChatGPT, Claude.ai, or other consumer AI products for work tasks, typing organisational data into those products under consumer terms of service and outside any organisational control. This is the shadow-AI problem Article 1 named in the Three-Question Board Diagnostic. In my experience, this is a larger exposure for most Caribbean organisations than any specific AI deployment the board has formally authorised, because the unauthorised use is invisible to compliance and its scale is almost always larger than leadership assumes.

Second, the step from Pattern 1 to Pattern 2 is the single highest-leverage data sovereignty improvement most Caribbean organisations can make, and it is not expensive. Moving staff from consumer ChatGPT accounts to ChatGPT Team or from personal Claude accounts to Claude for Work typically costs US$25 to US$30 per user per month. For a fifty-person organisation that is approximately US$15,000 to US$18,000 per year. The data sovereignty improvement is substantial: contractual commitments not to train on customer data, enterprise audit logs, administrative controls. No other line item of that size in the average Caribbean organisation’s technology budget produces a comparable improvement in the governance posture.

Third, Patterns 4 and 5 are frequently over-prescribed in Caribbean data sovereignty conversations. In my experience, many Caribbean organisations conclude prematurely that they need on-premises deployment for AI when the actual sensitivity of the workloads they are considering would be appropriately served by Pattern 2 or Pattern 3. The over-prescription is expensive: Pattern 5 deployments cost materially more, require materially more operational capability, and often deliver a narrower capability envelope because the frontier models are not available in that pattern. A Caribbean organisation that chooses Pattern 5 for workloads that would be appropriately served by Pattern 2 has not improved its data sovereignty posture by any meaningful measure; it has simply paid more money to feel more protected.
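The cost figure in the second observation can be checked with a few lines of arithmetic. This is a minimal sketch: the per-user prices and the fifty-person headcount come from the text above, the function name is illustrative, and actual vendor pricing will vary by plan and billing term.

```python
def annual_licence_cost(users: int, price_per_user_per_month: float) -> float:
    """Annual cost in US$ of moving `users` staff onto an enterprise AI plan."""
    return users * price_per_user_per_month * 12


# Fifty staff at the low and high ends of the quoted price range.
low = annual_licence_cost(50, 25)
high = annual_licence_cost(50, 30)
print(f"US${low:,.0f} to US${high:,.0f} per year")
# prints: US$15,000 to US$18,000 per year
```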

| WHAT THE CARIBBEAN REGULATORY CONVERSATION ACTUALLY SAYS

In the supervisory conversations we participate in privately with Caribbean central banks and financial services commissions, the pattern I observe most consistently is this: Caribbean supervisors are not currently requiring Pattern 4 or Pattern 5 deployments for AI workloads, and are not signalling an intention to do so for most use cases in the near term. What they are signalling is a clear expectation that regulated institutions know which pattern they are operating in, can articulate why that pattern is appropriate for the data sensitivity involved, and can demonstrate appropriate contractual and technical controls. The supervisory question is almost always, in substance, ‘have you thought about this properly’ — not ‘have you deployed on-premises’. Caribbean organisations that can answer the first question defensibly will meet supervisory expectations for the foreseeable future. Organisations that cannot will face supervisory pressure regardless of which pattern they have chosen. |

A matrix for classifying any proposed workload

The pattern taxonomy is the vocabulary. The matrix is the tool. The D-AGENTICA™ Data Sovereignty Decision Matrix is built specifically to let a Caribbean executive, without legal training, classify any proposed AI workload against three sensitivity tiers and five deployment patterns — and see, at a glance, which combinations are appropriate, which require specific controls, and which should not proceed.

The sensitivity tiers are deliberately simple. Low sensitivity covers data that would be uncontroversial if it became public — marketing copy, internal drafts, public documents. Medium sensitivity covers business data that is not regulated but that the organisation would prefer to keep confidential — operational reports, internal correspondence, non-regulated business records. High sensitivity covers data that is either regulated or materially confidential — customer financial information, employee records, strategic plans, legally privileged communications. Most workloads fall clearly into one of these tiers once the classification is performed with discipline; the cases that are ambiguous are themselves a signal that the workload needs more careful thought before deployment.
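One way to make the three tiers operational is a simple lookup from data category to tier. The sketch below is illustrative only. The category names paraphrase the examples in the paragraph above, the `classify` helper is hypothetical, and two behaviours are my assumptions rather than anything the matrix prescribes: a workload takes the highest tier of any data it touches, and an unlisted category is returned as unclassified so that the ambiguous case is escalated rather than guessed.

```python
from enum import Enum
from typing import Optional


class Sensitivity(Enum):
    LOW = "low"        # uncontroversial if it became public
    MEDIUM = "medium"  # unregulated, but preferably kept confidential
    HIGH = "high"      # regulated or materially confidential


# Example categories paraphrased from the tier descriptions in the text.
TIER_BY_CATEGORY = {
    "marketing copy": Sensitivity.LOW,
    "internal draft": Sensitivity.LOW,
    "public document": Sensitivity.LOW,
    "operational report": Sensitivity.MEDIUM,
    "internal correspondence": Sensitivity.MEDIUM,
    "non-regulated business record": Sensitivity.MEDIUM,
    "customer financial information": Sensitivity.HIGH,
    "employee record": Sensitivity.HIGH,
    "strategic plan": Sensitivity.HIGH,
    "legally privileged communication": Sensitivity.HIGH,
}

_ORDER = [Sensitivity.LOW, Sensitivity.MEDIUM, Sensitivity.HIGH]


def classify(categories: list[str]) -> Optional[Sensitivity]:
    """Return the highest tier among the categories, or None if any is unknown."""
    tiers = []
    for category in categories:
        tier = TIER_BY_CATEGORY.get(category.lower())
        if tier is None:
            return None  # ambiguous: needs human review before deployment
        tiers.append(tier)
    # Assumption: the most sensitive data in the workload sets its tier.
    return max(tiers, key=_ORDER.index) if tiers else None


print(classify(["marketing copy", "employee record"]))  # Sensitivity.HIGH
print(classify(["supplier pricing sheet"]))             # None
```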

Three principles for applying the matrix are worth naming directly.

Classify the data first, not the tool

The most common error I observe is organisations classifying the AI product and matching data to it, rather than classifying the data and matching the AI product to it. An organisation that says ‘we use Microsoft 365 Copilot so we are covered for all AI workloads’ has skipped the classification step. Copilot is Pattern 2 or Pattern 3 depending on configuration, which is appropriate for most workloads but not all. A high-sensitivity workload processed through Pattern 2 without specific additional controls is still a high-sensitivity workload being processed through Pattern 2. A deployment pattern does not become more protective because sensitive data is being put through it. Classify the data first.

The vendor’s classification is not your classification

Every vendor will describe their product as suitable for enterprise use. Most vendors will claim their product meets the security and compliance requirements of regulated industries. These claims are usually accurate as far as they go — but they do not relieve your organisation of the obligation to do your own classification against your own specific data and your own specific regulatory environment. A vendor cannot know, from the outside, whether your customer financial data is appropriately processed on their infrastructure; only you can know that. Treat vendor claims as evidence, not as conclusions.

Amber is the most important colour

Green cells are easy — proceed. Red cells are easy — do not proceed. Amber cells are where judgment is required, and amber cells are where most Caribbean AI decisions actually live. An amber cell means the deployment can proceed, but only with specific contractual and technical controls. Caribbean organisations that treat amber as green — that proceed without the specific controls named — are accepting exposure that they may not have explicitly recognised. Caribbean organisations that treat amber as red — that refuse to proceed at all rather than negotiate the required controls — are forgoing value they could have captured. The discipline of amber is reading the cell carefully, identifying what specific controls are required, obtaining them in writing from the vendor, and proceeding only when they are in place. This is the specific work that separates a well-run AI governance programme from a theatrical one.
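The amber discipline described above reduces to a small decision rule, sketched here under stated assumptions. The `Rating` enum and `may_proceed` function are hypothetical names, and the control labels in the example are illustrative; in practice the required controls come from the matrix cell and the vendor agreement.

```python
from enum import Enum


class Rating(Enum):
    GREEN = "green"
    AMBER = "amber"
    RED = "red"


def may_proceed(rating: Rating, required_controls: set[str],
                controls_in_writing: set[str]) -> bool:
    """Apply the green / amber / red discipline to a single matrix cell."""
    if rating is Rating.GREEN:
        return True
    if rating is Rating.RED:
        return False
    # Amber with no named controls would silently be treated as green.
    if not required_controls:
        raise ValueError("an amber cell must name its required controls")
    # Amber: proceed only when every required control is confirmed in writing.
    return required_controls <= controls_in_writing


# Illustrative control labels, not a definitive list.
required = {"no training on customer data", "audit logs available"}
print(may_proceed(Rating.AMBER, required, {"audit logs available"}))  # False
print(may_proceed(Rating.AMBER, required, required))                  # True
```

Raising an error on an amber cell with no named controls is a deliberate design choice in this sketch: it makes the "amber treated as green" failure visible instead of letting it pass quietly.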

| A CARIBBEAN CLASSIFICATION PATTERN WE OBSERVE REPEATEDLY

Across eleven Caribbean financial services AI readiness reviews we have conducted in the past eighteen months, the single most common data sovereignty issue was not that organisations had selected inappropriate deployment patterns for their sensitive workloads. The most common issue was that organisations had not classified their data at all — neither the workloads already in production nor the workloads being considered. The deployment pattern was being chosen first, and the data sensitivity was being retrofitted to fit. Once we applied the matrix in its proper order — sensitivity first, pattern second — roughly a third of the existing deployments were confirmed as appropriate, roughly half were confirmed as appropriate but with specific controls that needed to be added, and the remainder required either scope change or migration to a different pattern. Not one of the eleven institutions had previously performed this classification with discipline. All eleven considered the exercise, in their post-review feedback, to have been materially valuable. |

Four Caribbean regulatory considerations that shape the calculus

The pattern taxonomy and the matrix work in any jurisdiction. The Caribbean context, however, adds four specific regulatory considerations that Caribbean organisations need to factor into their decisions. I want to name them directly so that a Caribbean executive can apply them consistently when the matrix is used.

Consideration one — the data protection frameworks are active

Jamaica’s Data Protection Act 2020 is now substantively in force. The Bahamas Data Protection Act 2003, as amended, is actively enforced by the Office of the Data Protection Commissioner. The Cayman Islands Data Protection Act 2017 has been operational since September 2019. Barbados, Trinidad and Tobago, Belize, and most other Caribbean territories either have data protection frameworks in place or are at advanced stages of implementation. These frameworks share most of their substantive structure with the European GDPR — consent, purpose limitation, data subject rights, cross-border transfer restrictions, breach notification — which means that a Caribbean organisation’s AI deployment must be analysed against specific domestic obligations, not against a general expectation of best practice. For any AI workload involving personal data of data subjects in a Caribbean jurisdiction, the domestic data protection framework of that jurisdiction is the primary legal anchor, and the AI deployment’s compliance with that framework is non-negotiable.

Consideration two — sectoral supervision overlays the general framework

For regulated sectors, the general data protection framework sits alongside specific supervisory expectations. The Bank of Jamaica and the Financial Services Commission in Jamaica; the Central Bank of Trinidad and Tobago; the Central Bank of Barbados; the Financial Services Authority of Saint Vincent and the Grenadines; the Cayman Islands Monetary Authority — each of these supervisors has issued or is developing guidance on technology risk management, operational resilience, and third-party dependencies that applies to AI deployments. Caribbean financial institutions must treat the domestic data protection law and the sectoral supervisory expectations as a combined framework, not as separate obligations. An AI deployment that satisfies the former but not the latter is not satisfactory; the same is true of the reverse.

Consideration three — cross-border transfer is the specific pressure point

The provisions of Caribbean data protection frameworks that most directly affect AI deployment decisions are those on cross-border data transfer. Data cannot, as a general rule, be transferred out of the jurisdiction to a country that does not provide an ‘adequate’ level of protection without a specific lawful basis: standard contractual clauses, binding corporate rules, adequacy determinations, or specific consent. For AI workloads, this is the single most practically important constraint. Pattern 1 and Pattern 2 deployments almost always involve cross-border transfer, because the vendors’ infrastructure is typically located outside the Caribbean. The cross-border transfer can be lawful, most commonly through appropriately negotiated standard contractual clauses, but it is not automatic, and it must be specifically documented. This is the specific paperwork that distinguishes a Caribbean AI deployment that would survive a regulatory inspection from one that would not.

Consideration four — AI-specific rules are coming

Caribbean supervisors are watching the European AI Act (in force since 2024 with phased implementation continuing through 2027), the emerging United States federal and state frameworks, and the early supervisory signals from the Bank of England, the Federal Reserve, and the Monetary Authority of Singapore. The consensus I observe in the regional regulatory conversations I participate in privately is that meaningful AI-specific supervisory expectations will land in most major Caribbean jurisdictions within thirty-six months, most likely through guidance notes from sectoral supervisors in the first instance rather than through amendments to existing data protection statutes. Caribbean organisations that build governance maturity in advance of these rules will shape them; organisations that wait for them will inherit them. This is the specific dynamic I named in Article 1 as one of the four reasons the Caribbean’s normal lag pattern will not provide cover in this cycle.

| Caribbean supervisors are not requiring on-premises AI. They are requiring that you have thought about this properly and can prove it. |

A defensible posture for Caribbean boards today

Given everything above, what posture should a Caribbean board adopt now, in 2026, pending the next eighteen months of regulatory clarification? I want to propose five positions as a defensible baseline. I call them positions rather than recommendations because they are specifically intended to be decisions a Caribbean board can resolve, not observations leadership can debate indefinitely.

Position one — no Pattern 1 for organisational data

Your organisation should not permit staff to process organisational data through consumer AI products — free ChatGPT, Claude.ai, consumer Gemini, or equivalent — regardless of the data’s sensitivity. The exposure from unauthorised Pattern 1 use is almost always larger than leadership assumes, and the remediation is inexpensive: provide enterprise AI tools (Pattern 2) to the staff who need them, and communicate clearly that organisational data processing must occur within those tools. This single change closes most of the data sovereignty gap in most Caribbean organisations.

Position two — all AI tools in use are inventoried and sanctioned

Your organisation should maintain a written inventory of every AI tool in use, the pattern under which it operates, the data sensitivity permitted in each, and the contractual and technical controls in place. This inventory should be reviewed by the audit committee at least annually and refreshed whenever a new AI tool is introduced. The existence of this inventory is itself the single most persuasive answer a Caribbean organisation can give a supervisor who asks about AI governance. Its absence is the single most damaging signal the same organisation can give.
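The inventory described above can be as simple as one record per tool. The following is a minimal sketch, not a prescribed format: the field names, the `overdue_for_review` helper, and the 365-day default are assumptions chosen to mirror the four items listed in the paragraph and the annual review cycle, and the tool names are placeholders.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta
from typing import Optional


@dataclass
class AIToolRecord:
    tool: str                    # which AI tool is in use
    pattern: int                 # deployment pattern, 1 to 5
    permitted_sensitivity: str   # "low", "medium" or "high"
    controls: list[str] = field(default_factory=list)
    last_reviewed: Optional[date] = None


def overdue_for_review(inventory: list[AIToolRecord], today: date,
                       max_age_days: int = 365) -> list[str]:
    """Tools never reviewed, or last reviewed more than max_age_days ago."""
    cutoff = today - timedelta(days=max_age_days)
    return [r.tool for r in inventory
            if r.last_reviewed is None or r.last_reviewed < cutoff]


# Placeholder entries for illustration only.
inventory = [
    AIToolRecord("Enterprise assistant", 2, "medium",
                 ["no training on customer data", "audit logs"],
                 date(2025, 1, 10)),
    AIToolRecord("Consumer chatbot (unsanctioned)", 1, "low"),
    AIToolRecord("Document summariser", 2, "high",
                 ["standard contractual clauses"], date(2026, 1, 5)),
]
print(overdue_for_review(inventory, today=date(2026, 3, 1)))
# prints: ['Enterprise assistant', 'Consumer chatbot (unsanctioned)']
```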

Position three — the matrix is applied for every new workload

Before any new AI workload is authorised, the D-AGENTICA™ Data Sovereignty Decision Matrix is applied by a named individual, the classification is documented, the deployment pattern is matched, any amber-cell controls are obtained in writing from the vendor, and the analysis is retained in the procurement file. This is not onerous — in most cases it is a one-page document — and it is the specific paperwork that will most usefully support any future regulatory inspection.

Position four — cross-border transfer is specifically documented

For any AI workload involving personal data transferred outside the Caribbean jurisdiction where the data subject resides, the lawful basis for that transfer is documented in writing — typically standard contractual clauses incorporated into the vendor agreement. Your general counsel or external legal adviser reviews these clauses before contract signature, not afterwards. This is the single most commonly missed element in Caribbean AI deployments and the single most specific regulatory exposure your organisation is likely to face.
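The documentation check in this position can be expressed as a short rule. The sketch below is a simplification under stated assumptions: the `Workload` fields and the `transfer_gap` helper are hypothetical, the list of lawful bases is taken from the cross-border discussion earlier in this article, and whether a given basis is actually valid under a specific statute remains a question for legal review.

```python
from dataclasses import dataclass
from typing import Optional

# Lawful bases named in this article's discussion of cross-border transfer.
LAWFUL_BASES = {
    "standard contractual clauses",
    "binding corporate rules",
    "adequacy determination",
    "specific consent",
}


@dataclass
class Workload:
    name: str
    involves_personal_data: bool
    data_subject_jurisdiction: str   # e.g. "Jamaica"
    processing_jurisdiction: str     # where the vendor processes the data
    documented_basis: Optional[str] = None


def transfer_gap(w: Workload) -> Optional[str]:
    """Describe the documentation gap for a workload, or None if there is none."""
    if not w.involves_personal_data:
        return None
    if w.processing_jurisdiction == w.data_subject_jurisdiction:
        return None  # no cross-border transfer takes place
    if w.documented_basis in LAWFUL_BASES:
        return None
    return (f"{w.name}: no documented lawful basis for transfer "
            f"to {w.processing_jurisdiction}")


pilot = Workload("Customer service pilot", True, "Jamaica", "United States")
print(transfer_gap(pilot))
# prints: Customer service pilot: no documented lawful basis for transfer to United States
```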

Position five — the board reviews AI governance at least annually

Your organisation’s AI governance posture — the inventory, the classification practice, the controls in place — is a standing agenda item for the full board at least once a year, and for the audit committee at least semi-annually. This is not governance theatre. It is the specific mechanism through which your board discharges its fiduciary responsibility for AI adoption, and it is the mechanism that will be visible to a supervisor conducting an AI-specific review.

These five positions, adopted as a set, produce a Caribbean organisation whose AI governance posture will meet the supervisory expectations that are currently signalled in our region and the ones that are likely to emerge over the coming thirty-six months. They do not require your organisation to operate at Pattern 5. They do not require your organisation to refuse AI deployment until regulatory clarity emerges. They require your organisation to think about this properly, to document the thinking, and to revisit the thinking periodically. That is all supervisory expectations currently require, and it is all they are likely to require for the foreseeable future.

What this article has established, and what comes next

This article has done four things. It has installed a five-pattern taxonomy for how AI workloads are actually deployed, in plain language that a Caribbean executive can use. It has introduced the D-AGENTICA™ Data Sovereignty Decision Matrix as the specific tool for classifying any workload against data sensitivity and deployment pattern simultaneously. It has named the four Caribbean regulatory considerations that shape the calculus for organisations operating in our region. And it has proposed five defensible positions for Caribbean boards to adopt now, pending the next eighteen months of regulatory evolution.

Taken together with the instruments from the first three articles — the Three-Question Board Diagnostic, the Agentic Vendor Assessment, and the SME AI Sequencing Framework — the Data Sovereignty Decision Matrix introduced here gives a Caribbean organisation four proprietary diagnostic tools. The first measures where the organisation stands. The second distinguishes genuine capability from marketing. The third sequences the spend. The fourth classifies the workload. An organisation that applies all four disciplines in their proper place will get more right than it gets wrong on AI over the next twelve months, and will get it right in a way that stands up to supervisory scrutiny.

Next week’s article, Article 5, completes the guardrails conversation. Data sovereignty is the first guardrail; the regulatory landscape is the second. Article 5 will examine where Caribbean supervisors are signalling they will take AI oversight over the coming thirty-six months — what guidance notes are being drafted, which sectors will be addressed first, how the emerging rules will differ from the European AI Act, and what specifically a Caribbean organisation should be doing now to shape those rules rather than inherit them. The named instrument in that article will be the D-AGENTICA™ Caribbean Regulatory Readiness Self-Assessment — a ten-question diagnostic your audit committee can use to assess whether your organisation is positioned to meet the supervisory posture that is coming.

One reflection to carry into your next executive conversation. The Caribbean organisations that will win the data sovereignty conversation with their supervisors are not the organisations that have built the most technically sophisticated deployments. They are the organisations that can answer, clearly and quickly, five simple questions: what AI tools do we use, what data goes into each, under which deployment pattern, with which controls, and when was this last reviewed. If your organisation can answer those five questions today, you are in better shape than most of your peers. If you cannot, the matrix in this article gives you the scaffolding to be ready before you next need to answer them.

| FOR THE BOARD AGENDA

This article has specified a defensible posture for Caribbean data sovereignty on AI deployments. A board chair or audit committee chair reading this article has earned the right to ask their leadership team one specific question and to propose one specific decision that will materially improve the organisation’s governance posture over the next quarter.

THE QUESTION

Can management produce, within thirty days, a complete inventory of every AI tool currently in use in the organisation, the deployment pattern under which each operates, the data sensitivity permitted in each, and the contractual and technical controls in place, and can we demonstrate that the D-AGENTICA™ Data Sovereignty Decision Matrix has been formally applied to each?

THE DECISION

That the organisation will adopt the five defensible positions set out in this article as its baseline AI data sovereignty governance framework, will complete the AI tool inventory within thirty days, and will present the inventory together with the matrix classifications to the audit committee at its next regularly scheduled meeting. |

| THE CARIBBEAN AI ADOPTION IMPERATIVE

A 12-Article Series from Dawgen Global

NEXT IN THIS SERIES

Article 05: The Caribbean Regulatory Landscape. What the next thirty-six months of AI supervision will look like in our region. |
