Dawgen TRUST™ Framework

Across the Caribbean, organisations are adopting AI faster than their governance models are evolving. Customer service chatbots, credit and fraud analytics, HR screening tools, marketing personalisation engines, and automated decision support are increasingly embedded into daily operations—often through third-party vendors and “AI features” inside existing software. This is creating a new category of enterprise risk: algorithmic risk.

AI risk is not just about cyber threats or privacy. It includes bias and unfair outcomes, opaque decision-making, regulatory exposure, model drift, vendor accountability gaps, and reputation damage—all of which can move quickly in small markets where trust and brand credibility are fragile.

The central message of this article is simple: Trust is now an economic asset. Organisations that can prove their AI is fair, secure, compliant, and defensible will win customers, attract partners, and secure financing faster than those who cannot. The good news is that AI governance does not have to be heavy or bureaucratic. With the right framework, organisations can innovate quickly while remaining audit-ready.

In this first article of our Dawgen TRUST™ series, we introduce a practical Caribbean-ready approach to AI governance and assurance. We outline the risks, the board-level questions that now matter, and the actionable steps organisations can take to move from ad-hoc AI adoption to trusted, controlled, and scalable AI.

1) AI Has Arrived in the Caribbean — Often Quietly

For many Caribbean organisations, AI adoption is not a single “AI project.” It happens quietly and gradually:

- A bank uses machine learning to flag suspicious transactions.
- An insurance company uses predictive models to assess risk and triage claims.
- A telecom uses AI to predict churn and optimise promotions.
- A retailer adopts AI-driven customer targeting and pricing recommendations.
- A government agency deploys a chatbot for citizen enquiries.
- A mid-market company rolls out an HR tool that ranks CVs automatically.

In many cases, the organisation did not “build AI.” It purchased it—through vendors, SaaS platforms, or cloud services. Yet the moment AI influences customer outcomes, financial outcomes, or employment outcomes, governance becomes unavoidable.

The shift is not just technological; it is also a governance shift:

When AI influences decisions, the organisation must be able to explain, justify, and evidence those decisions.

That expectation is becoming the norm in global markets, and the Caribbean is not exempt.

2) Why Trust Is Now the Currency of Growth

In small markets, the value of trust is amplified. A failure spreads quickly and can linger for years.

Trust is not only “reputation.” It has measurable business consequences:

Trust affects customer behaviour

If customers believe decisions are unfair, intrusive, or inconsistent, they disengage—especially in sectors where trust is already fragile.

Trust affects regulatory posture

Regulators increasingly care about consumer fairness, transparency, and operational resilience, even when AI is not explicitly regulated.

Trust affects partnerships and ecosystems

International banks, insurers, payment networks, and multinational partners increasingly demand evidence that systems and controls are robust.

Trust affects funding

Lenders and investors are prioritising risk management maturity. Governance is a proxy for resilience.

So the question leaders must ask is not only “Can we adopt AI?” but:

“Can we adopt AI in a way that strengthens trust rather than weakening it?”

This is the strategic foundation of Dawgen TRUST™.

3) The Real Risk: AI Creates “Algorithmic Exposure”

Most organisations associate AI risk with cyber or privacy. Those are critical—but incomplete. Algorithmic risk is broader.

Here are the most common AI risks that affect Caribbean organisations today:

A) Bias and unfair outcomes

AI systems learn patterns from data. If historical data contains inequality, bias, or uneven representation, AI can reproduce and scale those outcomes.

In small markets, even perceived unfairness can become a public crisis.

B) Opacity and inability to explain decisions

If a customer asks “Why was my credit declined?” or an employee asks “Why was I not shortlisted?” AI-driven processes can be difficult to explain.

If you cannot explain a decision, you cannot defend it.

C) Model drift and degradation over time

AI performance changes as the world changes—new customer behaviour patterns, inflation, crime trends, fraud strategies, or workforce changes. Without monitoring, a model that was “good” at launch can become harmful later.
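Drift monitoring can start with a single number. One widely used measure is the Population Stability Index (PSI), which compares how a model's scores (or an input variable) were distributed at launch with how they are distributed now. The Python sketch below is illustrative only: it assumes both periods have already been bucketed into the same bins, the counts are hypothetical, and the 0.1 / 0.25 alert bands are industry rules of thumb, not regulatory thresholds or a documented part of Dawgen TRUST™.

```python
import math

def population_stability_index(expected_counts, actual_counts, eps=1e-6):
    """PSI between a baseline (expected) and a current (actual) binned distribution.

    Both inputs are lists of counts per bin, built with the same bin edges.
    """
    if len(expected_counts) != len(actual_counts):
        raise ValueError("both distributions must use the same bins")
    exp_total = sum(expected_counts)
    act_total = sum(actual_counts)
    psi = 0.0
    for e, a in zip(expected_counts, actual_counts):
        # Convert counts to proportions; eps avoids log(0) for empty bins.
        e_pct = max(e / exp_total, eps)
        a_pct = max(a / act_total, eps)
        psi += (a_pct - e_pct) * math.log(a_pct / e_pct)
    return psi

def drift_status(psi):
    # Assumed rule-of-thumb bands: <0.1 stable, 0.1 to 0.25 watch, >=0.25 investigate.
    if psi < 0.1:
        return "stable"
    if psi < 0.25:
        return "watch"
    return "investigate"

baseline = [100, 200, 400, 200, 100]   # hypothetical score distribution at launch
this_month = [60, 140, 380, 260, 160]  # hypothetical score distribution today
psi = population_stability_index(baseline, this_month)
print(round(psi, 3), drift_status(psi))
```

Run on a fixed cadence, a rising PSI is a prompt to investigate, not proof that the model is wrong.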

D) Vendor accountability gaps

Many organisations rely on third-party models, yet contracts often lack:

- audit rights
- clear incident reporting timelines
- change control for model updates
- documentation obligations
- performance commitments tied to fairness and accuracy

This is dangerous. If a vendor’s AI causes harm, the customer blames you.

E) Privacy and data misuse risk

AI increases the volume and sensitivity of data processed—sometimes inadvertently. Chatbots can expose confidential data through prompts. Models can “remember” training examples. Systems can leak sensitive insights via outputs.

F) Governance failure (the hidden risk)

The biggest risk is often not the model—it is the absence of decision rights, ownership, controls, and evidence. When governance is unclear, no one is accountable.

4) Why the Caribbean Needs a Regionally Relevant Approach

Global frameworks are useful—but Caribbean realities require nuance.

Smaller data sets

Many AI vendors train models using large-market data. Caribbean customer behaviour, demographics, and patterns may differ. This can introduce accuracy and fairness issues.

Faster reputational impact

Small markets amplify feedback loops. A perceived injustice becomes public faster than in larger jurisdictions.

Multi-territory complexity

Many organisations operate across islands with varying regulatory expectations, infrastructure quality, and legal interpretations.

Lean internal capacity

Most mid-market Caribbean firms do not have model risk teams, AI ethics committees, or large compliance functions. Governance must be practical, not bureaucratic.

This is why Dawgen TRUST™ is designed to be audit-ready, lightweight, and implementable.

5) Introducing the Dawgen TRUST™ Framework

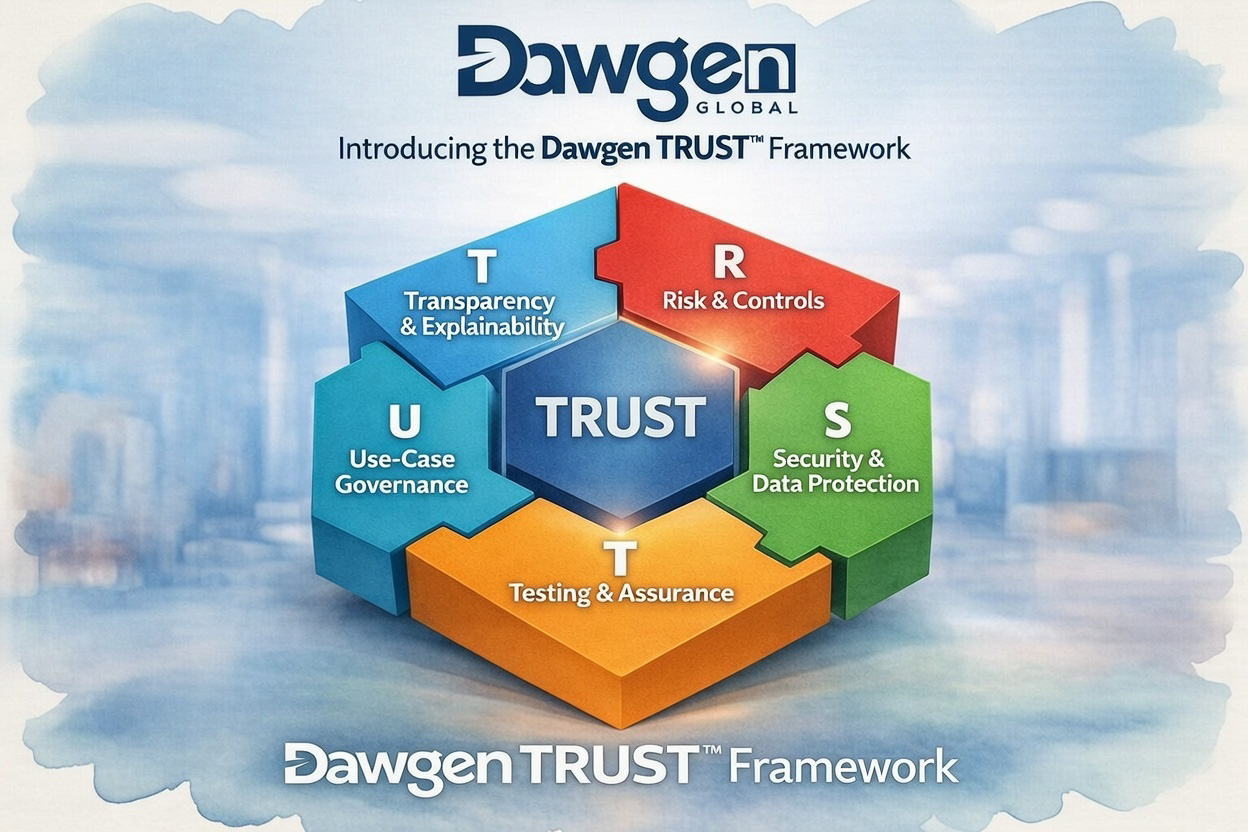

The Dawgen TRUST™ Framework is Dawgen Global’s methodology for building AI confidence and audit readiness. It is structured around five pillars:

T — Transparency & Explainability

Ensuring the organisation can explain:

- what the AI does
- how it is used
- what data it relies on
- what limitations it has
- how humans intervene in decisions

Practical tools:

- decision logs
- explainability requirements for high-impact use cases
- “plain language” decision narratives for customers and staff
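A decision log does not need specialist tooling. As a minimal sketch, assuming a Python environment and a hypothetical record layout (the field names, IDs and model version below are illustrative, not a Dawgen TRUST™ template), each AI-assisted decision can be captured as a structured record that also produces a plain-language narrative:

```python
import json
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone
from typing import List, Optional

@dataclass
class DecisionLogEntry:
    """One AI-assisted decision, recorded so it can be explained and evidenced later."""
    use_case_id: str        # links back to the AI use-case register
    subject_ref: str        # pseudonymised customer/applicant reference, not raw personal data
    model_version: str      # model or vendor release that produced the output
    model_output: str       # e.g. "decline", "refer", "flag"
    key_factors: List[str]  # main reasons, in business language
    human_reviewer: Optional[str] = None  # None if no person reviewed the output
    final_decision: str = ""              # outcome after any human override
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def plain_language_narrative(self) -> str:
        """Short explanation suitable for a customer or staff member."""
        reasons = "; ".join(self.key_factors) or "no factors recorded"
        reviewed = (f"reviewed by {self.human_reviewer}" if self.human_reviewer
                    else "not reviewed by a person")
        outcome = self.final_decision or self.model_output
        return f"Outcome: {outcome}. Main factors: {reasons}. This decision was {reviewed}."

entry = DecisionLogEntry(
    use_case_id="UC-007",
    subject_ref="APP-2291",
    model_version="credit-score-v3.2",
    model_output="refer",
    key_factors=["debt-to-income above policy limit", "short credit history"],
    human_reviewer="credit officer",
    final_decision="approved with reduced limit",
)
print(json.dumps(asdict(entry), indent=2))  # the record that would be written to the log
print(entry.plain_language_narrative())
```

Keeping pseudonymised references in the log, rather than names or account numbers, keeps the evidence reviewable without turning the log itself into a privacy risk.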

R — Risk & Controls

Mapping AI use to enterprise risk and controls:

- model risk assessment
- control design (human-in-the-loop, overrides, escalation)
- risk tiering (high/medium/low impact)
- operational controls for drift and failures

U — Use-Case Governance

Defining decision rights, approvals, and accountability:

- AI use-case register
- governance ownership model (HR/IT/Risk/Legal)
- policy and approval structure
- prohibited use cases and red lines

S — Security & Data Protection

Ensuring AI adoption strengthens—not weakens—security:

- privacy risk assessment
- data handling rules
- access control and monitoring
- vendor and third-party assurance requirements
- cyber alignment for AI systems

T — Testing & Assurance

Proving trust through evidence:

- performance testing
- bias and fairness tests where relevant
- robustness checks
- audit-ready documentation pack
- ongoing monitoring and assurance reviews
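As an example of a fairness test, one simple screening check compares favourable-outcome rates (approvals, shortlists, paid claims) across groups and divides the lowest rate by the highest. The sketch below assumes outcomes are available as (group, outcome) pairs; the data is hypothetical, and the 0.8 threshold is the "four-fifths" heuristic from US employment-selection guidance, used here only as an illustrative trigger for review, not as a legal standard in any Caribbean territory.

```python
from collections import defaultdict

def selection_rates(records):
    """records: iterable of (group, favourable_outcome: bool). Returns the rate per group."""
    totals = defaultdict(int)
    favourable = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        if outcome:
            favourable[group] += 1
    return {g: favourable[g] / totals[g] for g in totals}

def disparate_impact_ratio(records):
    """Lowest group selection rate divided by the highest (1.0 means parity)."""
    rates = selection_rates(records)
    highest = max(rates.values())
    if highest == 0:
        raise ValueError("no favourable outcomes in any group")
    return min(rates.values()) / highest, rates

# Hypothetical shortlisting outcomes from a CV-ranking tool, split by a protected attribute.
outcomes = (
    [("group_a", True)] * 30 + [("group_a", False)] * 70
    + [("group_b", True)] * 18 + [("group_b", False)] * 82
)
ratio, rates = disparate_impact_ratio(outcomes)
print(rates, round(ratio, 2))
if ratio < 0.8:  # assumed "four-fifths" screening threshold
    print("Flag for review: outcome rates differ materially between groups")
```

A low ratio is a signal to investigate the data and the use case, not a finding of discrimination; small Caribbean samples in particular can produce noisy rates.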

The output is not just a report. It is an operating system for trusted AI.

6) What Boards and Executives Must Ask Right Now

Even if your organisation is not “AI-led,” your board should be asking governance questions because AI is already embedded in vendors and tools.

Here are practical questions every board and executive team should ask:

- Where is AI currently used across the organisation?
- Which AI use cases affect people, money, or compliance outcomes?
- Who owns each use case: business, IT, risk, legal?
- Can we explain AI-driven decisions to customers and employees?
- What controls exist to prevent harm and detect drift?
- What evidence can we produce today if a regulator, auditor, or partner asks?
- Do our vendor contracts give us audit rights, change control, and incident visibility?
- What is our incident response plan if AI causes harm or fails?

If leadership cannot answer these questions confidently, governance maturity must become a priority.

7) The “AI Governance Starter Kit” — Practical Actions in 30 Days

AI governance can begin quickly, without slowing innovation. Here is a practical starter plan:

Step 1: Build an AI Use-Case Register

List every AI-enabled tool or system, including vendor tools. Record:

- purpose
- owner
- data used
- decision impact
- vendor (if any)
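A register can begin life as a spreadsheet. For teams that prefer to keep it in code or feed it into other tooling, the sketch below models one register row with the five Step 1 fields plus an ID, and exports the register to CSV for risk, audit, or the board. The class name, IDs and example entries are hypothetical.

```python
import csv
import io
from dataclasses import dataclass, asdict, fields

@dataclass
class AIUseCase:
    """One row of a hypothetical AI use-case register (fields mirror Step 1)."""
    use_case_id: str
    purpose: str
    owner: str
    data_used: str
    decision_impact: str
    vendor: str = ""  # blank if built in-house

register = [
    AIUseCase("UC-001", "Flag suspicious card transactions", "Head of Fraud",
              "transaction history", "blocks or holds payments", "Vendor X fraud module"),
    AIUseCase("UC-002", "Rank incoming CVs", "HR Manager",
              "CVs, application forms", "determines who is shortlisted", "SaaS HR platform"),
    AIUseCase("UC-003", "Summarise internal meeting notes", "COO office",
              "internal documents", "none (productivity only)"),
]

def register_to_csv(rows):
    """Serialise the register to CSV text, one row per use case."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=[f.name for f in fields(AIUseCase)])
    writer.writeheader()
    for row in rows:
        writer.writerow(asdict(row))
    return buf.getvalue()

print(register_to_csv(register))
```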

Step 2: Tier Your AI Use Cases

Classify use cases by impact:

- Tier 1 (High impact): affects employment, credit, claims, compliance, or customer outcomes at scale
- Tier 2 (Medium impact): supports decisions with human review
- Tier 3 (Low impact): productivity support, internal automation
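Writing the tiering rule down explicitly helps it get applied consistently. The function below is a minimal sketch, not the framework's official logic: the domain list mirrors the Tier 1 examples above, and treating decision-support tools that lack human review as Tier 1 is an added, cautious assumption.

```python
# Tier 1 example domains taken from Step 2.
HIGH_IMPACT_DOMAINS = {"employment", "credit", "claims", "compliance"}

def assign_tier(domains, large_customer_impact, supports_decisions, human_review):
    """Return 1 (high), 2 (medium) or 3 (low) impact for an AI use case."""
    if HIGH_IMPACT_DOMAINS & set(domains) or large_customer_impact:
        return 1  # affects employment, credit, claims, compliance, or many customers
    if supports_decisions:
        # Assumption: decision support with no human review is escalated to Tier 1.
        return 2 if human_review else 1
    return 3  # productivity support or internal automation

print(assign_tier({"credit"}, False, True, True))
print(assign_tier({"marketing"}, False, True, True))
print(assign_tier({"document_search"}, False, False, False))
```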

Step 3: Define Minimum Controls by Tier

Tier 1 systems require:

- explainability standards
- fairness checks where relevant
- human override mechanisms
- monitoring and drift detection
- documentation for audit-readiness
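Once tiers are assigned, a simple gap check shows which minimum controls are still missing for each use case. In this sketch the Tier 1 control set mirrors the list above; the Tier 2 and Tier 3 sets are illustrative assumptions, since the starter kit specifies only Tier 1, and the control identifiers are hypothetical.

```python
# Minimum controls per tier. Tier 1 mirrors Step 3; Tiers 2 and 3 are assumptions.
MINIMUM_CONTROLS = {
    1: {"explainability_standard", "fairness_check", "human_override",
        "drift_monitoring", "audit_documentation"},
    2: {"human_override", "drift_monitoring", "audit_documentation"},
    3: {"audit_documentation"},
}

def control_gaps(tier, controls_in_place):
    """Return the required controls missing for a use case, sorted for readability."""
    required = MINIMUM_CONTROLS[tier]
    return sorted(required - set(controls_in_place))

gaps = control_gaps(1, ["human_override", "audit_documentation"])
print(gaps)
```

Where a fairness check is genuinely not relevant (for example, a Tier 1 system with no people-related outcomes), the exemption itself should be recorded and approved rather than silently dropped.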

Step 4: Establish Governance Roles

Even a lean organisation can assign:

- business owner
- technical owner
- risk/compliance owner
- escalation authority

Step 5: Create an Evidence Pack

Collect and standardise:

- policy statements
- approvals
- vendor documentation
- logs
- test outcomes
- monitoring cadence

This is what makes governance real: evidence.

8) Moving Forward: The Dawgen Global Advantage

AI governance is not a barrier to innovation. It is the platform that makes innovation sustainable.

Dawgen Global’s advantage is that we combine:

- assurance discipline
- risk and compliance rigour
- practical implementation capability
- Caribbean operational knowledge
- borderless delivery to maintain speed and depth

Through the Dawgen TRUST™ Framework, we help Caribbean organisations deploy AI that is:

- trusted by customers
- defensible to regulators and auditors
- secure and privacy-aligned
- fair and reputation-safe
- ready to scale without fear

Next Step: Request a Proposal

If your organisation is deploying AI—or planning to—now is the time to ensure it is governed, controlled, and audit-ready.

To request a proposal for Dawgen Global AI Trust, Governance & Assurance Services:

📩 Email: [email protected]

💬 WhatsApp Global: 15557959071

Please include your industry, territory, and the key AI use case you are implementing (HR, credit, fraud, chatbots, analytics, compliance monitoring). We will respond with a structured approach, deliverables, and timeline aligned to your risk exposure and business goals.

Dawgen Global is one of the top accounting and advisory firms in Jamaica and the Caribbean, offering integrated multidisciplinary services in audit, tax, advisory, risk assurance, cybersecurity, and digital transformation. Through our borderless, high-quality delivery methodology, we help organisations adopt AI responsibly—by embedding governance, controls, and audit-ready assurance that builds trust and protects long-term value.

Embrace BIG FIRM capabilities without the big firm price at Dawgen Global, your committed partner in carving a pathway to continual progress in the vibrant Caribbean region. Our integrated, multidisciplinary approach is finely tuned to address the unique intricacies and lucrative prospects that the region has to offer. Offering a rich array of services, including audit, accounting, tax, IT, HR, risk management, and more, we facilitate smarter and more effective decisions that set the stage for unprecedented triumphs. Let’s collaborate and craft a future where every decision is a stepping stone to greater success. Reach out to explore a partnership that promises not just growth but a future beaming with opportunities and achievements.

Join hands with Dawgen Global. Together, let’s venture into a future brimming with opportunities and achievements.