By Dawgen Global — Borderless advisory and assurance for a world that runs on data and AI.

Trust is the real platform for AI. Without it, pilots stall, regulators circle, and customers hesitate. With it, organizations scale AI confidently—moving from experimental wins to durable advantage. DART™ (Dawgen AI Risk & Trust) is our branded control framework that translates abstract “AI governance” into everyday, auditable practice. It turns values into verifiable controls, and controls into measurable value.

This article unpacks DART™ in full: the seven pillars, the control objectives and sample controls, the accompanying maturity model, and how DART™ maps to leading reference points (e.g., management-system thinking, risk frameworks, and emerging regulatory expectations). We also provide a 120-day implementation plan, board-ready KPIs/KRIs, and artifacts you can copy today—so your teams can build trust by design, not by chance.

Why another framework?

Most organizations already manage security, privacy, quality, and audit. What’s different with AI is concentration of risk (one model influencing thousands of outcomes), opacity (why did the system do that?), and speed (new features land weekly). DART™ embraces what already works—policy discipline, internal controls, and continuous improvement—and adds what’s uniquely needed for AI: model documentation, evaluation, red-teaming, provenance, and ongoing monitoring.

DART™ is not a theoretical model. It’s a workbench. Every pillar contains clear objectives, concrete controls, and evidence you can show to your board, auditors, customers, and regulators.

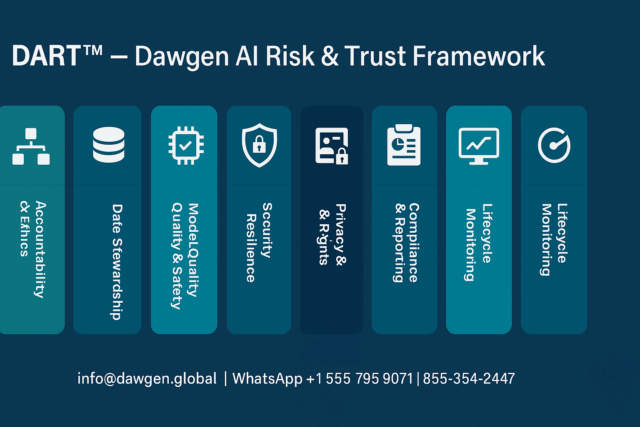

The DART™ pillars (what must be true)

- Accountability & Ethics: Decisions have owners; humans remain meaningfully in control; outcomes align with organizational values and law.
- Data Stewardship: Data is lawful, minimal, traceable, and properly retained/disposed; provenance is known and managed.
- Model Quality & Safety: Models are fit for purpose, robust to abuse, and understandable to decision-makers.
- Security & Resilience: Systems resist prompt injection, poisoning, leakage, and supply-chain compromise; they can fail safe.
- Privacy & Rights: People know when AI is used; affected parties have routes to explanations, corrections, and redress.
- Compliance & Reporting: There is an evidence trail; documentation is complete; roles (developer/deployer/vendor) are clear.
- Lifecycle Monitoring: Outputs are watched for drift, bias, and misuse; incidents are learned from and controls are improved.

Each pillar contains control objectives (what we aim to achieve) and example controls (how we achieve it).

Pillar 1 — Accountability & Ethics

Objectives

- Set tone at the top; define risk appetite for AI.
- Ensure meaningful human oversight where harm is plausible.
- Embed ethics (fairness, non-discrimination, safety) into design and decisions.

Example controls

- GOV-01: Executive sponsor for AI; chartered AI Risk Committee meets monthly with defined quorum.
- GOV-03: Risk tiering policy (Low/Medium/High/Critical) with escalating approval gates.
- GOV-05: Human-in-the-loop (HITL) checkpoints for High/Critical use cases; refusal and escalation paths defined.
- GOV-09: Ethics review checklist for high-impact decisions (e.g., credit, hiring, safety).

Evidence

- Committee minutes; risk appetite statement; tiering matrix; HITL decision logs; ethics review forms.
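The tiering policy (GOV-03) and the HITL rule (GOV-05) can be captured as a small lookup. The following is a minimal sketch, not a DART™ artifact: the four tier names and the High/Critical HITL rule come from the controls above, while the mapping of approvers to tiers and the function names are illustrative assumptions.

```python
from enum import IntEnum


class Tier(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    CRITICAL = 4


# Hypothetical escalating approval gates (assumption: which role signs off
# at which tier is illustrative, not prescribed by the framework).
APPROVAL_GATES = {
    Tier.LOW: ["use-case owner"],
    Tier.MEDIUM: ["use-case owner", "AI Review Desk"],
    Tier.HIGH: ["use-case owner", "AI Review Desk", "AI Risk Committee"],
    Tier.CRITICAL: ["use-case owner", "AI Review Desk", "AI Risk Committee", "executive sponsor"],
}


def required_approvals(tier: Tier) -> list[str]:
    """Return the approvals needed before a use case at this tier can launch."""
    return list(APPROVAL_GATES[tier])


def requires_hitl(tier: Tier) -> bool:
    """GOV-05: human-in-the-loop checkpoints apply to High and Critical uses."""
    return tier >= Tier.HIGH


def missing_approvals(tier: Tier, granted: set[str]) -> list[str]:
    """List the approvals still outstanding, in gate order."""
    return [a for a in required_approvals(tier) if a not in granted]
```

A review desk could call `missing_approvals(Tier.HIGH, {"use-case owner"})` to see which sign-offs still block a launch.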

Pillar 2 — Data Stewardship

Objectives

- Lawful basis and contractual rights for all data used.
- Data minimization and retention aligned to business, legal, and customer commitments.
- Clear provenance for training, fine-tuning, and augmentation data.
- IP hygiene for inputs and outputs.

Example controls

- DATA-02: “Do-not-paste” registry + DLP controls for secrets, PII, and regulated data.
- DATA-04: Provenance and source attestation for training and generated content (incl. watermarking/provenance where feasible).
- DATA-07: Data retention schedules for datasets, prompts, logs, and eval artifacts; automated purges.
- DATA-11: Third-party dataset due diligence (licensing, consent, jurisdiction, sensitivity).

Evidence

- Data maps/lineage; consent/contract references; DLP dashboards; retention configurations; provenance attestations.
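A retention schedule with automated purges (DATA-07) reduces to comparing each artifact's age against a per-type limit. The sketch below assumes hypothetical retention periods and a simple tuple layout for records; actual periods depend on your legal and contractual commitments.

```python
from datetime import date, timedelta

# Hypothetical retention periods in days per artifact type
# (assumption: values are illustrative, not DART™ recommendations).
RETENTION_DAYS = {"dataset": 730, "prompt": 90, "log": 365, "eval_artifact": 365}


def is_due_for_purge(artifact_type: str, created: date, today: date) -> bool:
    """Return True once the artifact is older than its retention period."""
    return today - created > timedelta(days=RETENTION_DAYS[artifact_type])


def purge_candidates(records, today: date) -> list[str]:
    """records: iterable of (record_id, artifact_type, created_date) tuples."""
    return [rid for rid, kind, created in records if is_due_for_purge(kind, created, today)]
```

A scheduled job could feed the returned IDs to whatever deletion mechanism each store provides and keep the list itself as purge evidence.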

Pillar 3 — Model Quality & Safety

Objectives

- Models are validated for purpose; risks and limitations are documented.
- Harmful behavior is minimized through testing, evaluation, and design mitigations.
- Changes are controlled; releases are gated by test outcomes.

Example controls

- MOD-03: Model Cards (purpose, data, metrics, limitations, owners, review dates).
- MOD-06: Evaluation harness with acceptance thresholds for quality, robustness, and safety.
- MOD-08: Bias and fairness tests pre-deployment and on a cadence.
- MOD-10: Adversarial & jailbreak red-teaming before go-live and after material changes.
- MOD-12: Change management (versioning, rollback plans, release approvals).

Evidence

- Model Cards; evaluation reports; bias and robustness test logs; red-team findings and fixes; release records.
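Gating releases on test outcomes (MOD-06, MOD-12) can be sketched as a function that compares evaluation results with acceptance thresholds and reports every failure. The metric names and threshold values below are assumptions chosen for illustration; each use case would define its own.

```python
# Hypothetical acceptance thresholds (assumption: names and values are illustrative).
# "min" metrics must meet or exceed the bound; "max" metrics must not exceed it.
THRESHOLDS = {
    "task_accuracy": ("min", 0.90),
    "robustness_pass_rate": ("min", 0.95),
    "harmful_output_rate": ("max", 0.01),
}


def evaluate_release(results: dict[str, float]) -> tuple[bool, list[str]]:
    """Return (approved, failures); a missing metric counts as a failure."""
    failures = []
    for metric, (kind, bound) in THRESHOLDS.items():
        value = results.get(metric)
        if value is None:
            failures.append(f"{metric}: missing result")
        elif kind == "min" and value < bound:
            failures.append(f"{metric}: {value} < {bound}")
        elif kind == "max" and value > bound:
            failures.append(f"{metric}: {value} > {bound}")
    return (not failures, failures)
```

Treating a missing metric as a failure keeps the gate closed when a test was skipped, which matches the intent that releases are gated by test outcomes rather than by their absence.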

Pillar 4 — Security & Resilience

Objectives

- Protect models, data, prompts, and outputs against misuse and exfiltration.
- Manage supply-chain risk across providers, plugins, and dependencies.
- Operate safely under stress; fail safe, not catastrophically.

Example controls

- SEC-01: Secrets scanning in developer workflows; token vaulting; zero secrets in prompts.
- SEC-06: Prompt/response filtering; egress controls for tokens and PII; domain allow/deny lists.
- SEC-07: Model supply-chain due diligence (dependencies, model weights, plugin permissions).
- SEC-09: Backup/restore for models and configs; kill-switch/rollback rehearsals quarterly.
- SEC-12: Continuous vulnerability management for AI stacks.

Evidence

- Scanner reports; WAF/DLP/prompt-filter logs; dependency SBOMs; rollback rehearsal outputs; incident post-mortems.
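To make "zero secrets in prompts" (SEC-01) and prompt filtering (SEC-06) concrete, here is a deliberately small sketch of a pre-send prompt scan. The three patterns are assumptions chosen for illustration; production filtering would normally sit in existing DLP tooling with much broader coverage.

```python
import re

# Illustrative patterns only (assumption): three examples, not a complete rule set.
PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "email_address": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
}


def scan_prompt(prompt: str) -> list[str]:
    """Return the names of all patterns found in the prompt."""
    return [name for name, pattern in PATTERNS.items() if pattern.search(prompt)]


def redact(prompt: str) -> str:
    """Replace every match with a labeled placeholder before the prompt leaves."""
    for name, pattern in PATTERNS.items():
        prompt = pattern.sub(f"[REDACTED:{name}]", prompt)
    return prompt
```

Whether to block or redact on a hit is a policy choice; logging the pattern name (never the matched value) gives the prompt-filter evidence listed above without creating a second copy of the secret.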

Pillar 5 — Privacy & Rights

Objectives

- Conduct AI Impact Assessments (AIIA/DPIA) where required.
- Provide transparency notices and routes to human escalation/contestability.
- Enable data subject rights (access, correction, erasure) within defined SLAs.

Example controls

- PRV-03: DPIA/AIIA template and register for Medium+ risks.
- PRV-06: Customer-facing transparency notices; model-use disclosures where appropriate.
- PRV-08: Rights handling workflow covering training, inference, and logs.
- PRV-11: Pseudonymization/aggregation where data is not strictly necessary.

Evidence

- DPIA/AIIA records; public notices; rights request logs; engineering notes for pseudonymization.

Pillar 6 — Compliance & Reporting

Objectives

- Maintain an AI Evidence Pack; map controls to external expectations.
- Clarify responsibilities when you are the provider vs. deployer of AI systems.
- Enable assurance (internal/external) without heroic effort.

Example controls

- CMP-02: Evidence Pack index (Asset Register, Model Cards, tests, DPIAs, vendor artifacts, training attestations, logs).
- CMP-03: Responsibility matrix for provider/deployer roles; contractual clauses to align vendors.
- CMP-05: Cross-walks to your chosen external references (e.g., management systems, risk frameworks).
- CMP-08: Quarterly governance report to the board with KRIs/KPIs and incidents.

Evidence

- Evidence repository; cross-walk matrices; contracts/SOWs; board packs.
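One way to keep the Evidence Pack index (CMP-02) honest is to score each use case against the list of expected artifacts. The sketch below takes the artifact categories from CMP-02; the identifier spellings and the equal weighting are assumptions.

```python
# Artifact categories follow the CMP-02 index; equal weighting is an assumption.
REQUIRED_ARTIFACTS = (
    "asset_register_entry",
    "model_card",
    "test_reports",
    "dpia",
    "vendor_artifacts",
    "training_attestations",
    "logs",
)


def completeness(present: set[str]) -> float:
    """Percentage of required artifacts present for one use case."""
    found = sum(1 for a in REQUIRED_ARTIFACTS if a in present)
    return round(100 * found / len(REQUIRED_ARTIFACTS), 1)


def missing(present: set[str]) -> list[str]:
    """Required artifacts not yet in the pack, in index order."""
    return [a for a in REQUIRED_ARTIFACTS if a not in present]
```

A lower-risk use case may legitimately need fewer artifacts, so a tier-specific required list would be a natural refinement.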

Pillar 7 — Lifecycle Monitoring

Objectives

- Detect performance/bias drift, misuse, and security anomalies.
- Respond to incidents quickly; learn and improve.
- Keep models aligned to changing business and regulatory realities.

Example controls

- MON-01: Drift and bias thresholds with alerts and auto-rollback triggers.
- MON-02: Always-on logging for prompts/outputs on sanctioned platforms.
- MON-04: Incident playbooks (data leak, harmful output, IP claim, vendor breach).
- MON-05: Quarterly posture review and training refreshers; trend KPIs analyzed.

Evidence

- Monitoring dashboards; alert tickets; incident logs; quarterly reviews and action trackers.
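MON-01 pairs thresholds with two responses: an alert and an automatic rollback. A minimal sketch of that decision follows, assuming a single quality metric tracked against a fixed baseline and hypothetical threshold values; real monitoring would usually track several metrics, including bias measures.

```python
# Hypothetical thresholds (assumption): tune per use case and risk tier.
ALERT_DROP = 0.03     # alert when the metric falls 3 points below baseline
ROLLBACK_DROP = 0.08  # trigger rollback at an 8-point drop


def drift_action(baseline: float, window: list[float]) -> str:
    """Compare the mean of a recent metric window against the baseline."""
    if not window:
        return "no_data"
    drop = baseline - sum(window) / len(window)
    if drop >= ROLLBACK_DROP:
        return "rollback"
    if drop >= ALERT_DROP:
        return "alert"
    return "ok"
```

Returning `"no_data"` separately from `"ok"` matters: a silent telemetry gap should raise its own ticket rather than pass as healthy.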

The DART™ maturity model (Level 0–5)

- L0 Ad-hoc: No inventory; scattered experimentation; no evidence trail.
- L1 Aware: Interim acceptable use policy (AUP); partial inventory; basic guardrails; little testing.
- L2 Basic: Policy spine in place; risk tiering; Model Cards for key uses; some evals.
- L3 Managed: Red-teaming; DPIAs for Medium+; Evidence Pack; vendor clauses; board reporting.
- L4 Measured: Monitoring telemetry; rollback drills; KPIs/KRIs tracked; periodic internal audit.
- L5 Optimized: Continuous improvement; automation of evals; external assurance/readiness letters; culture embedded.

Use maturity levels per pillar, not only overall. A heat map lets leaders prioritize.
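A per-pillar heat map needs nothing more than a level for each pillar. The sketch below renders a text version and lists the pillars below a target level; the pillar and level names come from this article, while the example scores and the default target of L3 are assumptions.

```python
PILLARS = [
    "Accountability & Ethics",
    "Data Stewardship",
    "Model Quality & Safety",
    "Security & Resilience",
    "Privacy & Rights",
    "Compliance & Reporting",
    "Lifecycle Monitoring",
]
LEVELS = ["Ad-hoc", "Aware", "Basic", "Managed", "Measured", "Optimized"]


def heat_map(scores: dict[str, int]) -> list[str]:
    """Render one text row per pillar, lowest maturity first."""
    rows = []
    for pillar in sorted(PILLARS, key=lambda p: scores[p]):
        level = scores[pillar]
        rows.append(f"{pillar:<24} L{level} {LEVELS[level]:<10} {'#' * level}".rstrip())
    return rows


def priorities(scores: dict[str, int], target: int = 3) -> list[str]:
    """Pillars scoring below the target level (assumed default: L3 Managed)."""
    return [p for p in PILLARS if scores[p] < target]


if __name__ == "__main__":
    # Made-up self-assessment scores for illustration.
    example = dict(zip(PILLARS, [3, 2, 1, 4, 2, 3, 0]))
    print("\n".join(heat_map(example)))
```

Sorting lowest-first puts the weakest pillars at the top of the page, which is where a leadership discussion about priorities usually starts.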

Implementing DART™ in 120 days (four sprints)

Sprint 1 (Days 0–30): Inventory & Guardrails

- AI use census; Asset Register; risk heat map.
- AUP v1; Risk Tiering Guide; AI Review Desk with fast exceptions.
- Basic technical guardrails: DLP, allow/deny lists, prompt logging on sanctioned tools.

Milestones: Asset coverage ≥70%; AUP attestation ≥80%; High/Critical uses behind guardrails.

Sprint 2 (Days 31–60): Documentation & Testing

- Model Cards for 3 priority use cases.
- Evaluation harness and acceptance thresholds; first bias/robustness tests.
- Vendor re-papering wave 1 (top AI suppliers); transparency & IP standards.

Milestones: Eval reports for priority uses; vendor contracts updated/in negotiation.

Sprint 3 (Days 61–90): Assurance & Monitoring

- Red-teaming on priority uses; fixes tracked.
- Evidence Pack assembled; AI Control Dashboard live (coverage, tests, incidents).
- DPIA/AIIA register operational; first quarterly governance report.

Milestones: First board pack; incident drill + rollback rehearsal complete.

Sprint 4 (Days 91–120): Scale & Optimize

- Extend Model Cards and testing to additional uses; automation of evals.
- Internal audit review; cross-walk to external references complete.
- Decide on readiness letter or external certification path.

Milestones: Maturity heat map improved by ≥1 level in 4+ pillars; roadmap approved.

KPIs & KRIs to manage trust like a product

Coverage & discipline

- % AI uses in Asset Register (target: >90% by Day 120)
- % Medium+ uses with Model Cards (target: >85%)
- AUP training/attestation rate (target: >95%)

Testing & release hygiene

- % High/Critical uses with bias/robustness/security tests pre-deployment
- Mean time to remediate Critical findings
- % models with executed red-team in the last quarter

Events & resilience

- AI-related incidents/near misses (volume & severity)
- MTTD/MTTC for AI incidents
- Rollback rehearsal success rate

Compliance & assurance

- Evidence Pack completeness score per use case
- Vendor due-diligence coverage (top N suppliers)
- Audit issues opened/closed; overdue actions

Value realization

- Hours saved or quality uplift per use case
- % AI initiatives meeting or beating benefit forecasts
- Cost avoided from incidents/regulatory findings
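The coverage KPIs with stated targets can be computed directly from counts. In the sketch below, the three targets are the ones given under "Coverage & discipline"; the KPI identifiers and the input layout are assumptions.

```python
# Targets come from the "Coverage & discipline" list; identifiers are assumptions.
TARGETS = {
    "asset_register_coverage": 90.0,
    "model_card_coverage": 85.0,
    "aup_attestation": 95.0,
}


def pct(numerator: int, denominator: int) -> float:
    """Percentage rounded to one decimal; 0.0 when there is nothing to count."""
    return round(100 * numerator / denominator, 1) if denominator else 0.0


def kpi_status(counts: dict[str, tuple[int, int]]) -> dict[str, tuple[float, bool]]:
    """Map each KPI to (value, meets_target); targets are strict '>' as in the text."""
    status = {}
    for name, (done, total) in counts.items():
        value = pct(done, total)
        status[name] = (value, value > TARGETS[name])
    return status
```

Because the targets are written as "greater than", a value that exactly equals the target (for example 85.0% Model Card coverage) is reported as not yet met.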

Artifacts you can copy today

- DART™ Control Library (starter set)
  - 28 controls spanning GOV, DATA, MOD, SEC, PRV, CMP, MON with control owners, frequency, and evidence pointers.
- Model Card template
  - One page + annexes: purpose, data sources, metrics, limits, red-team summary, monitoring plan, owners.
- AI Impact Assessment (AIIA/DPIA)
  - Short form (10 questions) + long form (25 questions) for higher risk.
- Red-team playbook (condensed)
  - Threat categories, test prompts, safe-completion criteria, defect workflow.
- Board pack template
  - One-page narrative + KPI dashboard visuals + decision asks.


How DART™ fits with what you already do

- Risk management & internal control: DART™ plugs into your existing risk and control registers; many controls map 1:1 to existing processes (e.g., change control, vendor risk).
- Security & privacy tooling: Use today’s DLP, SIEM, code scanners, vaults, and identity platforms; DART™ simply tunes them for AI.
- Audit & assurance: The Evidence Pack is formatted so internal audit can test design and operating effectiveness without reinventing methods.
- Regulatory readiness: The provider/deployer matrix, documentation sets, and monitoring expectations align naturally with emerging AI rulebooks, so you can demonstrate diligence even before formal obligations hit.

Common pitfalls DART™ prevents

- Policy without practice: DART™ insists on artifacts (Model Cards, tests, logs) and operating gates, not just intentions.
- “One size fits all” governance: Risk tiering focuses rigor where it matters most.
- Tool-first thinking: Controls and evidence come before shiny platforms; you can automate later.
- Documentation after the fact: Model Cards and test plans start with design, not post-mortems.
- Monitoring gaps: Drift, bias, and misuse are treated as operational signals with thresholds and rollback, not academic concerns.

Case vignette (composite)

A multinational services firm wanted enterprise AI search and drafting, plus analytics copilots for finance. They adopted DART™:

- In 45 days, they stood up an AUP, risk tiering, and sanctioned tools with prompt logging.
- Model Cards and evaluation harnesses were built for two critical use cases; the first red-team exercise uncovered prompt-injection paths and a data-leakage edge case, both fixed pre-launch.
- By Day 120, they had an Evidence Pack, a live AI Control Dashboard, and a board report showing a 30% cycle-time reduction in service responses and zero material incidents.
- Procurement re-papered top suppliers with IP warranties and documentation obligations; legal standardized transparency notices.

Leadership approved expansion and requested an external readiness letter—based on the same DART™ evidence.

Frequently asked questions

Is DART™ only for big enterprises?

No. The controls scale down. A 100-person organization can adopt a streamlined DART™ set in 60–90 days.

Do we need new tools?

Start with what you have—DLP, SIEM, code scanners, identity, ticketing. Add targeted evaluation or red-team tools only where necessary.

Will this slow down innovation?

The opposite—when release criteria are clear and repeatable, launches accelerate and rework declines.

How does DART™ handle general-purpose vs. bespoke models?

Risk tiering applies to both. For general-purpose models, DART™ emphasizes vendor documentation, usage constraints, and monitoring. For bespoke models, it adds deeper evaluation, lineage, and training data controls.

Conclusion: design trust on purpose

Trust is not a press release; it is a system of behaviors. DART™ turns those behaviors into controls and evidence your teams can live with—and your board can rely on. Adopt the pillars, run the sprints, publish the KPIs, and you’ll move from AI experiments to repeatable, auditable value.

Next Step!

At Dawgen Global, we help you make smarter, more effective decisions—borderless and on-demand. If you’re ready to implement DART™ and stand up an AI trust program in 120 days, let’s design your path.

📧 [email protected] · WhatsApp +1 555 795 9071

© Dawgen Global. Dawgen AI Assurance™ and DART™ are trademarks of Dawgen Global.
