Borderless Assurance: Internal Audit That Moves at the Speed of Risk

Powered by Dawgen Global’s IAVANTAGE™ Framework

Executive Summary

- Most organisations say they want “data-driven Internal Audit,” but many struggle to implement analytics in a way that is repeatable, scalable, and trusted. Analytics often becomes a pilot, a one-off dashboard, or a specialist-only capability that never integrates into everyday audit delivery.
- The problem is not a lack of tools. The problem is a lack of audit routines: standard tests, clear data onboarding, exception workflows, governance, and reporting discipline.
- “Analytics that stick” is analytics that behaves like methodology: embedded into planning, fieldwork, and reporting, and supported by clear ownership and quality controls.
- Dawgen Global’s IAVANTAGE™ Framework provides the structure to make analytics durable:
  - Insight turns data into usable intelligence,
  - Assurance Quality ensures repeatability and audit-grade evidence,
  - Technology & Innovation provides the digital layer that scales, and
  - Value Creation proves impact beyond “interesting findings.”
- Dawgen’s Digital, Borderless delivery model accelerates analytics adoption through reusable test libraries, secure evidence workflows, and specialist pods, making this achievable for Caribbean organisations without building large internal analytics teams.

1) Why analytics is now a core internal audit capability—not a “nice to have”

Internal Audit is being asked to do more with less, in a world where risk moves faster and data volumes grow exponentially. Boards expect:

- faster assurance
- broader coverage
- fewer surprises
- clearer insight
- better forecasting of emerging risks

Traditional sampling-heavy audits struggle in this environment. Analytics changes the equation by allowing Internal Audit to:

- test larger portions of transactions (sometimes 100%)
- detect anomalies that sampling misses
- focus fieldwork on exceptions
- identify trends and control deterioration early
- run continuous monitoring in key risk areas

In short, analytics enables Internal Audit to move at the speed of risk.

But many organisations still fail to make analytics stick.

2) Why analytics efforts fail: the “pilot trap”

Across organisations, the same pattern repeats:

- A new analytics tool is acquired (or Excel macros improve).
- A small pilot runs, often led by one person.
- The pilot produces interesting insights.
- The pilot is not repeated because data access, time, and ownership are unclear.
- The capability fades until the next “innovation push.”

This is the pilot trap.

Analytics does not stick because it is treated as:

- a project
- a personal skill
- a one-time report
- a separate “analytics lane”

Instead, analytics must become:

- a routine
- a library of tests
- an audit workflow
- a governed capability

The goal is not “more analytics.” The goal is repeatable audit routines powered by analytics.

3) The “Analytics That Stick” model: five building blocks

To make analytics durable, Internal Audit needs five building blocks.

Building Block 1 — A focused audit analytics strategy (not broad ambition)

Start with 3–5 high-value areas where analytics produces immediate impact, such as:

- Accounts Payable (duplicate payments, vendor anomalies)
- Payroll (ghost employees, overtime anomalies)
- Revenue / billing (credit notes, discounts, overrides)
- Inventory (shrinkage patterns, adjustments)
- Procurement (split purchases, approval bypass patterns)

Analytics sticks when it starts where value is obvious.

Building Block 2 — A data onboarding protocol (the hidden success factor)

Analytics fails when data access is messy. You need a simple protocol:

- who requests data
- from which systems
- in which format
- how often
- data definitions (fields, timestamps, entity codes)
- validation checks (completeness, duplicates, outliers)

If data onboarding isn’t defined, every analytics test becomes “custom work” again.
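The validation checks in the protocol above (completeness, duplicates, outliers) can be sketched in a few lines. This is a minimal illustration, not a prescribed implementation: the field names, the sample rows, and the "ten times the median" outlier rule are all assumptions chosen for the example and should be replaced with your own data definitions.

```python
from statistics import median

# Hypothetical field names for an AP extract; replace with your own data definitions.
REQUIRED_FIELDS = ("invoice_id", "vendor_id", "amount", "posted_at")


def validate_extract(rows, required=REQUIRED_FIELDS, outlier_factor=10.0):
    """Run completeness, duplicate, and outlier checks on a list of row dicts.

    Returns row indexes for each finding so they can be traced back to the extract.
    """
    # Completeness: any required field that is absent or blank.
    missing = [
        i for i, row in enumerate(rows)
        if any(row.get(f) in (None, "") for f in required)
    ]

    # Duplicates: a later row that matches an earlier one on every required field.
    seen, duplicates = set(), []
    for i, row in enumerate(rows):
        key = tuple(row.get(f) for f in required)
        if key in seen:
            duplicates.append(i)
        seen.add(key)

    # Outliers: amounts above outlier_factor times the median (an assumed rule of thumb).
    amounts = [r["amount"] for r in rows if isinstance(r.get("amount"), (int, float))]
    typical = median(amounts) if amounts else 0
    outliers = [
        i for i, row in enumerate(rows)
        if isinstance(row.get("amount"), (int, float))
        and typical > 0
        and row["amount"] > outlier_factor * typical
    ]

    return {"row_count": len(rows), "missing": missing,
            "duplicates": duplicates, "outliers": outliers}


# Illustrative rows only; not real data.
extract = [
    {"invoice_id": "A1", "vendor_id": "V1", "amount": 100.0, "posted_at": "2024-01-05"},
    {"invoice_id": "A1", "vendor_id": "V1", "amount": 100.0, "posted_at": "2024-01-05"},
    {"invoice_id": "A2", "vendor_id": "", "amount": 120.0, "posted_at": "2024-01-06"},
    {"invoice_id": "A3", "vendor_id": "V2", "amount": 50000.0, "posted_at": "2024-01-07"},
]
report = validate_extract(extract)
print(report)
```

Running the same checks on every extract before any test executes is one way to keep data problems from being mistaken for audit exceptions.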

Building Block 3 — A repeatable test library (analytics as methodology)

A test library is a set of standard routines, for example:

- AP: duplicates, split invoices, weekend payments, unusual vendors
- Payroll: bank account duplicates, unusual overtime, rate overrides
- Revenue: refund patterns, credit note spikes, pricing overrides
- Procurement: supplier concentration, PO threshold splitting, approvals
- Inventory: adjustment trends, write-offs, negative stock movements

Each test should have:

- purpose
- required data fields
- steps and logic
- exception thresholds
- evidence outputs
- interpretation guidance

A library makes analytics repeatable, transferable, and scalable.
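One way to picture a library entry is as a small record that carries the attributes listed above alongside the test logic. The sketch below is an assumption-laden illustration: the `AuditTest` structure, the `AP-001` identifier, the field names, and the duplicate-matching rule (same vendor, normalised invoice number, and amount) are hypothetical, not part of IAVANTAGE™ or any specific tool.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class AuditTest:
    """One library entry: documentation and logic travel together."""
    test_id: str
    purpose: str
    required_fields: tuple
    logic: Callable          # function(rows, threshold) -> list of exceptions
    threshold: float         # exception threshold passed to the logic
    guidance: str            # interpretation guidance for reviewers


def duplicate_payments(rows, min_amount):
    """Group payments by vendor, normalised invoice number, and amount."""
    groups = defaultdict(list)
    for row in rows:
        if row["amount"] >= min_amount:
            key = (row["vendor_id"], row["invoice_no"].strip().upper(), row["amount"])
            groups[key].append(row["payment_id"])
    # Any group with more than one payment is a candidate duplicate.
    return [ids for ids in groups.values() if len(ids) > 1]


# Hypothetical library entry for illustration.
AP_DUPLICATES = AuditTest(
    test_id="AP-001",
    purpose="Identify payments that may have been made twice for one invoice.",
    required_fields=("payment_id", "vendor_id", "invoice_no", "amount"),
    logic=duplicate_payments,
    threshold=50.0,
    guidance="Confirm with remittance records before treating as an overpayment.",
)


def run_test(test, rows):
    """Check required fields are present, then apply the test logic."""
    absent = [f for f in test.required_fields if any(f not in r for r in rows)]
    if absent:
        raise ValueError(f"{test.test_id}: missing fields {absent}")
    return test.logic(rows, test.threshold)


# Illustrative rows only; not real data.
payments = [
    {"payment_id": "P1", "vendor_id": "V1", "invoice_no": "inv-001", "amount": 900.0},
    {"payment_id": "P2", "vendor_id": "V1", "invoice_no": "INV-001 ", "amount": 900.0},
    {"payment_id": "P3", "vendor_id": "V2", "invoice_no": "INV-777", "amount": 900.0},
]
exceptions = run_test(AP_DUPLICATES, payments)
print(exceptions)
```

Keeping purpose, fields, threshold, and guidance in the same record as the logic means a new team member can run and interpret the test without relying on the person who first wrote it.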

Building Block 4 — An exception workflow (the bridge from data to action)

Analytics that produces exceptions without a workflow creates frustration.

A clean workflow answers:

- who reviews exceptions first
- which exceptions need investigation
- who investigates (management or audit)
- what evidence closes an exception
- how outcomes are documented
- how repeat exceptions trigger deeper audits

Without an exception workflow, analytics becomes noise.
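The workflow questions above can be made concrete as a small set of statuses with allowed transitions. The following is a sketch under stated assumptions: the four statuses, the transition rules, and the requirement that closure carries an evidence reference are illustrative choices, and the identifiers and file name are hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    NEW = "new"
    TRIAGED = "triaged"              # first review done
    INVESTIGATING = "investigating"  # assigned to management or audit
    CLOSED = "closed"


# Assumed transition rules: triage may close a false positive directly.
ALLOWED = {
    Status.NEW: {Status.TRIAGED},
    Status.TRIAGED: {Status.INVESTIGATING, Status.CLOSED},
    Status.INVESTIGATING: {Status.CLOSED},
    Status.CLOSED: set(),
}


@dataclass
class AuditException:
    exception_id: str
    test_id: str
    status: Status = Status.NEW
    evidence: str = ""
    history: list = field(default_factory=list)

    def move_to(self, new_status, actor, evidence=""):
        """Apply a transition, recording who made it; closure needs evidence."""
        if new_status not in ALLOWED[self.status]:
            raise ValueError(f"{self.status.value} -> {new_status.value} is not allowed")
        if new_status is Status.CLOSED and not evidence:
            raise ValueError("closing an exception requires an evidence reference")
        self.history.append((self.status.value, new_status.value, actor))
        self.status = new_status
        if evidence:
            self.evidence = evidence


# Illustrative walk-through with hypothetical names.
item = AuditException("EX-1", "AP-001")
item.move_to(Status.TRIAGED, actor="senior_auditor")
item.move_to(Status.INVESTIGATING, actor="ap_manager")
item.move_to(Status.CLOSED, actor="ap_manager", evidence="refund-confirmation.pdf")
print(item.status, item.history)
```

The recorded history answers "how outcomes are documented", and counting closed exceptions per test over time is one possible trigger for a deeper audit.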

Building Block 5 — Quality and governance (so results are trusted)

Analytics must be audit-grade:

- version control of scripts
- validation of logic
- review and approval gates
- reproducibility
- documentation of assumptions
- secure handling of sensitive data

If the Board cannot trust the evidence, analytics becomes “interesting,” not “assurance.”
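Reproducibility in particular lends itself to a simple illustration. One possible approach, sketched here as an assumption rather than a described practice, is to record a fingerprint of the script and of the input data with each run, so a reviewer can confirm that a result was produced by the approved logic on the stated extract. The manifest fields and names below are hypothetical.

```python
import hashlib
import json


def fingerprint(data: bytes) -> str:
    """SHA-256 hex digest of the given bytes."""
    return hashlib.sha256(data).hexdigest()


def run_manifest(test_id, script_source, rows, reviewer):
    """Build a record that ties a result to exact script and data versions."""
    # Serialise rows deterministically so identical data gives an identical hash.
    canonical = json.dumps(rows, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return {
        "test_id": test_id,
        "script_sha256": fingerprint(script_source.encode("utf-8")),
        "data_sha256": fingerprint(canonical),
        "row_count": len(rows),
        "reviewed_by": reviewer,
    }


# Illustrative inputs only.
script = "def duplicate_payments(rows, min_amount): ..."
rows = [{"payment_id": "P1", "amount": 900.0}, {"payment_id": "P2", "amount": 900.0}]
manifest = run_manifest("AP-001", script, rows, reviewer="audit_manager")
print(manifest)
```

If either the script or the data changes, the corresponding hash changes, which gives review and approval gates something concrete to check.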

4) How IAVANTAGE™ makes analytics stick (pillar-by-pillar)

Insight: turn data into intelligence

IAVANTAGE™ treats analytics as an insight engine, not a reporting feature. The goal is to convert raw data into:

- risk signals
- root-cause hypotheses
- control effectiveness indications
- targeted assurance priorities

Assurance Quality: make analytics reproducible

Analytics becomes durable when it is embedded into methodology:

- standard workpapers and evidence
- testing scripts reviewed like audit programs
- audit trail preserved for governance and regulators

Technology & Innovation: the digital layer that scales

The IAVANTAGE™ Digital Layer is the operating backbone:

- audit management workflow
- secure evidence portal
- analytics scripts/test library
- dashboards and reporting packs
- continuous monitoring candidates

Alignment: test what matters to strategy and risk appetite

Analytics efforts should prioritise what threatens strategic outcomes:

- cash leakage
- fraud exposure
- service disruption
- regulatory failures
- transformation risks
- third-party failures

Value Creation: prove impact, not activity

Analytics value should be measured in outcomes such as:

- funds recovered / leakage prevented
- process cycle time reduced
- control failures detected earlier
- risk events prevented or reduced
- remediation accelerated
- improved decision confidence

This is what makes Boards sponsor analytics maturity.

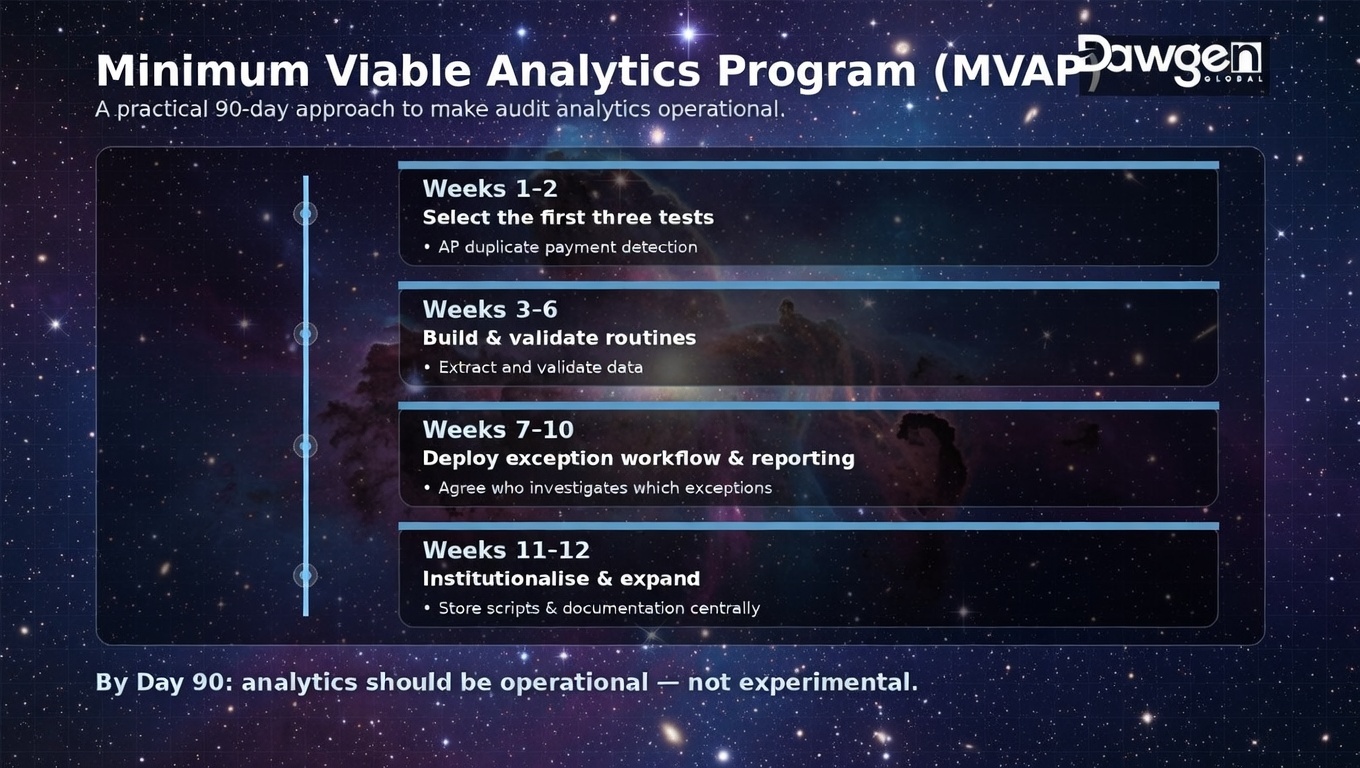

5) The minimum viable analytics program (MVAP): a practical 90-day approach

For most Caribbean organisations, success comes from a minimum viable program that produces results quickly and builds momentum.

Weeks 1–2: Select the first three tests

Pick three tests with high value and accessible data. Example:

- AP duplicate payment detection
- Payroll anomaly scan
- Revenue credit note pattern scan

Define:

- data owner
- extraction method
- definitions
- exception thresholds
- reporting format

Weeks 3–6: Build and validate the routines

- extract and validate data
- run routines
- validate exceptions (false positives vs real issues)
- document logic and evidence
- create repeatable scripts

Weeks 7–10: Deploy exception workflow and reporting

- agree who investigates which exceptions
- document closure evidence
- create a simple dashboard: exceptions by type, value, risk rating, status
- provide management action tracking

Weeks 11–12: Institutionalise and expand

- store scripts and documentation centrally
- embed tests into the audit plan
- train internal staff
- identify next two tests for expansion
- select one continuous monitoring candidate

At 90 days, analytics should be operational, not experimental.

6) What “analytics-first audit” looks like in a real engagement

A traditional audit often begins with:

- process walkthroughs
- sampling plan
- document request lists
- manual testing

An analytics-first audit begins with:

- data extraction
- automated tests to identify exceptions
- targeted fieldwork focused on anomalies
- faster root cause identification
- more persuasive reporting (data-backed)

This approach:

- compresses cycle time
- increases coverage
- improves quality
- strengthens credibility

It also changes stakeholder perception: Internal Audit becomes a source of actionable intelligence, not just compliance findings.

7) Analytics test examples that work well in the Caribbean

Accounts Payable / Payments

- duplicate invoices and duplicate payments
- same invoice number across vendors
- weekend or after-hours payments
- payments without matching PO/GRN (where applicable)
- vendor bank account duplicates
- unusual vendor spikes or dormant vendor activity

Payroll / HR

- bank account duplicates across employees
- ghost employee indicators (no attendance, unusual patterns)
- overtime anomalies by department
- rate overrides and pay adjustments
- unusual allowances or benefits patterns
- terminated employee payments

Revenue / Billing

- credit note and refund patterns
- discount override spikes
- unusual pricing exceptions
- negative margin flags
- unusual voids/cancellations
- revenue timing anomalies

Procurement

- threshold splitting (multiple POs below approval limits)
- vendor concentration and dependency
- sole-source patterns
- late approvals or approvals after receipt
- conflict-of-interest indicators (where data exists)

Inventory

- adjustment and write-off trends
- negative stock and backdated entries
- high shrinkage locations
- unusual movements near period-end
- anomalies in returns and damages

These tests are valuable because they produce actionable exceptions without requiring complex AI.
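To show how simple such a rule can be, here is a sketch of the procurement threshold-splitting test listed above. It flags a vendor when several purchase orders, each below the approval limit, fall within a short window and together reach the limit. The field names, the 5,000 limit, and the seven-day window are assumptions for illustration; overlapping windows for the same vendor may be reported more than once.

```python
from collections import defaultdict
from datetime import date, timedelta


def split_po_candidates(pos, approval_limit, window_days=7):
    """Flag groups of sub-limit POs to one vendor that together reach the limit."""
    by_vendor = defaultdict(list)
    for po in pos:
        # Only POs that individually avoided the approval limit are of interest.
        if po["amount"] < approval_limit:
            by_vendor[po["vendor_id"]].append(po)

    flagged = []
    window = timedelta(days=window_days)
    for vendor, items in by_vendor.items():
        items.sort(key=lambda p: p["po_date"])
        start, total = 0, 0.0
        for end, po in enumerate(items):
            total += po["amount"]
            # Shrink the window from the left until it spans at most window_days.
            while po["po_date"] - items[start]["po_date"] > window:
                total -= items[start]["amount"]
                start += 1
            if end > start and total >= approval_limit:
                flagged.append({
                    "vendor_id": vendor,
                    "po_ids": [p["po_id"] for p in items[start:end + 1]],
                    "total": total,
                })
    return flagged


# Illustrative purchase orders only; not real data.
purchase_orders = [
    {"po_id": "PO1", "vendor_id": "V1", "amount": 4000.0, "po_date": date(2024, 3, 1)},
    {"po_id": "PO2", "vendor_id": "V1", "amount": 3500.0, "po_date": date(2024, 3, 3)},
    {"po_id": "PO3", "vendor_id": "V2", "amount": 3000.0, "po_date": date(2024, 3, 1)},
    {"po_id": "PO4", "vendor_id": "V2", "amount": 3000.0, "po_date": date(2024, 3, 31)},
    {"po_id": "PO5", "vendor_id": "V3", "amount": 9000.0, "po_date": date(2024, 3, 2)},
]
candidates = split_po_candidates(purchase_orders, approval_limit=5000.0)
print(candidates)
```

A flagged group is an indicator, not a finding: legitimate repeat orders can look the same, which is why the exception still needs review against the approval records.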

8) The biggest governance risk: analytics without accountability

Analytics often surfaces uncomfortable truths:

- leakage
- weak approvals
- fraud indicators
- process bypassing
- system weaknesses

Without governance, organisations may:

- ignore exceptions
- argue over data rather than fix controls
- under-resource investigations
- blame the tool or the auditor

That is why exception workflows and audit committee oversight matter. Analytics must connect to accountability.

9) Dawgen’s Borderless Analytics Delivery Model: why it works

Many Caribbean organisations don’t have:

- dedicated audit data analysts
- time to build test libraries
- mature data governance
- capacity to run recurring routines

Dawgen solves this through borderless delivery:

9.1 Test library + playbooks

Dawgen deploys proven test packs with:

- data requirements
- scripts
- exception rules
- reporting templates
- interpretation guidance

9.2 Pods and specialists

A typical analytics pod includes:

- Audit Manager
- Senior Auditor
- Data/Analytics Specialist
- Engagement Lead oversight

9.3 Co-sourcing with capability transfer

Dawgen can build the analytics engine while training your team—so analytics becomes internalised, not outsourced permanently.

9.4 Integration with audit plan and reporting

Because the model is tied to IAVANTAGE™, analytics is embedded into:

- planning (risk signals)
- execution (exception-driven audits)
- reporting (decision-grade dashboards)
- follow-up (remediation and continuous monitoring candidates)

10) A board-ready dashboard for audit analytics

Boards don’t need raw outputs. They need a decision view:

- number and value of exceptions
- high-risk themes (e.g., payments, access, overrides)
- root cause patterns (policy gaps, control failures, system config)
- remediation commitments with dates
- trend: improving/stable/deteriorating
- continuous monitoring candidates

This makes analytics visible and sustainable—because it becomes part of governance.
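As a rough sketch of how the first and fifth items in that decision view might be derived, the snippet below rolls exceptions up by theme and compares the count with the prior period. The trend rule (a change of more than 10% in exception count) and the field names are assumptions for illustration; a real dashboard would agree these definitions with the audit committee.

```python
from collections import defaultdict


def board_summary(current, prior, tolerance=0.10):
    """Summarise exceptions by theme with count, value, and a simple trend label."""
    def totals(exceptions):
        out = defaultdict(lambda: {"count": 0, "value": 0.0})
        for exc in exceptions:
            out[exc["theme"]]["count"] += 1
            out[exc["theme"]]["value"] += exc["value"]
        return out

    now, before = totals(current), totals(prior)
    summary = {}
    for theme in sorted(set(now) | set(before)):
        count, previous = now[theme]["count"], before[theme]["count"]
        # Assumed rule: more exceptions than last period means deteriorating.
        if count > previous * (1 + tolerance):
            trend = "deteriorating"
        elif count < previous * (1 - tolerance):
            trend = "improving"
        else:
            trend = "stable"
        summary[theme] = {"count": count, "value": now[theme]["value"], "trend": trend}
    return summary


# Illustrative exceptions only; not real data.
this_quarter = [
    {"theme": "payments", "value": 100.0},
    {"theme": "payments", "value": 200.0},
    {"theme": "payments", "value": 300.0},
    {"theme": "overrides", "value": 50.0},
]
last_quarter = [
    {"theme": "payments", "value": 80.0},
    {"theme": "overrides", "value": 40.0},
    {"theme": "access", "value": 10.0},
    {"theme": "access", "value": 20.0},
]
dashboard = board_summary(this_quarter, last_quarter)
for theme, row in dashboard.items():
    print(theme, row)
```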

Analytics sticks when it becomes routine

Analytics is not a tool problem. It is an operating model problem.

Analytics sticks when:

- tests are repeatable
- data onboarding is defined
- exceptions flow into action
- evidence is audit-grade
- outcomes are measured

That is what Borderless Assurance means in analytics: faster, broader, more credible assurance—delivered through IAVANTAGE™ and enabled by Dawgen’s digital, borderless model.

Next Step!

If you want audit analytics that delivers measurable value (and doesn’t fade after a pilot), start with a Dawgen Analytics That Stick Starter Program:

- select first 3 high-value tests
- define data onboarding protocol
- deploy repeatable scripts + exception workflow
- produce board-ready dashboard
- embed routines into your audit plan
- option to deploy a borderless analytics pod (co-sourced / outsourced / hybrid)

🔗 Contact form: https://www.dawgen.global/contact-us/

📧 Email: [email protected]

📞 Caribbean: 876-9293670 | 876-9293870

📞 💬 WhatsApp Global: +1 555 795 9071

About Dawgen Global

Embrace BIG FIRM capabilities without the big firm price at Dawgen Global, your committed partner in carving a pathway to continual progress in the vibrant Caribbean region. Our integrated, multidisciplinary approach is finely tuned to address the unique intricacies and lucrative prospects that the region has to offer. Offering a rich array of services, including audit, accounting, tax, IT, HR, risk management, and more, we facilitate smarter and more effective decisions that set the stage for unprecedented triumphs. Let’s collaborate and craft a future where every decision is a stepping stone to greater success. Reach out to explore a partnership that promises not just growth but a future beaming with opportunities and achievements.

Join hands with Dawgen Global. Together, let’s venture into a future brimming with opportunities and achievements.